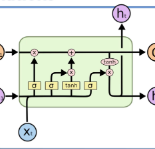

The detection of facial action units (AUs) has been an actively studied and competitive research topic because of its wide-ranging applications. In this paper, we propose a novel framework for AU detection from a single input image that captures the \textbf{c}o-\textbf{o}ccurrence and \textbf{m}utual \textbf{ex}clusion (COMEX) relationships as well as the intensity distribution among AUs. Our algorithm uses facial landmarks to extract local AU features. These features are fed to a bidirectional long short-term memory (BiLSTM) layer that learns the intensity distribution. The resulting AU features are then passed successively through a self-attention encoding layer and a continuous-state modern Hopfield layer to learn the COMEX relationships. Our experiments on the challenging BP4D and DISFA benchmarks, conducted without any external data or pre-trained models, yield F1-scores of 63.7\% and 61.8\% respectively, which shows that the proposed networks can improve performance on the AU detection task.
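To give a feel for the continuous-state modern Hopfield layer mentioned above, the following is a minimal sketch of the standard retrieval update for such networks: the new state is a softmax-weighted combination of stored patterns, with weights given by the scaled dot product between each pattern and the current state. This is not the authors' implementation. In the paper the layer is trained end to end together with the BiLSTM and self-attention layers, whereas here the stored patterns, the query vector, and the value of `beta` are all hypothetical toy values chosen for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def hopfield_update(patterns, query, beta=1.0):
    """One update step of a continuous-state modern Hopfield network.

    patterns: list of stored vectors, each of length d
    query:    current state vector of length d
    beta:     inverse temperature; larger values sharpen retrieval

    Returns sum_i softmax(beta * <x_i, query>)_i * x_i.
    """
    scores = [beta * sum(p * q for p, q in zip(pattern, query))
              for pattern in patterns]
    weights = softmax(scores)
    dim = len(query)
    return [sum(w * pattern[k] for w, pattern in zip(weights, patterns))
            for k in range(dim)]


# Hypothetical toy data: each stored pattern marks a group of AUs that
# tend to co-occur; the two groups are mutually exclusive.
stored = [
    [1.0, 1.0, 0.0, 0.0],  # first two AUs active together
    [0.0, 0.0, 1.0, 1.0],  # last two AUs active together
]

# A noisy per-AU activation vector (assumed values, not real data).
noisy = [0.9, 0.6, 0.1, 0.0]

# With a large beta the update snaps to the closest stored pattern.
retrieved = hopfield_update(stored, noisy, beta=8.0)

# With beta = 0 all patterns are weighted equally (plain averaging).
averaged = hopfield_update(stored, noisy, beta=0.0)
```

In this toy setting, a large `beta` pulls the weak second activation up towards the first stored pattern and suppresses the activations that belong to the mutually exclusive group, which is the intuition behind using such a layer to model COMEX relationships.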
