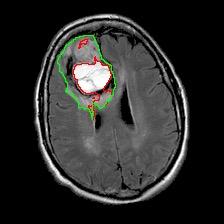

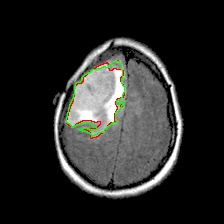

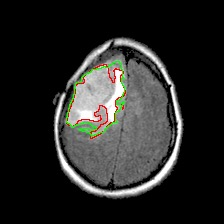

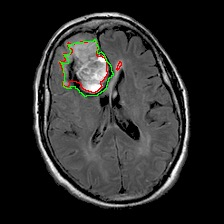

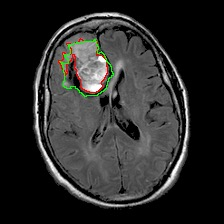

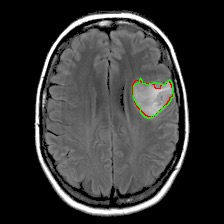

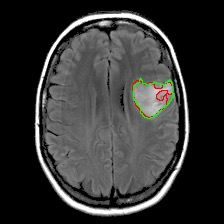

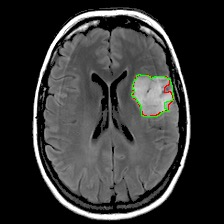

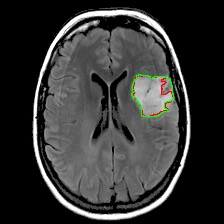

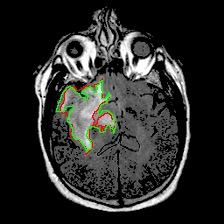

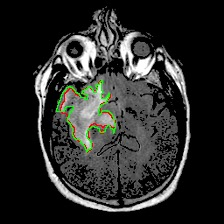

Neural processes have recently emerged as a class of powerful neural latent variable models that combine the strengths of neural networks and stochastic processes. Because they can encode contextual data in the network's function space, they offer a new way to model task relatedness in multi-task learning. To study their potential, we develop multi-task neural processes, a new variant of neural processes for multi-task learning. In particular, we propose to explore transferable knowledge from related tasks in the function space to provide an inductive bias for improving each individual task. To do so, we derive the function priors in a hierarchical Bayesian inference framework, which enables each task to incorporate the shared knowledge provided by related tasks into the context of its prediction function. Our multi-task neural processes methodologically expand the scope of vanilla neural processes and provide a new way of exploring task relatedness in function spaces for multi-task learning. The proposed multi-task neural processes are capable of learning multiple tasks with limited labeled data and in the presence of domain shift. We perform extensive experimental evaluations on several benchmarks for multi-task regression and classification. The results demonstrate the effectiveness of multi-task neural processes in transferring useful knowledge among tasks for multi-task learning, and show superior performance in multi-task classification and brain image segmentation.
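
For orientation, the objective of a vanilla neural process, which the abstract takes as its starting point, can be sketched as follows. This is the standard single-task evidence lower bound, not the multi-task objective of this work. The symbols are notation assumed here: $C$ for the context set, $T$ for the target set, and $z$ for the latent function variable.

```latex
\log p(y_T \mid x_T, C)
  \;\ge\;
  \mathbb{E}_{q(z \mid C \cup T)}
    \Big[ \sum_{i \in T} \log p(y_i \mid x_i, z) \Big]
  \;-\;
  \mathrm{KL}\big( q(z \mid C \cup T) \,\big\|\, p(z \mid C) \big)
```

In this single-task form the prior $p(z \mid C)$ depends only on the task's own context. The hierarchical Bayesian framework described above would presumably let each task's prior also depend on information from related tasks, but the abstract does not give its exact form, so none is written out here.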
