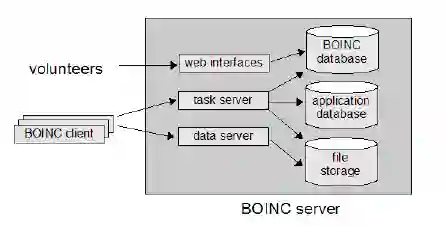

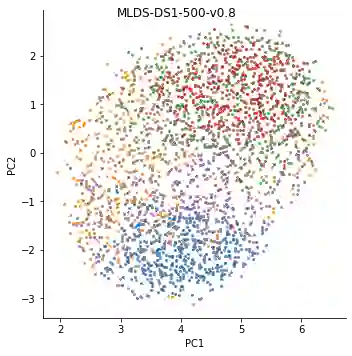

Neural networks are powerful models that solve a variety of complex real-world problems. However, the stochastic nature of training and the large number of parameters in a typical neural model make them difficult to evaluate by inspection. Research shows that this opacity can hide latent undesirable behavior, whether it arises from poorly representative training data or from a malicious attempt to subvert the network, and that such behavior is difficult to detect with traditional indirect evaluation criteria such as loss. It is therefore time to explore direct ways to evaluate a trained neural model through its structure and weights. In this paper we present MLDS, a new dataset consisting of thousands of trained neural networks with carefully controlled parameters, generated on a global volunteer-based distributed computing platform. This dataset enables new insights into both model-to-model and model-to-training-data relationships. We use it to show that models trained on identical training data cluster in weight-space, and that even a small change to the training data produces meaningful divergence in weight-space, suggesting that weight-space analysis is a viable and effective alternative to loss for evaluating neural networks.
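To make the weight-space comparison concrete, the following is a minimal sketch and not the procedure used to build or analyze MLDS. It assumes that every model shares one architecture, that each model's weights can be flattened into a single vector, and that Euclidean distance is a reasonable measure of separation. The helper names (`flatten_weights`, `mean_distance`, `make_toy_model`) and the synthetic "models" are hypothetical and exist only for illustration.

```python
import itertools
import math
import random


def flatten_weights(layers):
    """Concatenate per-layer weight matrices (lists of rows) into one flat vector."""
    return [w for layer in layers for row in layer for w in row]


def euclidean_distance(u, v):
    """Distance between two flattened models of identical architecture."""
    if len(u) != len(v):
        raise ValueError("models must have the same number of weights")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def mean_distance(group_a, group_b=None):
    """Mean pairwise distance within one group, or between two groups."""
    if group_b is None:
        pairs = list(itertools.combinations(group_a, 2))
    else:
        pairs = list(itertools.product(group_a, group_b))
    return sum(euclidean_distance(u, v) for u, v in pairs) / len(pairs)


def make_toy_model(center, noise, rng, rows=2, cols=3):
    """Hypothetical stand-in for a trained model: one layer of noisy weights."""
    return [[[center + rng.gauss(0.0, noise) for _ in range(cols)]
             for _ in range(rows)]]


rng = random.Random(0)
# Assumption: group A stands in for models trained on identical data,
# group B for models trained on slightly altered data.
group_a = [flatten_weights(make_toy_model(0.0, 0.05, rng)) for _ in range(5)]
group_b = [flatten_weights(make_toy_model(1.0, 0.05, rng)) for _ in range(5)]

within_a = mean_distance(group_a)
between = mean_distance(group_a, group_b)
print(f"within-group: {within_a:.3f}, between-group: {between:.3f}")
```

In this toy setup the two groups are constructed to be well separated, so the between-group distance exceeds the within-group distance by design. On real trained networks, whether such separation appears is the empirical question the dataset is meant to address.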
