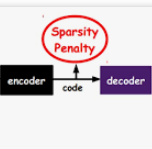

With rising uncertainty in the real world, online Reinforcement Learning (RL) has been receiving increasing attention due to its fast learning capability and improved data efficiency. However, online RL often suffers from complex Value Function Approximation (VFA) and catastrophic interference, which makes it difficult to apply deep neural networks to RL algorithms in a fully online setting. We therefore introduce a simpler and more adaptive approach that evaluates the value function with a kernel-based model. Sparse representations are superior at handling interference, and, compared with current sparse representation methods, competitive sparse representations should be learnable, non-prior, non-truncated and explicit. To learn such sparse representations, attention mechanisms are used to represent the degree of sparsification, and a smooth attentive function is introduced into the kernel-based VFA. In this paper, we propose an Online Attentive Kernel-Based Temporal Difference (OAKTD) algorithm using two-timescale optimization and provide a convergence analysis of the proposed algorithm. Experimental evaluations show that OAKTD outperforms several Online Kernel-based Temporal Difference (OKTD) learning algorithms, as well as the Temporal Difference (TD) learning algorithm with Tile Coding, on the public Mountain Car, Acrobot, CartPole and Puddle World tasks.
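To make the ingredients named in the abstract concrete, the following is a minimal sketch of a kernel-based TD(0) learner with a smooth attention mask and two step sizes. It is not the OAKTD algorithm itself: the fixed dictionary of kernel centers, the sigmoid attention form, the Gaussian kernel, the class name `AttentiveKernelTD`, and all hyperparameter values are assumptions chosen only for illustration.

```python
import numpy as np


def gaussian_features(state, centers, bandwidth):
    """Gaussian kernel between `state` and every dictionary center."""
    sq_dist = np.sum((centers - state) ** 2, axis=1)
    return np.exp(-sq_dist / (2.0 * bandwidth ** 2))


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class AttentiveKernelTD:
    """Hypothetical sketch: V(s) = theta . (a(s) * k(s)).

    k(s) are kernel features over a fixed dictionary (assumption) and
    a(s) = sigmoid(W s + b) is a smooth mask in (0, 1) that softly
    sparsifies them (assumed form of the attentive function).
    """

    def __init__(self, centers, state_dim, bandwidth=0.5, gamma=0.99,
                 alpha_fast=0.1, alpha_slow=0.01, seed=0):
        rng = np.random.default_rng(seed)
        self.centers = np.asarray(centers, dtype=float)
        n = len(self.centers)
        self.bandwidth = bandwidth
        self.gamma = gamma
        self.alpha_fast = alpha_fast   # step size for the value weights
        self.alpha_slow = alpha_slow   # step size for the attention parameters
        self.theta = np.zeros(n)
        self.W = 0.1 * rng.standard_normal((n, state_dim))
        self.b = np.zeros(n)

    def _forward(self, state):
        state = np.asarray(state, dtype=float)
        k = gaussian_features(state, self.centers, self.bandwidth)
        a = sigmoid(self.W @ state + self.b)
        return k, a

    def value(self, state):
        k, a = self._forward(state)
        return float(self.theta @ (a * k))

    def update(self, state, reward, next_state, done):
        """One semi-gradient TD(0) step; returns the TD error."""
        state = np.asarray(state, dtype=float)
        k, a = self._forward(state)
        v = float(self.theta @ (a * k))
        v_next = 0.0 if done else self.value(next_state)
        delta = reward + self.gamma * v_next - v

        # Gradients of V(state) only (the bootstrap target is held fixed).
        grad_theta = a * k
        grad_pre = self.theta * k * a * (1.0 - a)  # w.r.t. W s + b

        # Two timescales: value weights move faster than attention.
        self.theta += self.alpha_fast * delta * grad_theta
        self.W += self.alpha_slow * delta * np.outer(grad_pre, state)
        self.b += self.alpha_slow * delta * grad_pre
        return delta
```

A typical call would be `agent.update(s, r, s_next, done)` once per environment transition. Keeping `alpha_slow` well below `alpha_fast` is one simple way to approximate the two-timescale separation mentioned in the abstract; the schedules actually required for the convergence analysis are not stated here and are not reproduced.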
