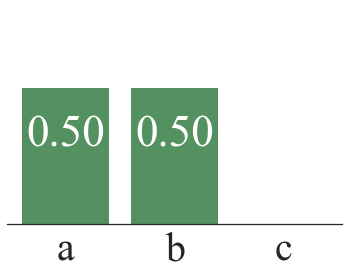

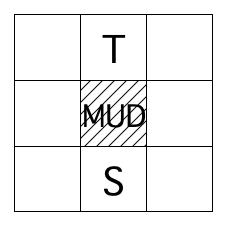

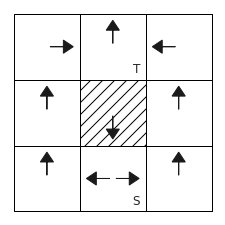

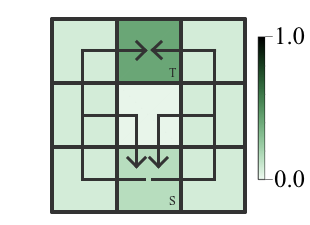

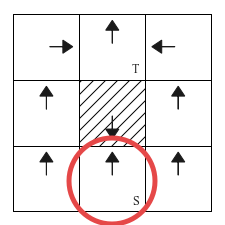

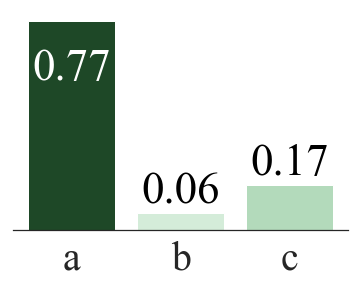

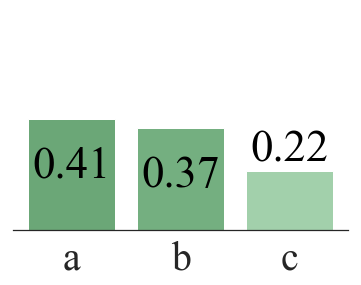

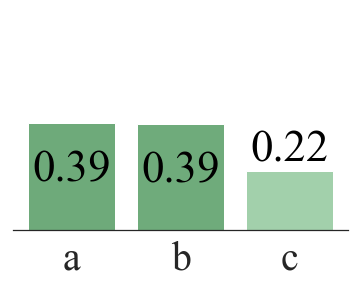

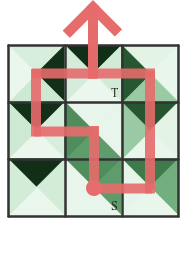

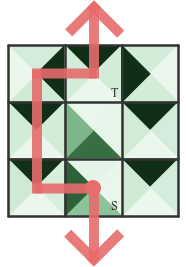

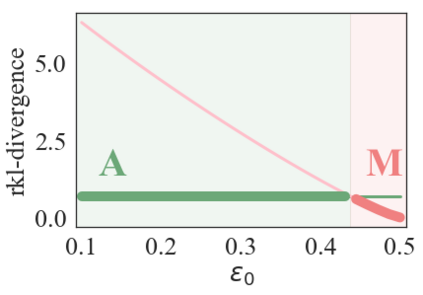

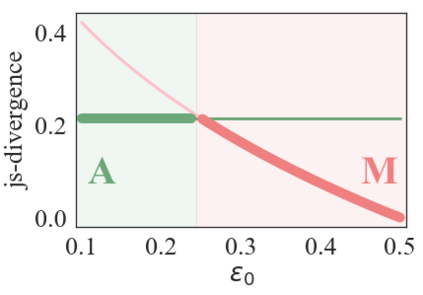

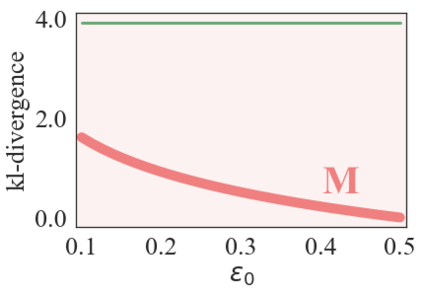

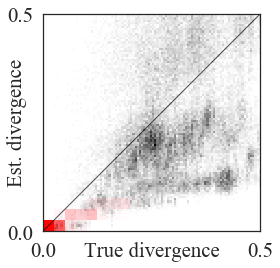

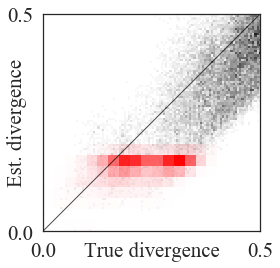

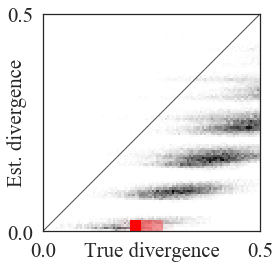

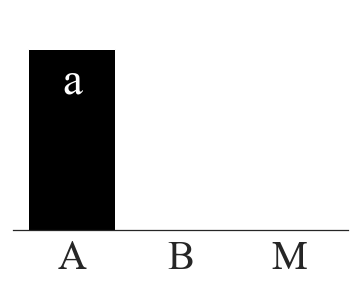

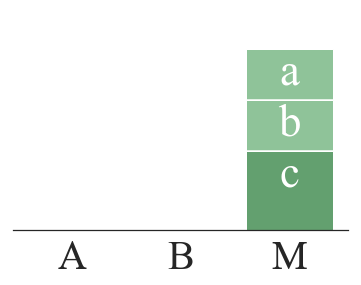

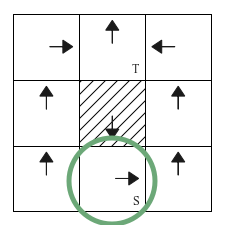

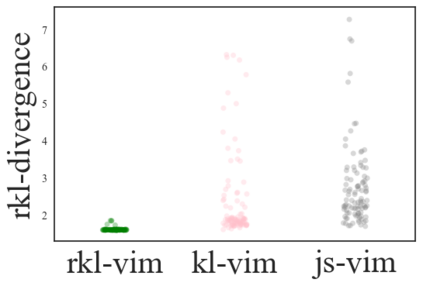

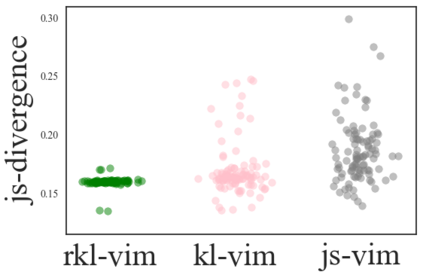

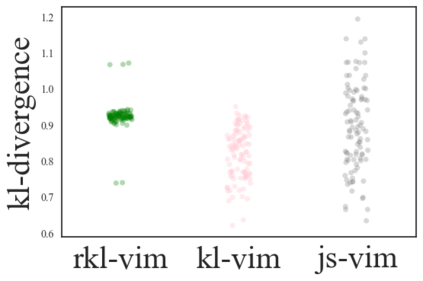

We address the problem of imitation learning with multi-modal demonstrations. Instead of attempting to learn all modes, we argue that in many tasks it is sufficient to imitate any one of them. We show that state-of-the-art methods such as GAIL and behavior cloning, due to their choice of loss function, often incorrectly interpolate between such modes. Our key insight is to minimize the right divergence between the learner and the expert state-action distributions, namely the reverse KL divergence or I-projection. We propose a general imitation learning framework for estimating and minimizing any f-divergence. By plugging in different divergences, we are able to recover existing algorithms such as behavior cloning (Kullback-Leibler), GAIL (Jensen-Shannon), and DAgger (total variation). Empirical results show that our approximate I-projection technique is able to imitate multi-modal behaviors more reliably than GAIL and behavior cloning.
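The difference between the two KL directions can be seen in a toy setting. The sketch below is not the paper's algorithm: it assumes a hypothetical one-dimensional expert with two equally likely modes at -2 and +2, and a learner restricted to a single Gaussian of fixed width whose mean is chosen by brute-force search. All names (`gaussian`, `kl`, `expert`, `means`) are illustrative. Under these assumptions, minimizing the forward KL, KL(expert || learner), should place the learner between the modes, while minimizing the reverse KL, KL(learner || expert), should lock onto one of them.

```python
import numpy as np

def gaussian(x, mean, std):
    """Density of N(mean, std^2) evaluated on the grid x."""
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2.0 * np.pi))

def kl(p, q):
    """Discrete KL(p || q) for two strictly positive probability vectors."""
    return float(np.sum(p * (np.log(p) - np.log(q))))

# Grid over a 1-D action space (a toy stand-in for the state-action space).
x = np.linspace(-8.0, 8.0, 1601)

# Hypothetical bimodal expert: two equally likely modes at -2 and +2.
expert = 0.5 * gaussian(x, -2.0, 0.5) + 0.5 * gaussian(x, 2.0, 0.5)
expert /= expert.sum()

# Unimodal learner family: Gaussians with fixed width and a free mean.
means = np.linspace(-4.0, 4.0, 161)
forward_kl, reverse_kl = [], []
for m in means:
    learner = gaussian(x, m, 0.5)
    learner /= learner.sum()
    forward_kl.append(kl(expert, learner))  # KL(expert || learner), M-projection
    reverse_kl.append(kl(learner, expert))  # KL(learner || expert), I-projection

# Brute-force minimizers over the candidate means.
mean_forward = means[int(np.argmin(forward_kl))]
mean_reverse = means[int(np.argmin(reverse_kl))]
print(f"forward KL picks mean {mean_forward:+.2f}")
print(f"reverse KL picks mean {mean_reverse:+.2f}")
```

In this toy setup the forward-KL minimizer sits at the midpoint between the two modes, where the expert places almost no probability mass, whereas the reverse-KL minimizer sits on one of the two modes. Which mode it picks is arbitrary here, since the problem is symmetric.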
