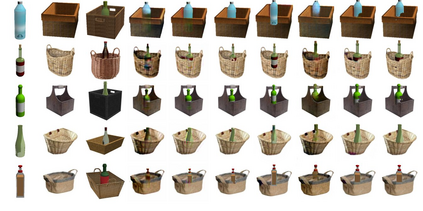

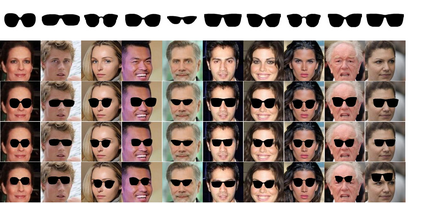

Generative Adversarial Networks (GANs) can produce images of surprising complexity and realism, but are generally modeled to sample from a single latent source ignoring the explicit spatial interaction between multiple entities that could be present in a scene. Capturing such complex interactions between different objects in the world, including their relative scaling, spatial layout, occlusion, or viewpoint transformation is a challenging problem. In this work, we propose to model object composition in a GAN framework as a self-consistent composition-decomposition network. Our model is conditioned on the object images from their marginal distributions to generate a realistic image from their joint distribution by explicitly learning the possible interactions. We evaluate our model through qualitative experiments and user evaluations in both the scenarios when either paired or unpaired examples for the individual object images and the joint scenes are given during training. Our results reveal that the learned model captures potential interactions between the two object domains given as input to output new instances of composed scene at test time in a reasonable fashion.

翻译:生成自变网络(GANs) 能够产生出令人惊讶的复杂性和现实主义的图像,但通常被建模为样本,从单一的潜在来源进行抽样,忽略了多个实体之间可能出现在场景中的明显的空间互动。捕捉世界不同物体之间的这种复杂互动,包括它们相对的缩放、空间布局、隔离或观点转换,是一个具有挑战性的问题。在这项工作中,我们提议将GAN框架中的物体构成建模为一个自相矛盾的构成分解网络。我们的模型以其边际分布的物体图像为条件,通过明确学习可能的相互作用,从联合分布中产生现实图像。我们在培训期间对单个物体图像和联合场景进行配对或无偏差的示例时,通过定性实验和用户评价来评估我们的模型。我们的结果显示,所学的模型捕捉了两个对象领域之间的潜在互动,作为输入输入在合理的时间测试场景中显示的新场景。