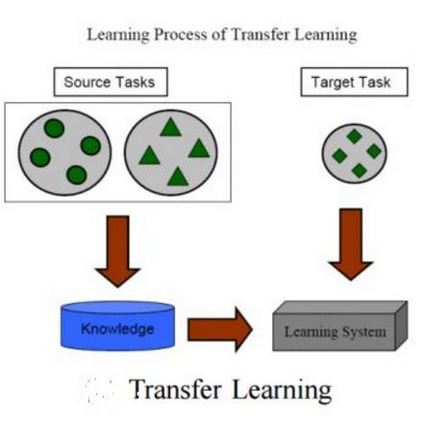

While a key component of the success of deep learning is the availability of massive amounts of training data, medical image datasets are often limited in diversity and size. Transfer learning has the potential to bridge the gap between related yet different domains. For medical applications, however, it remains unclear whether it is more beneficial to pre-train on natural or medical images. We aim to shed light on this problem by comparing ImageNet and RadImageNet initialization on seven medical classification tasks. We investigate the learned representations with Canonical Correlation Analysis (CCA) and compare the predictions of the different models. We find that, overall, the models pre-trained on ImageNet outperform those pre-trained on RadImageNet. Our results show that, contrary to intuition, models pre-trained on ImageNet and on RadImageNet converge to distinct intermediate representations, and that these representations are even more dissimilar after fine-tuning. Despite these distinct representations, the predictions of the models remain similar. Our findings challenge the notion that transfer learning is effective due to the reuse of general features in the early layers of a convolutional neural network, and show that weight similarity before and after fine-tuning is negatively related to performance gains.
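To make the representation comparison concrete, the following is a minimal sketch of a CCA-based similarity score between two sets of layer activations. It is an illustration only: the abstract does not state which CCA variant the authors use, and the function name `mean_cca_similarity`, the `eps` threshold, and the choice of averaging the canonical correlations are assumptions. Both activation matrices are assumed to come from the same inputs in the same order, flattened to shape `(n_samples, n_features)`.

```python
import numpy as np


def mean_cca_similarity(acts_a, acts_b, eps=1e-10):
    """Mean canonical correlation between two activation matrices.

    Hypothetical helper for illustration, not the authors' implementation.
    acts_a: array of shape (n_samples, d_a)
    acts_b: array of shape (n_samples, d_b)
    Rows must correspond to the same inputs in both matrices.
    """
    # Center each feature so correlations are not affected by offsets.
    a = acts_a - acts_a.mean(axis=0, keepdims=True)
    b = acts_b - acts_b.mean(axis=0, keepdims=True)

    # Orthonormal bases for the column spaces of the centered activations.
    ua, sa, _ = np.linalg.svd(a, full_matrices=False)
    ub, sb, _ = np.linalg.svd(b, full_matrices=False)

    # Drop numerically degenerate directions (eps is an assumed threshold).
    ua = ua[:, sa > eps * sa.max()]
    ub = ub[:, sb > eps * sb.max()]

    # The canonical correlations are the singular values of Ua^T Ub.
    rho = np.linalg.svd(ua.T @ ub, compute_uv=False)
    return float(np.clip(rho, 0.0, 1.0).mean())


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(2000, 5))
    # An invertible linear map of x spans the same subspace as x.
    y = x @ rng.normal(size=(5, 5))
    # Independent activations should share almost no structure.
    z = rng.normal(size=(2000, 5))
    print("linear map:", mean_cca_similarity(x, y))
    print("independent:", mean_cca_similarity(x, z))
```

A score near 1 means one layer's activations are close to a linear transformation of the other's, while a score near 0 means the two layers share little linear structure. Because CCA is invariant to invertible linear transformations, it can compare layers across networks whose individual units do not align one to one.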
