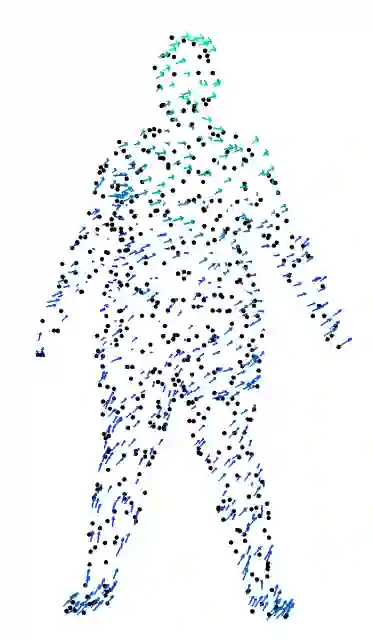

Object reconstruction from 3D point clouds has achieved impressive progress in the computer vision and computer graphics research fields. However, reconstruction from time-varying point clouds (a.k.a. 4D point clouds) has generally been overlooked. In this paper, we propose a new network architecture, namely RFNet-4D, that jointly reconstructs objects and their motion flows from 4D point clouds. The key insight is that performing both tasks simultaneously, by learning spatial and temporal features from a sequence of point clouds, lets each task benefit the other, leading to improved overall performance. To demonstrate this, we design a temporal vector field learning module that uses an unsupervised learning approach for flow estimation, aided by supervised learning of spatial structures for object reconstruction. Extensive experiments and analyses on a benchmark dataset validate the effectiveness and efficiency of our method. As shown in the experimental results, our method achieves state-of-the-art performance on both flow estimation and object reconstruction while running much faster than existing methods in both training and inference. Our code and data are available at https://github.com/hkust-vgd/RFNet-4D
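The abstract does not state which loss drives the unsupervised flow branch. As a hedged illustration only, one common self-supervised choice in point-cloud flow work is to warp frame t by the predicted flow and measure the Chamfer distance to frame t+1, which requires no ground-truth point correspondences. The sketch below assumes NumPy and uses hypothetical names (`chamfer_distance`, `unsupervised_flow_loss`); it is not RFNet-4D's actual objective.

```python
import numpy as np


def chamfer_distance(a: np.ndarray, b: np.ndarray) -> float:
    """Symmetric Chamfer distance between point sets a (N, 3) and b (M, 3)."""
    # Pairwise squared Euclidean distances, shape (N, M).
    d = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    # Mean nearest-neighbour distance from a to b plus from b to a.
    return float(d.min(axis=1).mean() + d.min(axis=0).mean())


def unsupervised_flow_loss(points_t: np.ndarray,
                           flow_t: np.ndarray,
                           points_next: np.ndarray) -> float:
    """Hypothetical self-supervised flow loss (illustrative assumption).

    Moves every point of frame t by its predicted flow vector and compares
    the result with frame t+1; no correspondences between frames are used.
    """
    warped = points_t + flow_t
    return chamfer_distance(warped, points_next)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    p0 = rng.normal(size=(128, 3))
    shift = np.array([0.1, 0.0, -0.05])
    p1 = p0 + shift  # frame t+1 is a rigid translation of frame t
    good = unsupervised_flow_loss(p0, np.tile(shift, (128, 1)), p1)
    bad = unsupervised_flow_loss(p0, np.zeros((128, 3)), p1)
    print(good, bad)  # the correct flow yields the lower loss
```

Because the Chamfer distance only matches each point to its nearest neighbour, this kind of loss can be minimized without labelled motion, which is what makes it usable for unsupervised flow estimation; in a real network the same computation would be written in a differentiable framework so gradients reach the flow predictor.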