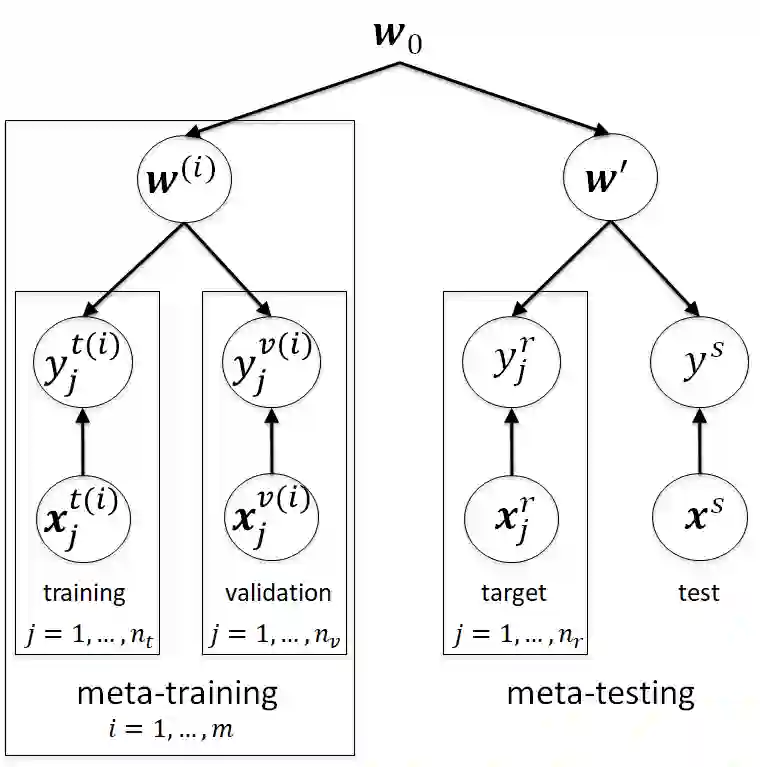

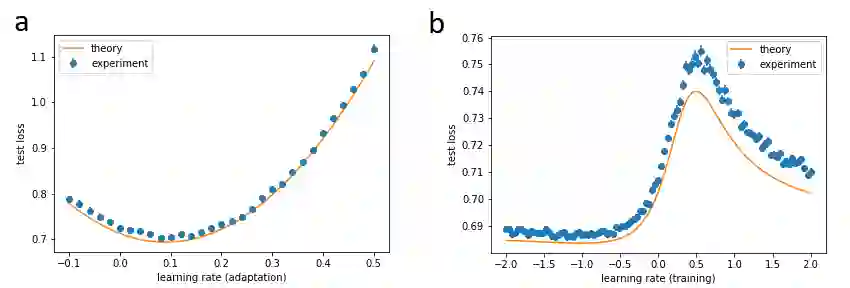

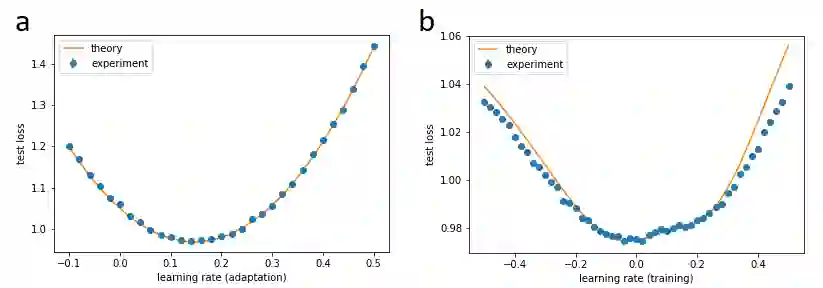

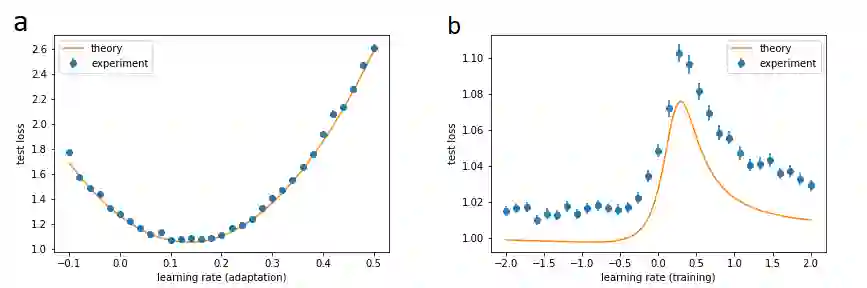

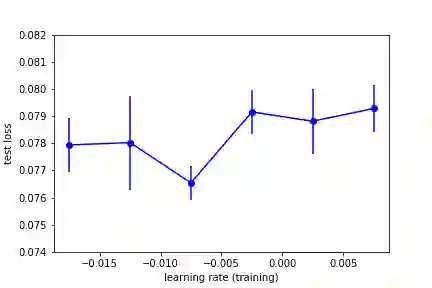

Deep learning models require large amounts of data to perform well. When data is scarce for a target task, we can transfer the knowledge gained by training on similar tasks to learn the target quickly. A successful approach is meta-learning, or "learning to learn" a distribution of tasks, where "learning" is represented by an outer loop and "to learn" by an inner loop of gradient descent. However, a number of recent empirical studies argue that the inner loop is unnecessary and that simpler models work equally well or even better. We study the performance of MAML as a function of the learning rate of the inner loop, where a learning rate of zero means that there is no inner loop. Using random matrix theory and exact solutions of linear models, we calculate an algebraic expression for the test loss of MAML applied to mixed linear regression and to nonlinear regression with overparameterized models. Surprisingly, while the optimal learning rate for adaptation is positive, we find that the optimal learning rate for training is always negative, a setting that has never been considered before. Therefore, not only does performance improve when the training learning rate is decreased to zero, as suggested by recent work, but it improves even further when the learning rate is decreased to negative values. These results help clarify under what circumstances meta-learning performs best.
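To make the role of the inner-loop learning rate concrete, here is a minimal NumPy sketch of one-step MAML on linear regression tasks. It is an illustration under stated assumptions, not the paper's experimental setup: the function names, the task sampler (`sample_task`, with task weights scattered around a shared center), the squared-error loss, and all hyperparameter values are hypothetical choices made for this example. The point it shows is that the inner learning rate `lr` enters the meta-gradient only through the factor `I - lr * X^T X / n`, so `lr = 0` removes the inner loop and a negative `lr` is a perfectly well-defined setting.

```python
import numpy as np

def loss(w, X, y):
    """Mean squared error with a 1/2 factor: (1 / 2n) * ||Xw - y||^2."""
    r = X @ w - y
    return 0.5 * np.mean(r ** 2)

def grad(w, X, y):
    """Gradient of `loss` with respect to w."""
    return X.T @ (X @ w - y) / len(y)

def inner_step(w, X, y, lr):
    """One inner-loop gradient step; lr may be zero or negative."""
    return w - lr * grad(w, X, y)

def meta_loss(w, Xt, yt, Xv, yv, lr):
    """Validation loss after adapting w on the task's training data."""
    return loss(inner_step(w, Xt, yt, lr), Xv, yv)

def meta_grad(w, Xt, yt, Xv, yv, lr):
    """Exact gradient of `meta_loss` w.r.t. the initial weights w.

    For a linear model the Jacobian of the inner step is
    I - lr * Xt^T Xt / n, so no automatic differentiation is needed.
    """
    w_adapted = inner_step(w, Xt, yt, lr)
    jac = np.eye(len(w)) - lr * (Xt.T @ Xt) / len(yt)
    return jac.T @ grad(w_adapted, Xv, yv)

def sample_task(rng, w_center, n_train, n_val, spread=0.5, noise=0.1):
    """Hypothetical task sampler: task weights scattered around w_center."""
    dim = len(w_center)
    w_task = w_center + spread * rng.normal(size=dim)
    Xt = rng.normal(size=(n_train, dim))
    Xv = rng.normal(size=(n_val, dim))
    yt = Xt @ w_task + noise * rng.normal(size=n_train)
    yv = Xv @ w_task + noise * rng.normal(size=n_val)
    return Xt, yt, Xv, yv

def meta_train(lr_train, dim=5, steps=200, outer_lr=0.05, seed=0):
    """Outer loop: plain SGD on the meta-loss, one sampled task per step."""
    rng = np.random.default_rng(seed)
    w_center = rng.normal(size=dim)
    w = np.zeros(dim)
    for _ in range(steps):
        Xt, yt, Xv, yv = sample_task(rng, w_center, n_train=10, n_val=10)
        w = w - outer_lr * meta_grad(w, Xt, yt, Xv, yv, lr_train)
    return w
```

Calling `meta_train(lr_train=0.1)`, `meta_train(lr_train=0.0)`, and `meta_train(lr_train=-0.1)` runs the same outer loop with a positive, absent, and negative inner loop respectively. The sketch only demonstrates the mechanics; it makes no claim about which setting gives the lowest test loss in this toy configuration.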
