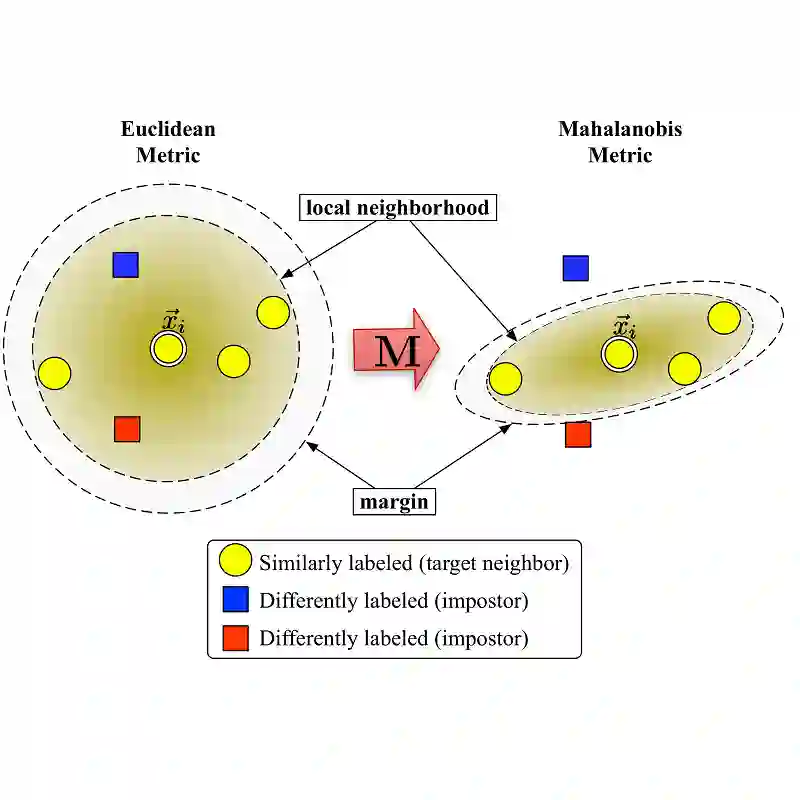

Few-shot learning aims to learn classifiers for new classes from only a few training examples per class. Existing meta-learning-based and metric-learning-based few-shot learning approaches are limited in handling diverse domains with varying numbers of labels. The meta-learning approaches train a meta learner to predict the weights of task-specific networks that share a homogeneous structure, which requires a uniform number of classes across tasks. The metric-learning approaches learn one task-invariant metric for all tasks, and they fail if the tasks diverge. We propose to address these limitations with meta metric learning. Our meta metric learning approach consists of two parts. The task-specific learners exploit metric learning to handle flexible labels. The meta learner discovers good parameters and gradient-descent updates that specify the metrics in the task-specific learners. The proposed model is therefore able to handle unbalanced classes and to generate task-specific metrics. We test our approach in the "$k$-shot $N$-way" few-shot learning setting used in previous work. We also test it in a new, realistic few-shot setting with diverse multi-domain tasks and flexible label numbers. Experiments show that our approach achieves superior performance in both settings.
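The sketch below illustrates the general idea of combining a metric-based task learner with meta-learned adaptation. It is a minimal example under stated assumptions and is not the paper's model. It makes the following assumptions:

* The task-specific metric is a diagonally weighted squared Euclidean distance $d_k(x) = \sum_j m_j (x_j - c_{kj})^2$ to class prototypes $c_k$. The prototypes are class means, as in prototypical networks.
* The meta learner supplies an initial weight vector `m0` and per-dimension step sizes `alpha`, in the style of Meta-SGD.
* The task learner takes one gradient step on the support-set cross-entropy.

All function names are hypothetical. The outer meta-training loop that would learn `m0` and `alpha` across tasks is omitted.

```python
import math
from collections import defaultdict


def prototypes(support):
    """Mean feature vector per label; works for any number of classes
    and any (unbalanced) number of shots per class."""
    sums, counts = {}, defaultdict(int)
    for x, y in support:
        if y not in sums:
            sums[y] = [0.0] * len(x)
        sums[y] = [s + xi for s, xi in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def distances(x, protos, m):
    """Weighted squared Euclidean distance d_k = sum_j m_j (x_j - c_kj)^2."""
    return {y: sum(mj * (xj - cj) ** 2 for mj, xj, cj in zip(m, x, c))
            for y, c in protos.items()}


def softmax_neg(d):
    """Class probabilities p_k proportional to exp(-d_k), computed stably."""
    lo = min(d.values())
    e = {y: math.exp(-(v - lo)) for y, v in d.items()}
    z = sum(e.values())
    return {y: v / z for y, v in e.items()}


def support_grad(support, protos, m):
    """Gradient of the mean support-set cross-entropy w.r.t. m:
    dL/dm_j = (x_j - c_yj)^2 - sum_k p_k (x_j - c_kj)^2."""
    g = [0.0] * len(m)
    for x, y in support:
        p = softmax_neg(distances(x, protos, m))
        for j in range(len(m)):
            expected = sum(p[k] * (x[j] - c[j]) ** 2 for k, c in protos.items())
            g[j] += ((x[j] - protos[y][j]) ** 2 - expected) / len(support)
    return g


def adapt_metric(support, m0, alpha):
    """One task-specific step m' = max(0, m0 - alpha * grad).
    m0 and alpha stand in for meta-learned quantities (assumption);
    clipping at 0 keeps the metric weights non-negative."""
    protos = prototypes(support)
    g = support_grad(support, protos, m0)
    m = [max(0.0, mj - aj * gj) for mj, aj, gj in zip(m0, alpha, g)]
    return protos, m


def predict(x, protos, m):
    """Assign x to the label of the nearest prototype under metric m."""
    d = distances(x, protos, m)
    return min(d, key=d.get)
```

The prototypes are stored in a dictionary keyed by label. As a result, the number of classes $N$ and the number of shots per class can differ from task to task, and no fixed-size output layer is needed.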

翻译:少见的学习旨在为新班级学习分类,每个班只有几个培训实例; 现有的元学习或基于衡量学习的少见学习方法在以不同标签处理不同领域方面受到限制; 元学习方法培训元学习者,以预测单一结构任务特定网络的重量,要求不同任务的不同班级统一数量; 衡量学习方法对所有任务都学习一个任务差异性指标,如果任务不同,它们就会失败。 我们提议用元指标学习来应对这些限制。 我们的元指标学习方法由特定任务学习者组成,利用指标学习来处理灵活标签,以及一个元学习者,发现好参数和梯度,以具体任务学习者具体指标。 因此,拟议的模型能够处理不平衡的班级,并产生任务特定指标。 我们用“$-一毛-一毛-一毛-毛-毛-毛-毛-毛-毛-毛”来测试我们的方法。 我们提议用“一毛”的学习设置来测试我们的方法。 我们的微小的学习方法由特定任务学习者组成, 利用标准学习方法处理灵活多重任务和标签数字的新现实的几分制。 实验显示我们的方法在两个环境中都取得了优业绩。