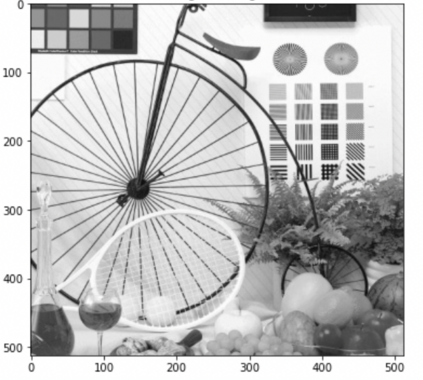

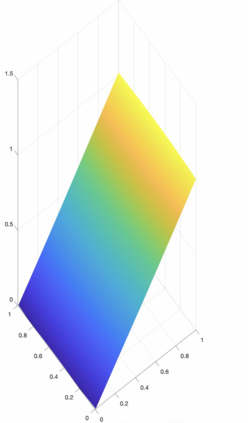

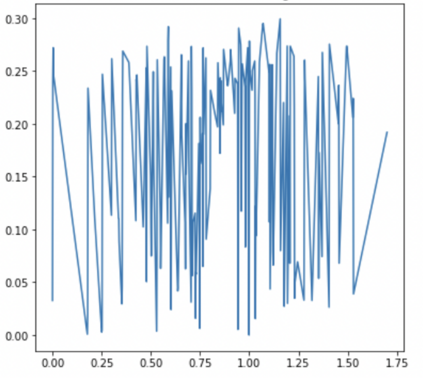

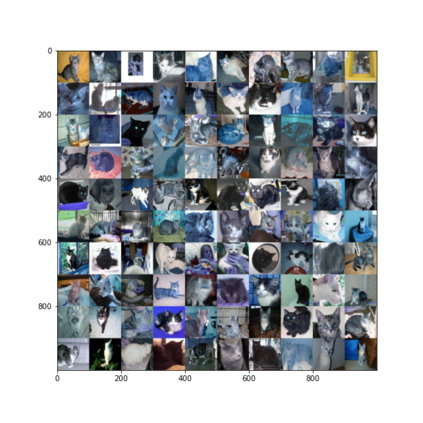

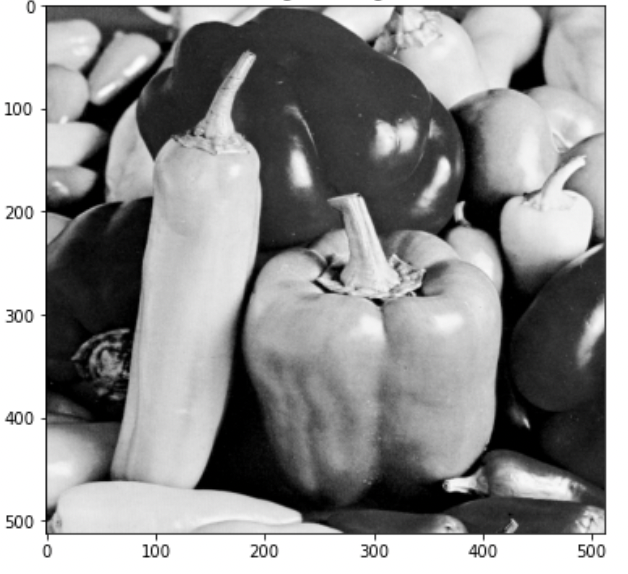

We explain how to use the Kolmogorov Superposition Theorem (KST) to overcome the curse of dimensionality when approximating multi-dimensional functions and learning multi-dimensional data sets with neural networks of two hidden layers. Specifically, there is a class of functions, called $K$-Lipschitz continuous, for which the $K$-outer function $g$ of $f$ is Lipschitz continuous. Such functions can be approximated by a two-layer ReLU network with widths $(2d+1)dn$ and $dn$, where $d$ is the dimension, at an approximation order of $O(d^2/n)$. In addition, we show that multi-layer neural networks of appropriate widths can reproduce polynomials of high degree with the activation function $\sigma_\ell(t)=(t_+)^\ell$ for $\ell\ge 2$, where $t_+=\max(t,0)$. The more layers a network has, the higher the degree of the polynomials it can reproduce. Furthermore, we explain how many layers, weights, and neurons are needed to reproduce high-degree polynomials based on $\sigma_\ell$. Finally, we present a mathematical justification for image classification with convolutional neural networks.
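The polynomial-reproduction claim for $\ell=2$ rests on two elementary identities: $t^2=\sigma_2(t)+\sigma_2(-t)$ and $xy=\tfrac14\big((x+y)^2-(x-y)^2\big)$. The Python sketch below is our own illustration under these identities, not the paper's construction or its layer and width counts. The helper names (`sigma`, `square`, `identity`, `product`, `power_of_two_monomial`, `monomial`) are hypothetical, and each one stands for a small subnetwork of $\sigma_2$ neurons rather than an explicit weight matrix. Composing the squaring gadget $k$ times yields $x^{2^k}$, so in this accounting each extra layer can double the reproducible degree.

```python
# Sketch (illustrative, not the paper's construction): exact polynomial
# reproduction with the activation sigma_2(t) = (max(t, 0))**2.
# Each helper stands for a small subnetwork of sigma_2 neurons.

def sigma(t, ell=2):
    """Truncated power activation sigma_ell(t) = (t_+)**ell."""
    return max(t, 0.0) ** ell


def square(t):
    """t**2 = sigma_2(t) + sigma_2(-t): two sigma_2 neurons, exact."""
    return sigma(t) + sigma(-t)


def identity(t):
    """t = ((t+1)**2 - (t-1)**2) / 4: carries a value through a sigma_2 layer (4 neurons)."""
    return (square(t + 1) - square(t - 1)) / 4


def product(x, y):
    """Polarization identity xy = ((x+y)**2 - (x-y)**2) / 4 (4 neurons)."""
    return (square(x + y) - square(x - y)) / 4


def power_of_two_monomial(x, layers):
    """Compose the squaring gadget: after k layers the output is x**(2**k)."""
    h = x
    for _ in range(layers):
        h = square(h)
    return h


def monomial(x, m):
    """Reproduce x**m by binary exponentiation.

    In this sketch each loop iteration counts as one hidden layer of sigma_2
    neurons, so m.bit_length() layers suffice for degree m.
    """
    result, base = 1.0, x  # the constant 1 plays the role of a bias
    while m > 0:
        if m & 1:
            result = product(result, base)  # multiply in the current power of x
        else:
            result = identity(result)       # carry the partial product forward
        base = square(base)                 # next power: base**2
        m >>= 1
    return result


if __name__ == "__main__":
    print(power_of_two_monomial(2.0, 3))  # 256.0
    print(monomial(3.0, 5))               # 243.0
```

Running the file prints `256.0` and `243.0`. Binary exponentiation is only one convenient way to organize the layers. The paper's own counts of layers, weights, and neurons for $\sigma_\ell$ may differ.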