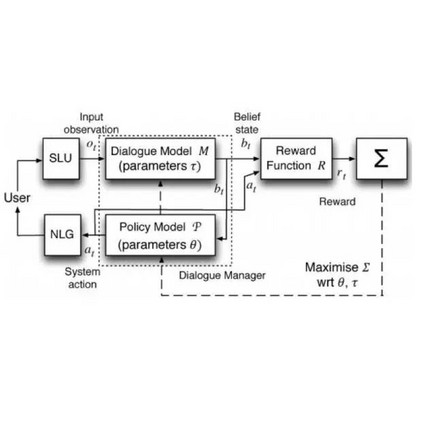

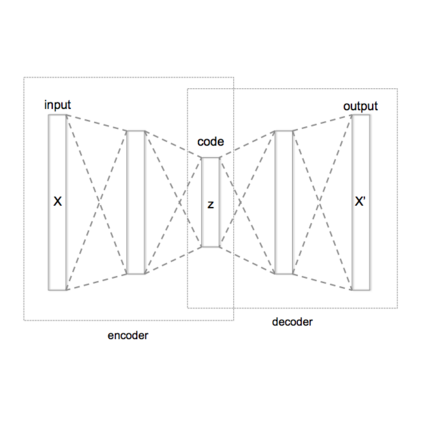

Lifelong learning (LL) is vital for advanced task-oriented dialogue (ToD) systems. To address catastrophic forgetting in LL, generative replay methods are widely used to consolidate past knowledge with generated pseudo samples. However, most existing generative replay methods use only a single task-specific token to control their models. This scheme is usually too weak to constrain the generative model, because a single token carries too little information about the task. In this paper, we propose a novel method, prompt conditioned VAE for lifelong learning (PCLL), which enhances generative replay by incorporating tasks' statistics. PCLL captures task-specific distributions with a conditional variational autoencoder that is conditioned on natural language prompts to guide pseudo-sample generation. Moreover, it uses a distillation process to further consolidate past knowledge by alleviating the noise in pseudo samples. Experiments on natural language understanding tasks of ToD systems demonstrate that PCLL significantly outperforms competitive baselines in building LL models.
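As a rough illustration of the conditional VAE idea mentioned in the abstract, the sketch below computes the standard conditional VAE training objective (a negative ELBO) for diagonal Gaussian latent distributions. It is a generic textbook formulation, not PCLL's actual loss: the function names `kl_diag_gaussians` and `negative_elbo`, the `kl_weight` parameter, and the assumption that both the posterior and the prompt-conditioned prior are diagonal Gaussians are all hypothetical choices made for this example.

```python
import math


def kl_diag_gaussians(mu_q, logvar_q, mu_p, logvar_p):
    """KL(q || p) between two diagonal Gaussians.

    Each argument is a list of floats, one entry per latent dimension.
    Variances are passed as log-variances, as is common in VAE code.
    """
    total = 0.0
    for mq, lq, mp, lp in zip(mu_q, logvar_q, mu_p, logvar_p):
        # Per-dimension closed form:
        # 0.5 * (log(var_p / var_q) + (var_q + (mq - mp)^2) / var_p - 1)
        total += 0.5 * (lp - lq + (math.exp(lq) + (mq - mp) ** 2) / math.exp(lp) - 1.0)
    return total


def negative_elbo(recon_nll, mu_q, logvar_q, mu_p, logvar_p, kl_weight=1.0):
    """Generic conditional VAE loss (assumed form, not taken from the paper).

    recon_nll: reconstruction negative log-likelihood of the utterance,
               given the latent variable and the prompt.
    (mu_q, logvar_q): posterior over the latent, given utterance and prompt.
    (mu_p, logvar_p): prior over the latent, given the prompt only.
    """
    return recon_nll + kl_weight * kl_diag_gaussians(mu_q, logvar_q, mu_p, logvar_p)


if __name__ == "__main__":
    # Toy numbers for a 2-dimensional latent space.
    loss = negative_elbo(
        recon_nll=2.0,
        mu_q=[1.0, 0.0], logvar_q=[0.0, 0.0],
        mu_p=[0.0, 0.0], logvar_p=[0.0, 0.0],
    )
    print(loss)
```

In a setup like this, pseudo samples for an earlier task would be drawn by sampling a latent from the prompt-conditioned prior and decoding it, which is why the KL term pulls the posterior toward that prior during training.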
