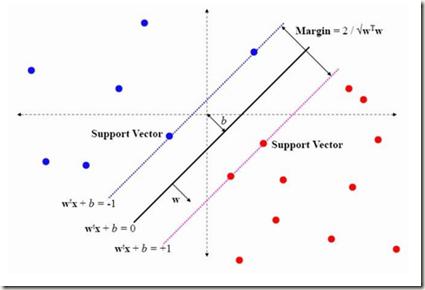

We compare classification and regression tasks in an overparameterized linear model with Gaussian features. On the one hand, we show that with sufficient overparameterization all training points are support vectors: solutions obtained by least-squares minimum-norm interpolation, typically used for regression, are identical to those produced by the hard-margin support vector machine (SVM) that minimizes the hinge loss, typically used for training classifiers. On the other hand, we show that there exist regimes where these interpolating solutions generalize well when evaluated by the 0-1 test loss function, but do not generalize if evaluated by the square loss function, i.e. they approach the null risk. Our results demonstrate the very different roles and properties of loss functions used at the training phase (optimization) and the testing phase (generalization).
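The support-vector claim can be checked numerically without an SVM solver. The sketch below is not the authors' code. It assumes isotropic Gaussian features, labels given by the sign of the first feature, and illustrative sizes `n = 20`, `d = 5000`; these choices, and the helper names `min_norm_interpolator` and `all_support_vectors`, are hypothetical. It uses the standard KKT argument: the minimum-norm interpolator is `w = X^T alpha` with `alpha = (X X^T)^{-1} y`, and it satisfies every margin constraint with equality. It therefore solves the hard-margin SVM problem whenever `y_i * alpha_i >= 0` for all `i`, and every training point is a support vector when the inequality is strict.

```python
import numpy as np


def min_norm_interpolator(X, y):
    """Return the least-squares minimum-norm interpolator and its dual coefficients.

    Solves (X X^T) alpha = y, then sets w = X^T alpha, so that X w = y
    whenever X X^T is invertible (the overparameterized case d > n).
    """
    alpha = np.linalg.solve(X @ X.T, y)
    return X.T @ alpha, alpha


def all_support_vectors(alpha, y):
    """Check whether every implied SVM multiplier y_i * alpha_i is strictly positive.

    If this holds, w = X^T alpha satisfies the hard-margin SVM KKT conditions
    with every margin constraint active.
    """
    return bool(np.all(y * alpha > 0))


# Assumed toy setup: isotropic Gaussian features with d much larger than n.
rng = np.random.default_rng(0)
n, d = 20, 5000
X = rng.standard_normal((n, d))
y = np.where(X[:, 0] >= 0, 1.0, -1.0)  # labels in {-1, +1}

w, alpha = min_norm_interpolator(X, y)
interpolates = bool(np.allclose(X @ w, y))
svm_equivalent = all_support_vectors(alpha, y)

print("interpolates training labels:", interpolates)
print("all training points are support vectors:", svm_equivalent)
```

Shrinking `d` toward `n` in this sketch makes some products `y_i * alpha_i` turn negative more often, at which point the two solutions no longer need to coincide.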
