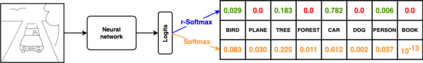

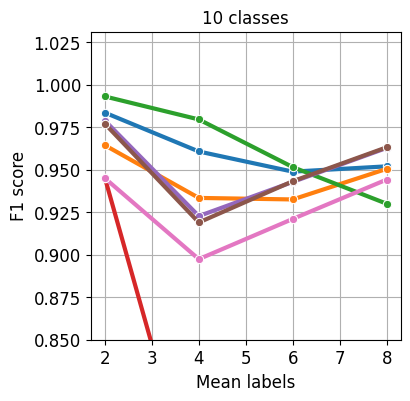

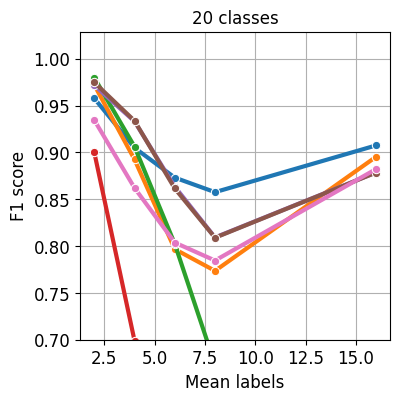

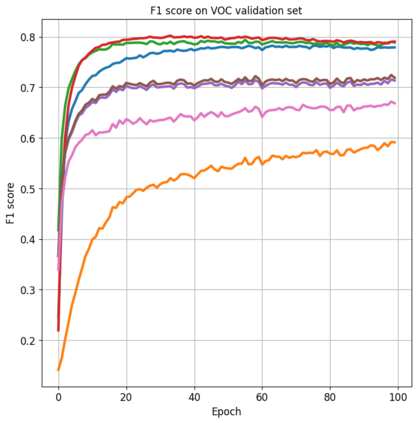

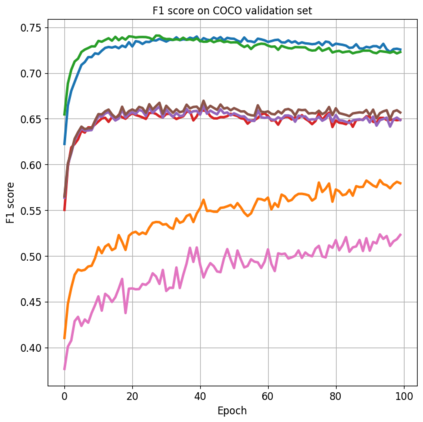

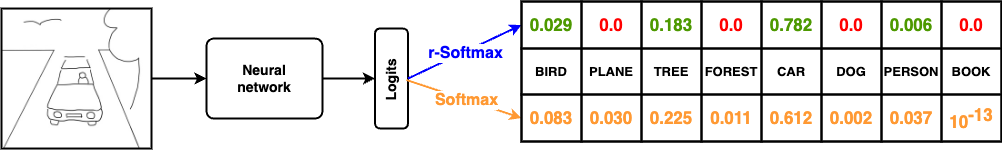

Artificial neural network models now achieve remarkable results in many disciplines. Functions that map the representation produced by a model to a probability distribution are an inseparable part of deep learning solutions. Although softmax is the commonly accepted probability mapping function in the machine learning community, it cannot return sparse outputs: it always assigns positive probability to every position. In this paper, we propose r-softmax, a modification of softmax that outputs a sparse probability distribution with a controllable sparsity rate. In contrast to existing sparse probability mapping functions, we provide an intuitive mechanism for controlling the output sparsity level. We show on several multi-label datasets that r-softmax outperforms other sparse alternatives to softmax and is highly competitive with the original softmax. We also apply r-softmax to the self-attention module of a pre-trained transformer language model and demonstrate that it improves performance when fine-tuning the model on different natural language processing tasks.
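To make the contrast between dense and sparse outputs concrete, the following minimal sketch compares plain softmax with a simple rate-controlled sparse variant. The abstract does not state the definition of r-softmax, so `sparse_softmax_by_rate` is a hypothetical stand-in, not the proposed function: it zeroes the fraction `r` of smallest logits and renormalizes softmax over the rest. The function names and the example logits are assumptions made for illustration only.

```python
import math


def softmax(x):
    """Standard softmax; every output is strictly positive."""
    m = max(x)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in x]
    total = sum(exps)
    return [e / total for e in exps]


def sparse_softmax_by_rate(x, r):
    """Hypothetical illustration (NOT the paper's r-softmax definition).

    Zeroes the floor(r * n) smallest logits and renormalizes softmax
    over the remaining positions, so r acts as a sparsity rate.
    """
    n = len(x)
    k = min(int(r * n), n - 1)  # always keep at least one position
    order = sorted(range(n), key=lambda i: x[i])
    dropped = set(order[:k])  # indices of the k smallest logits
    m = max(x)
    exps = [0.0 if i in dropped else math.exp(x[i] - m) for i in range(n)]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1, -1.0]
dense = softmax(logits)
sparse = sparse_softmax_by_rate(logits, 0.5)

print(dense)   # all four entries are positive
print(sparse)  # the two smallest logits receive exactly zero probability
```

With `r = 0.5` and four logits, half of the positions receive exactly zero probability while the output still sums to one. Softmax alone cannot do this for any finite input.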
