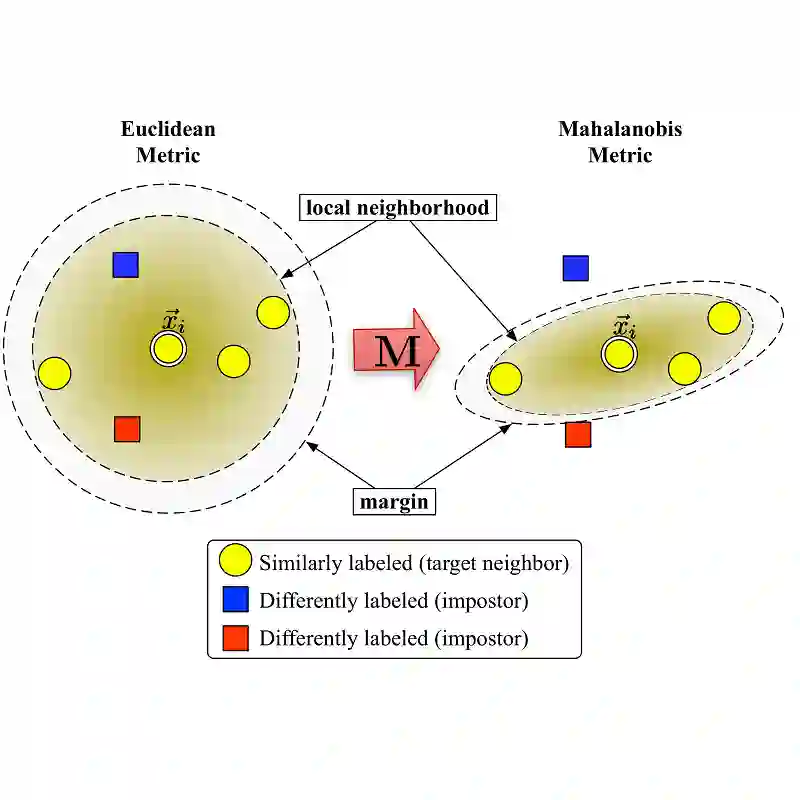

Multi-modal deep metric learning is crucial for capturing diverse representations in tasks such as face verification, fine-grained object recognition, and product search. Traditional approaches to metric learning, whether distance-based or margin-based, primarily emphasize class separation and often overlook the intra-class distribution that is essential for multi-modal feature learning. We propose a novel loss function, Density-Aware Adaptive Margin Loss (DAAL), which preserves the density distribution of embeddings while encouraging the formation of adaptive sub-clusters within each class. By employing an adaptive line strategy, DAAL not only enhances intra-class variance but also ensures robust inter-class separation, facilitating effective multi-modal representation. Comprehensive experiments on benchmark fine-grained datasets demonstrate the superior performance of DAAL, underscoring its potential for advancing retrieval applications and multi-modal deep metric learning.
