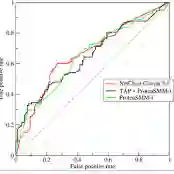

A deepfake is a manipulated video produced with a generative deep learning technique, such as a Generative Adversarial Network (GAN) or an autoencoder, that anyone can use. As deepfake videos have become more common, researchers have actively built both deepfake datasets and convolutional neural network (CNN) classifiers that distinguish fake videos from real ones. However, previous CNN-based studies suffer not only from overfitting but also from frequently misjudging fake videos as real ones (false negatives). In this paper, we propose a Vision Transformer model with a distillation methodology for detecting fake videos. We design the model so that CNN features and patch-based positional embeddings learn to interact across all positions, which helps locate the artifact region and addresses the false negative problem. Through comparative analysis on the Deepfake Detection Challenge (DFDC) dataset, we verify that the proposed scheme, which combines CNN features with patch embeddings as input, outperforms the state of the art. Without any ensemble technique, our model obtains an AUC of 0.978 and an F1 score of 91.9, while the previous SOTA model yields an AUC of 0.972 and an F1 score of 90.6 under the same conditions.
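To make the reported metrics concrete, the following is a minimal sketch of how AUC and F1 can be computed for a binary fake/real classifier, and how false negatives (fake videos judged as real) lower recall and therefore F1. The function names `f1_score` and `roc_auc`, the 0.5 decision threshold, and the toy labels and scores are illustrative assumptions; they are not taken from the paper or from the DFDC data.

```python
def f1_score(y_true, y_pred):
    """F1 for the positive class (1 = fake, 0 = real)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)  # false negatives (fake judged real) reduce recall
    return 2 * precision * recall / (precision + recall)


def roc_auc(y_true, scores):
    """AUC as the probability that a random fake sample scores higher
    than a random real sample (ties count as one half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("need at least one sample of each class")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data, made up for illustration only (not results from the paper).
labels = [1, 1, 1, 1, 0, 0, 0, 0]                   # 1 = fake, 0 = real
scores = [0.9, 0.8, 0.4, 0.3, 0.7, 0.2, 0.1, 0.05]  # predicted P(fake)
preds = [1 if s >= 0.5 else 0 for s in scores]      # assumed 0.5 threshold

print(f"F1  = {f1_score(labels, preds):.3f}")
print(f"AUC = {roc_auc(labels, scores):.3f}")
```

In this toy example two of the four fake samples fall below the threshold, so recall drops to 0.5 even though the ranking-based AUC stays fairly high. This is the kind of gap between AUC and F1 that a false-negative problem produces.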
