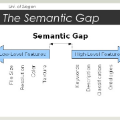

Text-guided 3D shape generation remains challenging due to the absence of large paired text-shape datasets, the substantial semantic gap between the two modalities, and the structural complexity of 3D shapes. This paper presents a new framework for the task, Image as Stepping Stone (ISS), which introduces 2D images as a stepping stone to connect the two modalities and to eliminate the need for paired text-shape data. Our key contribution is a two-stage feature-space-alignment approach that maps CLIP features to shapes by harnessing a pre-trained single-view reconstruction (SVR) model with multi-view supervision: we first map the CLIP image feature to the detail-rich shape space of the SVR model, then map the CLIP text feature to the shape space and optimize the mapping by encouraging CLIP consistency between the input text and the rendered images. Further, we formulate a text-guided shape stylization module to dress up the output shapes with novel textures. Going beyond existing works on 3D shape generation from text, our approach is general: it creates shapes in a broad range of categories without requiring paired text-shape data. Experimental results show that our approach outperforms state-of-the-art methods and our baselines in terms of fidelity and consistency with text. In addition, our approach can stylize the generated shapes with both realistic and fantasy structures and textures.
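
The two-stage alignment described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: every name below (`fit_stage1`, `finetune_stage2`, `R`, and so on) is hypothetical, the mapper is assumed to be a single linear matrix, and the SVR decoder, differentiable renderer, and CLIP image encoder are all replaced by one fixed random linear map `R`. The sketch only shows the shape of the idea: stage 1 fits a mapper from image features to shape features, and stage 2 tunes that mapper so the "rendered" feature agrees with the text feature.

```python
import numpy as np

def unit(x):
    """L2-normalize along the last axis."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def fit_stage1(img_feats, shape_feats):
    """Stage 1 (toy): least-squares mapper M with img_feats @ M ~ shape_feats."""
    M, *_ = np.linalg.lstsq(img_feats, shape_feats, rcond=None)
    return M

def clip_loss(text_feat, M, R):
    """1 - cosine similarity between the text feature and the 'rendered' feature.
    R is an assumed stand-in for decoder + renderer + CLIP image encoder."""
    z = text_feat @ M @ R
    return 1.0 - float(unit(z) @ unit(text_feat))

def clip_loss_grad(text_feat, M, R):
    """Analytic gradient of clip_loss with respect to M."""
    z = text_feat @ M @ R
    t_hat, z_norm = unit(text_feat), np.linalg.norm(z)
    cos = float(unit(z) @ t_hat)
    dcos_dz = t_hat / z_norm - cos * z / z_norm**2
    return -np.outer(text_feat, R @ dcos_dz)

def finetune_stage2(text_feat, M, R, steps=50, lr=1.0):
    """Stage 2 (toy): gradient descent on the consistency loss.
    A step is accepted only if it lowers the loss (simple backtracking)."""
    loss = clip_loss(text_feat, M, R)
    for _ in range(steps):
        grad = clip_loss_grad(text_feat, M, R)
        step = lr
        while step > 1e-8:
            cand = M - step * grad
            cand_loss = clip_loss(text_feat, cand, R)
            if cand_loss < loss:
                M, loss = cand, cand_loss
                break
            step *= 0.5
    return M, loss

def run_toy_example(seed=0):
    """Synthetic data only; dimensions are arbitrary illustrative choices."""
    rng = np.random.default_rng(seed)
    d_clip, d_shape, n = 8, 4, 50
    M_true = rng.normal(size=(d_clip, d_shape))
    img_feats = rng.normal(size=(n, d_clip))      # stand-in CLIP image features
    shape_feats = img_feats @ M_true              # stand-in SVR shape features
    M = fit_stage1(img_feats, shape_feats)
    R = rng.normal(size=(d_shape, d_clip))        # stand-in render-and-encode map
    text_feat = rng.normal(size=d_clip)           # stand-in CLIP text feature
    before = clip_loss(text_feat, M, R)
    _, after = finetune_stage2(text_feat, M, R)
    return float(np.abs(M - M_true).max()), before, after
```

Calling `run_toy_example()` returns the stage-1 fitting error and the consistency loss before and after stage 2. In a real system the mapper would likely be a learned network and the loss would be computed on CLIP features of images rendered from several views; the linear maps here are assumptions made so the sketch stays self-contained.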
