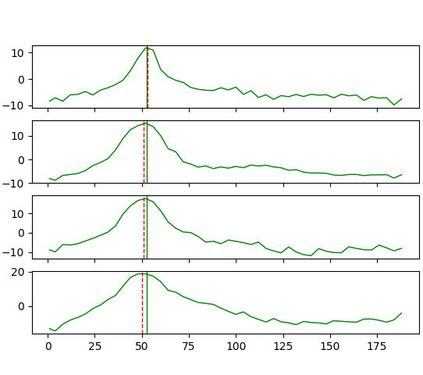

Volumetric deep learning approach towards stereo matching aggregates a cost volume computed from input left and right images using 3D convolutions. Recent works showed that utilization of extracted image features and a spatially varying cost volume aggregation complements 3D convolutions. However, existing methods with spatially varying operations are complex, cost considerable computation time, and cause memory consumption to increase. In this work, we construct Guided Cost volume Excitation (GCE) and show that simple channel excitation of cost volume guided by image can improve performance considerably. Moreover, we propose a novel method of using top-k selection prior to soft-argmin disparity regression for computing the final disparity estimate. Combining our novel contributions, we present an end-to-end network that we call Correlate-and-Excite (CoEx). Extensive experiments of our model on the SceneFlow, KITTI 2012, and KITTI 2015 datasets demonstrate the effectiveness and efficiency of our model and show that our model outperforms other speed-based algorithms while also being competitive to other state-of-the-art algorithms. Codes will be made available at https://github.com/antabangun/coex.

翻译:近期的著作表明,利用提取的图像特征和空间上差异的成本总量组合补充了3D演化。然而,空间上不同操作的现有方法十分复杂,计算时间相当长,并导致内存消耗增加。在这项工作中,我们构建了“引导成本量批量”(GCE),并显示,在图像指导下对成本量的简单通道推算可以大大改进性能。此外,我们提议了一种新颖的方法,在计算最后差异估计值时,在软态差差差之前使用最高至最高选择法来计算最后差异值。我们结合了我们的新贡献,我们提出了一个端到端的网络,我们称之为“Correlate-Excite”(CoExEx)。我们在SceneFlow、KITTI 2012和KITTI 2015 数据集上对模型进行的广泛实验,展示了我们模型的效能和效率,并显示我们的模型比其他速度算法要好得多。代码将在https://gith/antasco/antatacom上提供。