Neural Algorithmic Reasoning


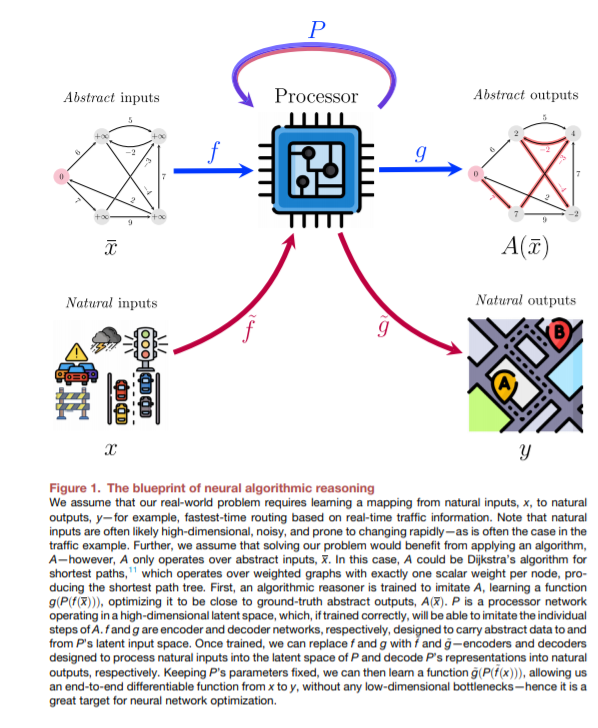

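Algorithms have been fundamental to recent global technological advances. In particular, they have been the cornerstone that allows technical advances in one field to be applied rapidly in another. We argue that algorithms possess qualities fundamentally different from those of deep learning methods. This strongly suggests that, if deep learning methods were better able to mimic algorithms, the kind of generalization seen with algorithms would become possible with deep learning, something far out of reach of current machine learning methods. Furthermore, by representing elements in a continuous space of learned algorithms, neural networks can adapt known algorithms more closely to real-world problems, potentially finding solutions that are more efficient and pragmatic than those proposed by human computer scientists.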


Related content

arXiv

September 15, 2021