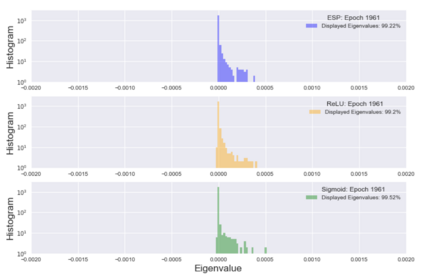

We present a Statistical Mechanics (SM) model of deep neural networks, connecting the energy-based and the feedforward network (FFN) approaches. We infer that FFNs can be understood as performing three basic steps: encoding, representation validation and propagation. From the mean-field solution of the model, we obtain a set of natural activations -- such as Sigmoid, $\tanh$ and ReLU -- together with the state-of-the-art Swish; the latter represents the expected information propagating through the network and tends to ReLU in the limit of zero noise. We study the spectrum of the Hessian on an associated classification task, showing that Swish allows for more consistent performance over a wider range of network architectures.
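
The zero-noise limit can be checked numerically with a minimal sketch. It assumes the commonly used parametrisation of Swish, $x\,\sigma(\beta x)$, and treats $\beta$ as an inverse noise level; neither the formula nor that identification is spelled out in the abstract, and the helper names `swish`, `relu` and `max_gap` are purely illustrative.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def swish(x, beta=1.0):
    # Assumed form: x * sigmoid(beta * x); beta plays the role of an
    # inverse noise level (an assumption, not taken from the abstract).
    return x * sigmoid(beta * x)


def relu(x):
    return max(0.0, x)


def max_gap(beta, xs):
    # Largest absolute difference between Swish and ReLU on the grid xs.
    return max(abs(swish(x, beta) - relu(x)) for x in xs)


# Grid on [-5, 5] with step 0.01.
xs = [i / 100.0 for i in range(-500, 501)]
betas = (1.0, 10.0, 100.0)
gaps = [max_gap(b, xs) for b in betas]

for b, g in zip(betas, gaps):
    print(f"beta = {b:6.1f}   max |swish - relu| = {g:.5f}")
```

Under these assumptions the gap shrinks roughly like $1/\beta$, so the two activations coincide as the noise goes to zero, while at finite $\beta$ Swish stays smooth around the origin.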
