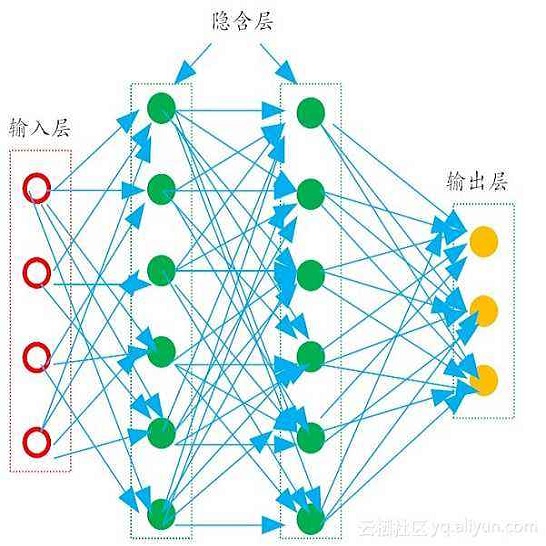

We present a joint camera and radar approach that enables autonomous vehicles to understand and react to human gestures in everyday traffic. First, we process the radar data with a PointNet followed by a spatio-temporal multilayer perceptron (stMLP). Independently, we extract the human body pose from the camera frame and process it with a separate stMLP network. We propose a fusion neural network that combines both modalities and includes an auxiliary loss for each modality. In experiments on a dataset we collected, we show the advantages of using both modalities for gesture recognition. Motivated by adverse weather conditions, we also demonstrate promising performance when one of the sensors fails.
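The pipeline above can be sketched in plain Python to make the data flow concrete. This is not the authors' implementation. It is a minimal, untrained sketch under stated assumptions: radar frames are variable-size point clouds with 4 features per point, the pose is a flat vector of 6 keypoint coordinates per frame, and layers have no biases. The internals of the stMLP (mix features within each frame, then mix each channel across time) are an assumed, Mixer-style reading. The auxiliary weight `aux_weight=0.5` and the single-sensor fallback in `predict` are also hypothetical. All helper names (`encode_radar`, `total_loss`, `init_params`, ...) are illustrative.

```python
import math
import random
from typing import Dict, List, Optional

Vector = List[float]
Matrix = List[List[float]]  # shape: (outputs, inputs)
Params = Dict[str, Matrix]


def rand_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def linear(x: Vector, w: Matrix, relu: bool = False) -> Vector:
    """Bias-free dense layer, optionally followed by ReLU."""
    out = [sum(wi * xi for wi, xi in zip(row, x)) for row in w]
    return [max(0.0, v) for v in out] if relu else out


def pointnet_encode(points: List[Vector], w: Matrix) -> Vector:
    """PointNet-style encoder: the same layer is applied to every radar point,
    then a channel-wise max-pool makes the result independent of point order."""
    per_point = [linear(p, w, relu=True) for p in points]
    return [max(channel) for channel in zip(*per_point)]


def st_mlp(frames: List[Vector], w_feat: Matrix, w_time: Matrix) -> Vector:
    """Assumed stMLP: mix features within each frame, then mix each feature
    channel across the time axis, and flatten to one embedding."""
    mixed = [linear(f, w_feat, relu=True) for f in frames]  # (T, D)
    channels = [list(c) for c in zip(*mixed)]  # (D, T)
    return [v for c in channels for v in linear(c, w_time, relu=True)]


def encode_radar(radar_frames: List[List[Vector]], p: Params) -> Vector:
    per_frame = [pointnet_encode(cloud, p["pointnet"]) for cloud in radar_frames]
    return st_mlp(per_frame, p["radar_feat"], p["radar_time"])


def encode_pose(pose_frames: List[Vector], p: Params) -> Vector:
    return st_mlp(pose_frames, p["pose_feat"], p["pose_time"])


def cross_entropy(logits: Vector, label: int) -> float:
    """Numerically stable softmax cross-entropy."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[label]


def total_loss(radar_frames, pose_frames, label: int, p: Params,
               aux_weight: float = 0.5) -> float:
    """Fused-head loss plus weighted auxiliary losses, one per modality."""
    z_radar = encode_radar(radar_frames, p)
    z_pose = encode_pose(pose_frames, p)
    main = cross_entropy(linear(z_radar + z_pose, p["fusion_head"]), label)
    aux = (cross_entropy(linear(z_radar, p["radar_head"]), label)
           + cross_entropy(linear(z_pose, p["pose_head"]), label))
    return main + aux_weight * aux


def argmax(v: Vector) -> int:
    return max(range(len(v)), key=v.__getitem__)


def predict(radar_frames: Optional[list], pose_frames: Optional[list],
            p: Params) -> int:
    """If one sensor is missing (None), fall back to the other branch's
    auxiliary head (a hypothetical strategy, not taken from the source)."""
    if radar_frames is None:
        return argmax(linear(encode_pose(pose_frames, p), p["pose_head"]))
    if pose_frames is None:
        return argmax(linear(encode_radar(radar_frames, p), p["radar_head"]))
    z = encode_radar(radar_frames, p) + encode_pose(pose_frames, p)
    return argmax(linear(z, p["fusion_head"]))


def init_params(rng: random.Random, n_classes: int = 5, t: int = 4,
                point_dim: int = 4, pose_dim: int = 6, d: int = 8,
                t_out: int = 2) -> Params:
    emb = d * t_out  # length of each modality embedding
    return {
        "pointnet": rand_matrix(d, point_dim, rng),
        "radar_feat": rand_matrix(d, d, rng),
        "radar_time": rand_matrix(t_out, t, rng),
        "pose_feat": rand_matrix(d, pose_dim, rng),
        "pose_time": rand_matrix(t_out, t, rng),
        "fusion_head": rand_matrix(n_classes, 2 * emb, rng),
        "radar_head": rand_matrix(n_classes, emb, rng),
        "pose_head": rand_matrix(n_classes, emb, rng),
    }
```

With `params = init_params(random.Random(0))`, a radar input is a list of 4 frames, each a list of any number of 4-feature points. A pose input is a list of 4 vectors of length 6. Calling `predict(radar, None, params)` then simulates a failed camera. The auxiliary losses train each branch to classify on its own. This is one plausible reason such a model can degrade gracefully when one sensor drops out.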

翻译:我们提出了一个联合相机和雷达方法,使自主飞行器能够理解和应对日常交通中的人类手势; 最初,我们用一个PointNet处理雷达数据,然后用一个时空多层光谱(stMLP)处理雷达数据; 独立地说,人体表面是从照相机架上提取的,然后用一个单独的 stMLP 网络处理; 我们为两种模式提议一个聚合神经网络,包括每种模式的辅助损失; 在用一个收集的数据集进行实验时,我们用两种方式展示了手势识别的优势; 受恶劣天气条件的驱使,我们还在其中一种传感器缺乏功能时展示出有希望的性能。