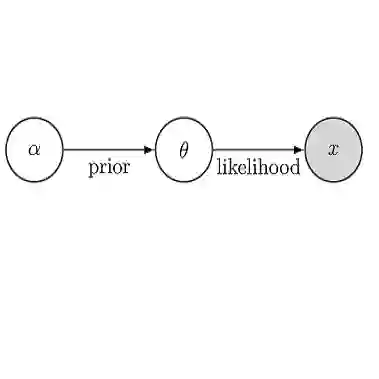

For stochastic models with intractable likelihood functions, approximate Bayesian computation offers a way of approximating the true posterior by repeatedly comparing observations with simulated model outputs in terms of a small set of summary statistics. These statistics need to retain the information that is relevant for constraining the parameters while discarding the noise. They can thus be seen as the analogue of thermodynamic state variables for general stochastic models. For many scientific applications, we need strictly more summary statistics than model parameters to reach a satisfactory approximation of the posterior. Therefore, we propose to use the inner (bottleneck) dimension of deep-neural-network-based autoencoders as summary statistics. To create an incentive for the encoder to encode all the parameter-related information but not the noise, we give the decoder access to explicit or implicit information on the noise that was used to generate the training data. We validate the approach empirically on two types of stochastic models.
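
To make the comparison step concrete, the following is a minimal sketch of plain rejection-based approximate Bayesian computation. It is not the method proposed here: the toy Gaussian model, the uniform prior, the tolerance `epsilon`, and the hand-picked `summary` function are all assumptions chosen for illustration. In the proposed approach, the output of a trained encoder would take the place of `summary`.

```python
import numpy as np

def simulate(theta, rng, n=50):
    # Toy stochastic model (assumed for illustration): n draws from N(theta, 1).
    return rng.normal(loc=theta, scale=1.0, size=n)

def summary(x):
    # Hand-picked summary statistics: sample mean and standard deviation.
    # A learned encoder would replace this function.
    return np.array([x.mean(), x.std()])

def abc_rejection(observed, n_draws, epsilon, rng):
    # Keep prior draws whose simulated summaries lie within epsilon
    # (Euclidean distance) of the observed summaries.
    s_obs = summary(observed)
    accepted = []
    for _ in range(n_draws):
        theta = rng.uniform(-5.0, 5.0)  # assumed uniform prior on theta
        s_sim = summary(simulate(theta, rng, n=observed.size))
        if np.linalg.norm(s_sim - s_obs) < epsilon:
            accepted.append(theta)
    return np.array(accepted)

rng = np.random.default_rng(0)
observed = simulate(2.0, rng)  # synthetic "observations" with theta = 2
posterior_samples = abc_rejection(observed, n_draws=20000, epsilon=0.2, rng=rng)
print(len(posterior_samples), posterior_samples.mean())
```

The accepted values of `theta` form a sample from the approximate posterior. Shrinking `epsilon` tightens the approximation at the cost of a lower acceptance rate, which is one reason a small but informative set of summary statistics matters.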
