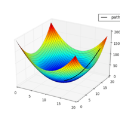

Neural networks are usually trained with variants of gradient-descent-based optimization algorithms, such as stochastic gradient descent (SGD) or the Adam optimizer. Recent theoretical work shows that the critical points (points where the gradient of the loss is zero) of two-layer ReLU networks with the square loss are not all local minima. Nevertheless, in this work we explore an algorithm for training two-layer neural networks with ReLU-like activations and the square loss that alternately finds the critical points of the loss function analytically for one layer while keeping the other layer and the neuron activation pattern fixed. Experiments indicate that this simple algorithm can find deeper optima than SGD or the Adam optimizer, obtaining significantly smaller training loss values on four of the five real datasets evaluated. Moreover, the method is faster than the gradient descent methods and has virtually no tuning parameters.
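To make the alternating idea concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes a plain ReLU, no bias terms, a scalar output, and ordinary least squares as the analytic solve; the function names (`relu_pattern`, `hidden_design`, `solve_output_layer`, `solve_hidden_layer`, `alternating_fit`) are hypothetical. The key observation is that once the activation pattern `D` is frozen, the prediction is linear in either layer's weights, so each layer's critical point can be obtained from a linear least-squares problem.

```python
import numpy as np

def relu_pattern(X, W):
    """Boolean mask D[i, j]: is hidden unit j active on sample i?"""
    return (X @ W.T) > 0

def loss(X, y, W, v):
    """Mean square loss of the network x -> v . relu(W x) (no biases, by assumption)."""
    return 0.5 * np.mean((np.maximum(X @ W.T, 0) @ v - y) ** 2)

def solve_output_layer(X, y, W, D):
    """With W and the pattern D fixed, the prediction H @ v is linear in v."""
    H = D * (X @ W.T)
    v, *_ = np.linalg.lstsq(H, y, rcond=None)
    return v

def hidden_design(X, v, D):
    """Design matrix Phi with Phi[i, j*d:(j+1)*d] = v[j] * D[i, j] * X[i]."""
    n, d = X.shape
    h = v.shape[0]
    return ((D * v)[:, :, None] * X[:, None, :]).reshape(n, h * d)

def solve_hidden_layer(X, y, v, D):
    """With v and D fixed, the prediction Phi @ vec(W) is linear in W."""
    Phi = hidden_design(X, v, D)
    w, *_ = np.linalg.lstsq(Phi, y, rcond=None)
    return w.reshape(v.shape[0], X.shape[1])

def alternating_fit(X, y, hidden=8, iters=20, seed=0):
    """Alternate the two analytic solves, refreshing the pattern each round."""
    rng = np.random.default_rng(seed)
    W = rng.normal(size=(hidden, X.shape[1]))
    history = []
    for _ in range(iters):
        D = relu_pattern(X, W)              # freeze the activation pattern
        v = solve_output_layer(X, y, W, D)  # analytic solve for the output layer
        W = solve_hidden_layer(X, y, v, D)  # analytic solve for the hidden layer
        history.append(loss(X, y, W, v))    # true loss, with the pattern re-evaluated
    return W, v, history
```

Calling `alternating_fit(X, y)` on a feature matrix `X` of shape `(n, d)` and a target vector `y` of shape `(n,)` returns the two weight arrays and a per-iteration loss history. One caveat of this sketch: the hidden-layer solve can change which units are active, so the true loss recorded in `history` is not guaranteed to decrease at every step.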
