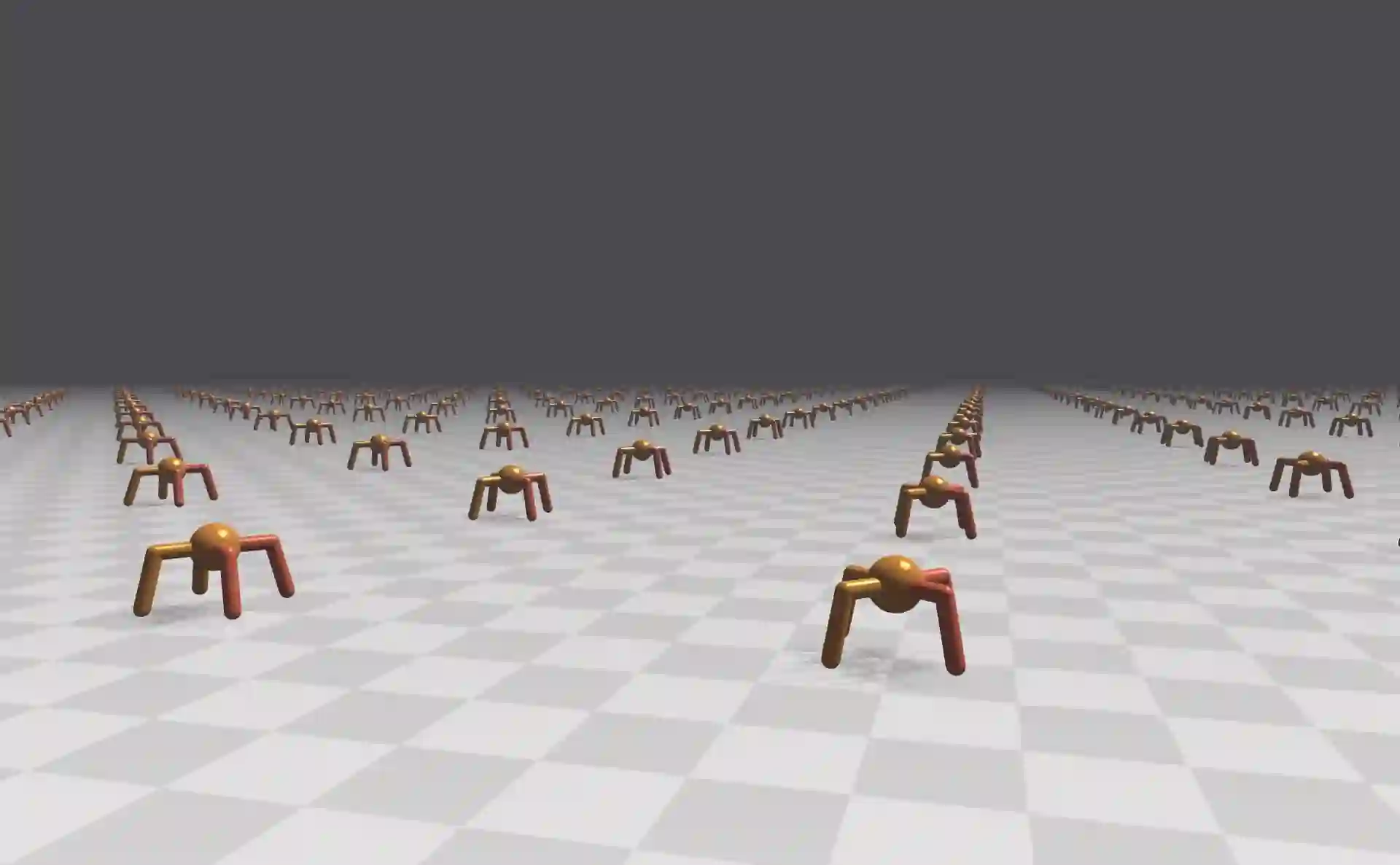

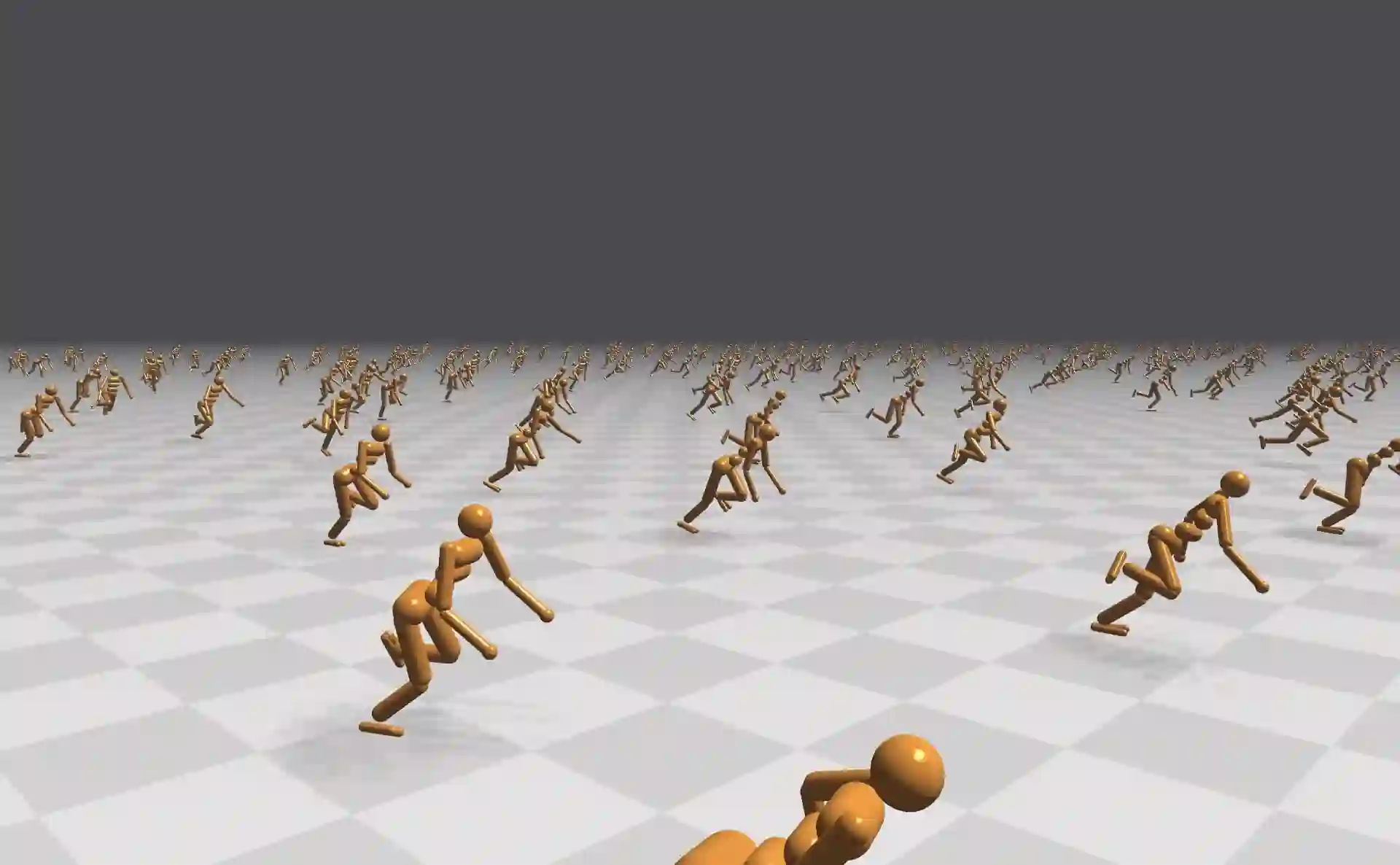

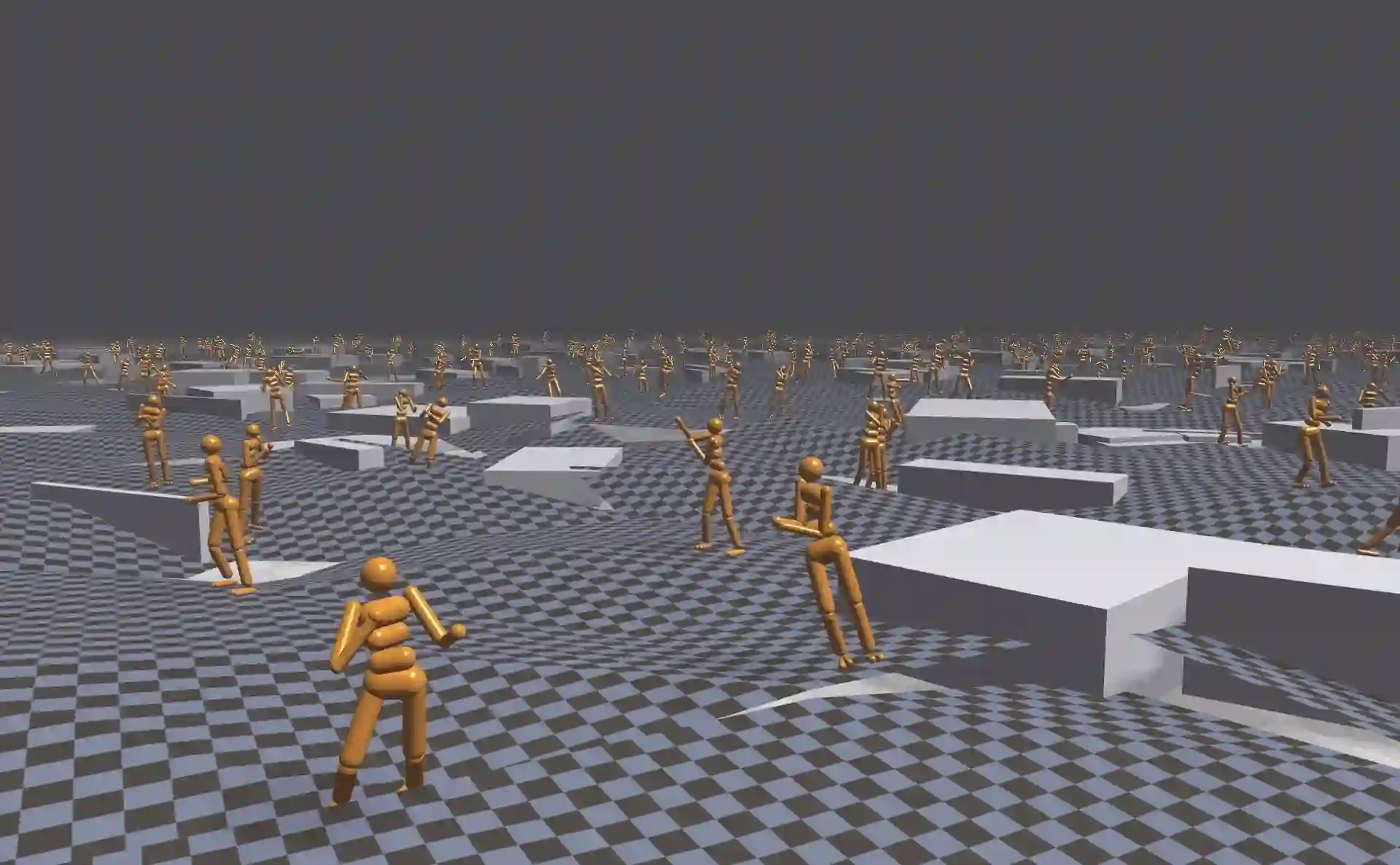

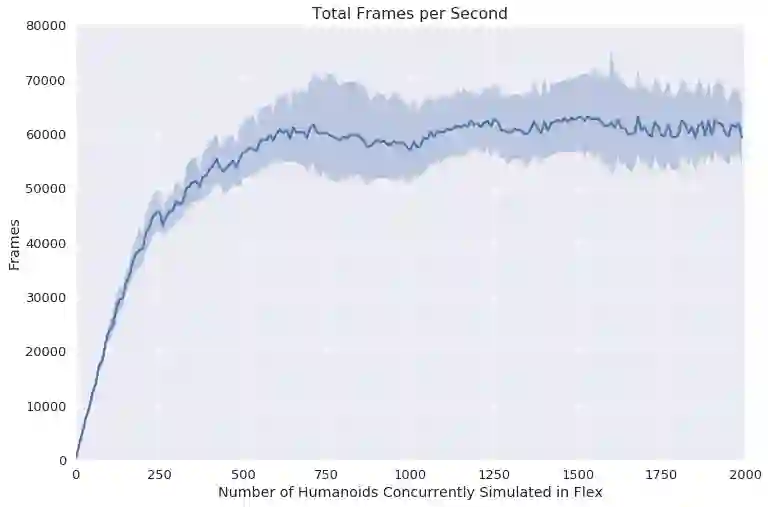

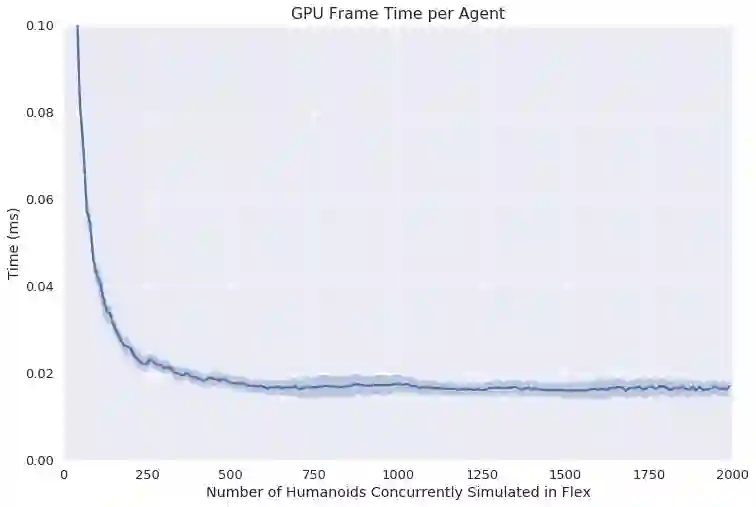

Most Deep Reinforcement Learning (Deep RL) algorithms require a prohibitively large number of training samples to learn complex tasks. Many recent works on speeding up Deep RL have focused on distributed training and simulation. While distributed training is often done on the GPU, simulation is not. In this work, we propose using GPU-accelerated RL simulation as an alternative to CPU-based simulation. Using NVIDIA Flex, a GPU-based physics engine, we show promising speed-ups in learning various continuous-control locomotion tasks. With a single GPU and a single CPU core, we are able to train the Humanoid running task in less than 20 minutes, using 10 to 1000 times fewer CPU cores than previous works. We also demonstrate that our simulator scales to multi-GPU settings, which lets us train more challenging locomotion tasks.

翻译:大部分深强化学习(Deep RL)算法要求大量培训样本,用于学习复杂任务。许多关于加快深RL的近期工作侧重于分布式培训和模拟。虽然分布式培训通常是在GPU上完成的,但模拟不是。在这项工作中,我们提议使用GPU加速的RL模拟作为CPU的替代。我们用基于GPU的物理引擎NVIDIA Flex,展示了学习各种连续控制、移动任务的有希望的加速。用一个 GPU 和 CPU 核心,我们可以在20分钟以内用比以前工作少10-1000x CPU核心来训练人形运行任务。我们还展示了我们的模拟器在多GPU环境中的可扩展性,以训练更具挑战性的移动任务。