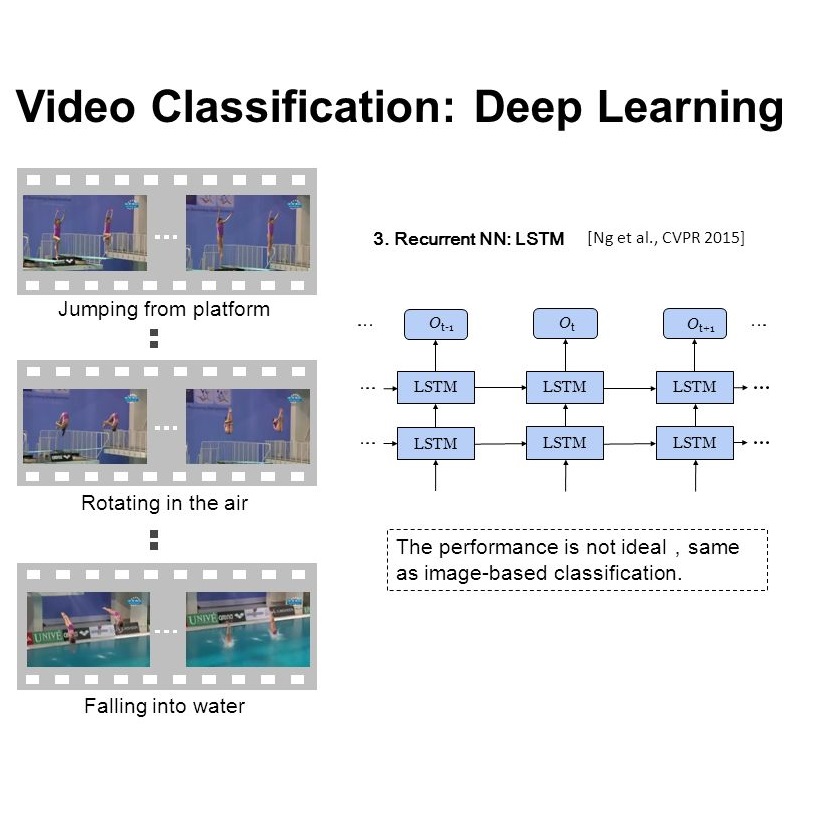

Videos are multimodal in nature. Conventional video recognition pipelines typically fuse multimodal features to improve performance. However, this is not only computationally expensive but also ignores the fact that different videos rely on different modalities for their predictions. This paper introduces Hierarchical and Conditional Modality Selection (HCMS), a simple yet efficient multimodal learning framework for video recognition. By default, HCMS operates on a low-cost modality, namely audio cues, and decides on the fly, for each input, whether to also use the computationally expensive modalities: appearance and motion cues. This is achieved through the collaboration of three LSTMs organized hierarchically. In particular, each LSTM that operates on a high-cost modality contains a gating module that takes lower-level features and historical information as inputs and adaptively determines whether to activate its corresponding modality; if the modality is not activated, the LSTM simply reuses its historical information. We conduct extensive experiments on two large-scale video benchmarks, FCVID and ActivityNet, and the results demonstrate that the proposed approach effectively exploits multimodal information for improved classification performance while requiring much less computation.
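The hierarchical gating described above can be sketched as follows. This is a minimal, hypothetical illustration, not the authors' implementation: the abstract does not specify the gate architecture, the order of the two high-cost levels, or how the discrete decisions are trained. We assume that appearance sits above audio and motion above appearance, that each gate is a single linear layer plus a sigmoid thresholded at 0.5 over the concatenation of the lower level's hidden state and the level's own previous hidden state, and that all weights and features are random placeholders. The names `LSTMCell`, `GatedLevel`, and `run` are illustrative, and the classification head is omitted.

```python
import math
import random
from typing import Callable, List, Tuple

Vec = List[float]


def sigmoid(x: float) -> float:
    # Numerically stable logistic function (avoids overflow for large |x|).
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def matvec(W: List[Vec], x: Vec) -> Vec:
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


class LSTMCell:
    """Minimal LSTM cell with small random weights acting on [x; h]."""

    def __init__(self, in_dim: int, hid: int, rng: random.Random):
        # Four weight matrices: input, forget, output gates and candidate.
        self.W = [[[rng.uniform(-0.1, 0.1) for _ in range(in_dim + hid)]
                   for _ in range(hid)] for _ in range(4)]

    def step(self, x: Vec, h: Vec, c: Vec) -> Tuple[Vec, Vec]:
        z = x + h  # list concatenation, i.e. [x; h]
        i, f, o, g = (matvec(W, z) for W in self.W)
        c_new = [sigmoid(fk) * ck + sigmoid(ik) * math.tanh(gk)
                 for ik, fk, gk, ck in zip(i, f, g, c)]
        h_new = [sigmoid(ok) * math.tanh(ck) for ok, ck in zip(o, c_new)]
        return h_new, c_new


class GatedLevel:
    """Hypothetical high-cost level: an LSTM preceded by a binary gate."""

    def __init__(self, feat_dim: int, hid: int, rng: random.Random, bias: float = 0.0):
        self.lstm = LSTMCell(feat_dim + hid, hid, rng)  # input: [feature; lower h]
        self.gate_w = [rng.uniform(-1, 1) for _ in range(2 * hid)]
        self.gate_b = bias
        self.h, self.c = [0.0] * hid, [0.0] * hid
        self.calls = 0  # how often the expensive feature was actually computed

    def step(self, lower_h: Vec, extract: Callable[[], Vec]) -> Vec:
        # The gate sees lower-level features and this level's own history.
        score = sum(w * v for w, v in zip(self.gate_w, lower_h + self.h)) + self.gate_b
        if sigmoid(score) > 0.5:
            x = extract()  # the expensive extractor runs only when the gate fires
            self.h, self.c = self.lstm.step(x + lower_h, self.h, self.c)
            self.calls += 1
        # Otherwise the previous (h, c) are reused unchanged.
        return self.h


def run(T: int = 10, audio_dim: int = 4, app_dim: int = 6, mot_dim: int = 6,
        hid: int = 8, app_bias: float = 0.0, mot_bias: float = 0.0,
        seed: int = 0) -> Tuple[int, int, Vec]:
    rng = random.Random(seed)

    def feat(d: int) -> Vec:
        # Random placeholder for a per-step feature vector.
        return [rng.uniform(-1, 1) for _ in range(d)]

    audio = LSTMCell(audio_dim, hid, rng)  # low-cost level: always active
    appearance = GatedLevel(app_dim, hid, rng, app_bias)
    motion = GatedLevel(mot_dim, hid, rng, mot_bias)
    h_a, c_a = [0.0] * hid, [0.0] * hid
    for _ in range(T):
        h_a, c_a = audio.step(feat(audio_dim), h_a, c_a)
        h_app = appearance.step(h_a, lambda: feat(app_dim))
        motion.step(h_app, lambda: feat(mot_dim))
    return appearance.calls, motion.calls, motion.h
```

Because each expensive feature comes from a callable that is invoked only when its gate fires, the returned call counts show how often the costly extractors actually ran. For example, `run(T=5, app_bias=-100.0, mot_bias=-100.0)` never fires either gate, so both counts are 0 and the model reduces to audio only, while large positive biases make every step use all three modalities. Training hard gates like these end to end needs a differentiable surrogate (for instance a straight-through or Gumbel-Softmax estimator); this sketch covers inference only.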
