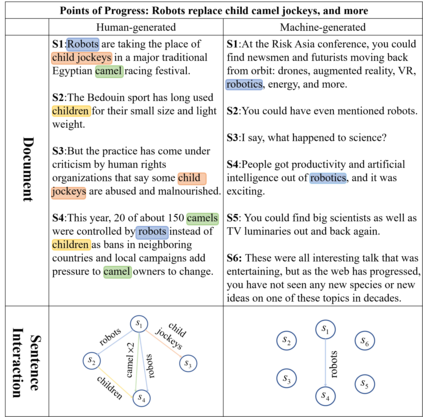

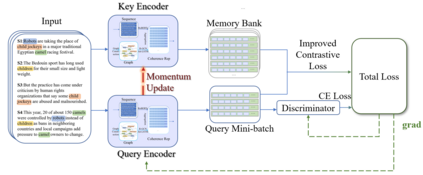

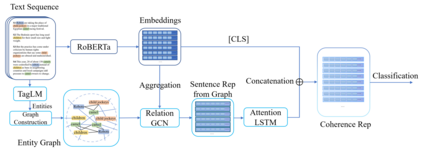

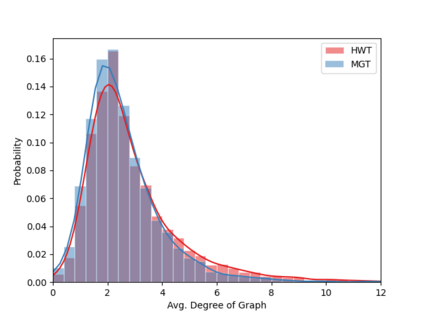

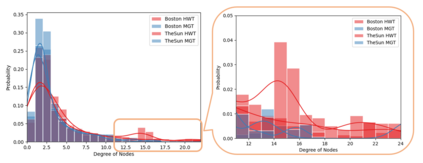

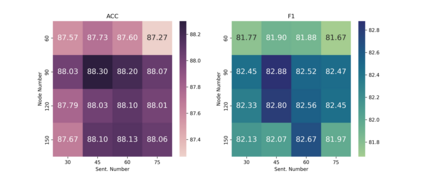

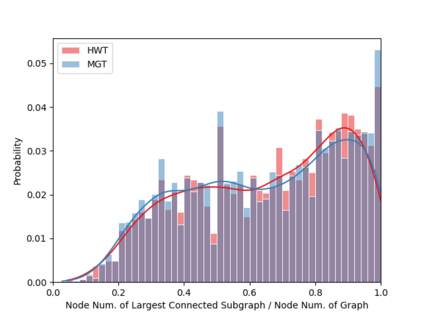

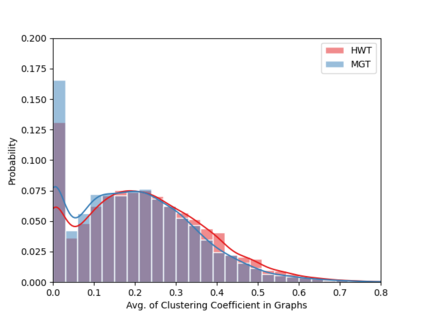

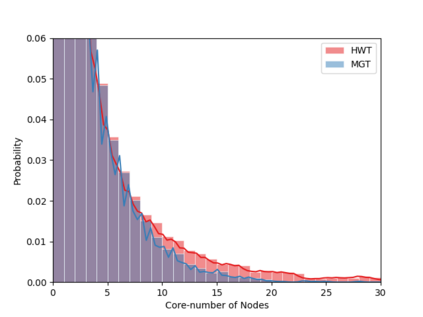

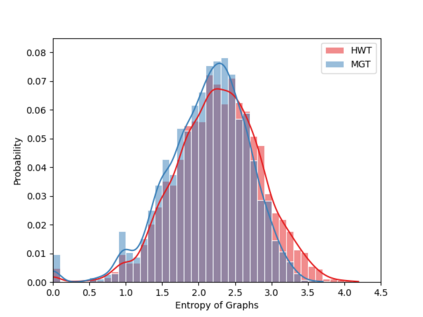

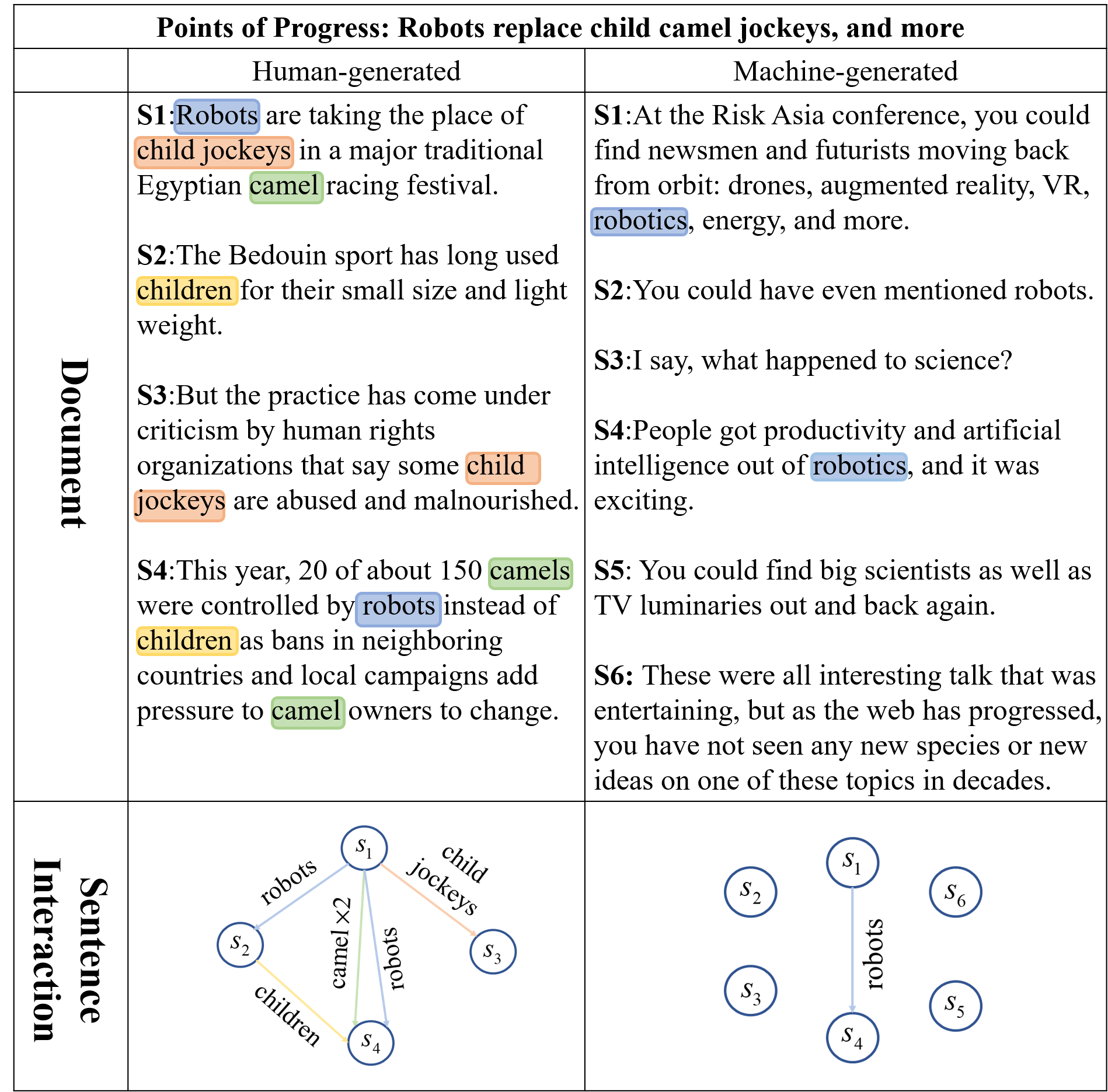

Machine-Generated Text (MGT) detection, the task of discriminating MGT from Human-Written Text (HWT), plays a crucial role in preventing misuse of text generative models, which have recently become adept at mimicking human writing style. Recently proposed detectors usually take a coarse text sequence as input and achieve good results by fine-tuning pretrained models with a standard cross-entropy loss. However, these methods fail to consider linguistic aspects of text (e.g., coherence) and sentence-level structure. Moreover, they cannot handle the low-resource problem, which often arises in practice given the enormous amount of textual data online. In this paper, we present a coherence-based contrastive learning model named CoCo to detect possible MGT in low-resource scenarios. Inspired by the distinctiveness and permanence properties of linguistic features, we represent text as a coherence graph to capture its entity consistency, which is further encoded by a pretrained model and a graph neural network. To tackle the challenge of limited data, we employ a contrastive learning framework and propose an improved contrastive loss that makes full use of hard negative samples during training. Experimental results on two public datasets show that our approach significantly outperforms state-of-the-art methods.
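To make the contrastive learning idea concrete, the following is a minimal sketch of a generic supervised contrastive loss over a batch of text embeddings, where samples sharing a label (MGT or HWT) are pulled together and all others are pushed apart. It is not CoCo's improved loss, whose exact form is not given in this abstract; the function name `supervised_contrastive_loss`, the `temperature` parameter, and the toy embeddings are assumptions made purely for illustration.

```python
import math


def supervised_contrastive_loss(embeddings, labels, temperature=0.1):
    """Generic supervised contrastive loss (illustrative, NOT CoCo's improved loss).

    embeddings: list of equal-length float vectors, one per text.
    labels: list of class ids (e.g. 0 = HWT, 1 = MGT), same length.
    """
    # L2-normalise so that dot products are cosine similarities.
    normed = []
    for vec in embeddings:
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        normed.append([x / norm for x in vec])

    n = len(normed)
    total, anchors = 0.0, 0
    for i in range(n):
        # Positives: other samples that share the anchor's label.
        positives = [j for j in range(n) if j != i and labels[j] == labels[i]]
        if not positives:
            continue  # an anchor without positives contributes nothing

        # Temperature-scaled similarity of the anchor to every other sample.
        sims = {
            j: sum(a * b for a, b in zip(normed[i], normed[j])) / temperature
            for j in range(n)
            if j != i
        }

        # Numerically stable log-sum-exp over all other samples.
        max_sim = max(sims.values())
        log_denom = max_sim + math.log(
            sum(math.exp(s - max_sim) for s in sims.values())
        )

        # Average negative log-probability of picking a positive.
        total += -sum(sims[j] - log_denom for j in positives) / len(positives)
        anchors += 1

    return total / anchors if anchors else 0.0


# Toy batch: two tight clusters in 2-D (hypothetical embeddings).
toy_embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
aligned = supervised_contrastive_loss(toy_embeddings, [0, 0, 1, 1])
misaligned = supervised_contrastive_loss(toy_embeddings, [0, 1, 0, 1])
print(aligned < misaligned)
```

In this toy batch the loss is small when the labels agree with the clusters and large when they do not. Negatives that sit close to the anchor dominate the denominator, which is the intuition behind giving hard negative samples special treatment.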
