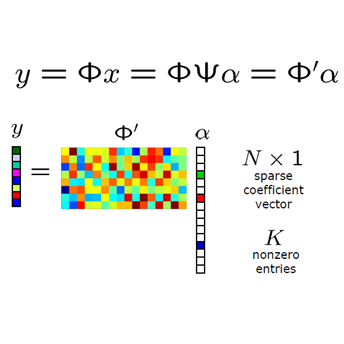

Various iterative reconstruction algorithms for inverse problems can be unfolded as neural networks. Empirically, this approach has often led to improved results, but theoretical guarantees are still scarce. While some progress on the generalization properties of neural networks has been made, major challenges remain. In this chapter, we discuss and combine these topics to present a generalization error analysis for a class of neural networks suitable for sparse reconstruction from few linear measurements. The hypothesis class considered is inspired by the classical iterative soft-thresholding algorithm (ISTA). The neural networks in this class are obtained by unfolding iterations of ISTA and learning some of the weights. Based on training samples, we aim to learn the optimal network parameters via empirical risk minimization, and thereby the optimal network for reconstructing signals from their compressive linear measurements. In particular, we may learn a sparsity basis that is shared by all of the iterations (layers) and thereby obtain a new approach to dictionary learning. For this class of networks, we present a generalization bound, which is obtained by bounding the Rademacher complexity of hypothesis classes consisting of such deep networks via Dudley's integral.
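
To make the idea of unfolding concrete, the following is a minimal NumPy sketch of ISTA written as a fixed number of layers that all share one sparsity basis. It is an illustration under stated assumptions, not the chapter's exact parameterization: the names `soft_threshold`, `unfolded_ista`, `Phi`, `tau`, and `lam` are hypothetical, the step size and threshold are fixed rather than learned, and no training loop is shown.

```python
import numpy as np


def soft_threshold(x, lam):
    """Elementwise soft-thresholding: shrink each entry toward zero by lam."""
    return np.sign(x) * np.maximum(np.abs(x) - lam, 0.0)


def unfolded_ista(y, A, Phi, num_layers=10, tau=None, lam=0.1):
    """Apply num_layers ISTA iterations, viewed as layers of a network.

    y   : measurements, shape (m,)
    A   : measurement matrix, shape (m, n)
    Phi : sparsity basis (dictionary), shape (n, n), shared by all layers.
          In a learned variant this would be the trainable weight; here it
          is simply passed in (assumption made for illustration).
    """
    B = A @ Phi  # effective sensing matrix acting on the coefficients
    if tau is None:
        # Assumed default step size: 1 / ||A Phi||_2^2 (spectral norm).
        tau = 1.0 / np.linalg.norm(B, 2) ** 2
    z = np.zeros(Phi.shape[1])  # coefficient vector, initialised at zero
    for _ in range(num_layers):
        # One layer: gradient step on the data-fit term, then thresholding.
        z = soft_threshold(z + tau * B.T @ (y - B @ z), tau * lam)
    return Phi @ z  # map coefficients back to the signal domain


# Usage: recover a sparse signal from fewer measurements than unknowns.
rng = np.random.default_rng(0)
n, m = 50, 25
x_true = np.zeros(n)
x_true[[3, 17, 41]] = [1.5, -2.0, 1.0]
A = rng.standard_normal((m, n)) / np.sqrt(m)
x_hat = unfolded_ista(A @ x_true, A, np.eye(n), num_layers=200, lam=0.05)
```

In this sketch every layer reuses the same `Phi`, which mirrors the shared sparsity basis described above; treating `Phi` as a trainable parameter and fitting it by empirical risk minimization over training pairs would turn the loop into a learned network.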
