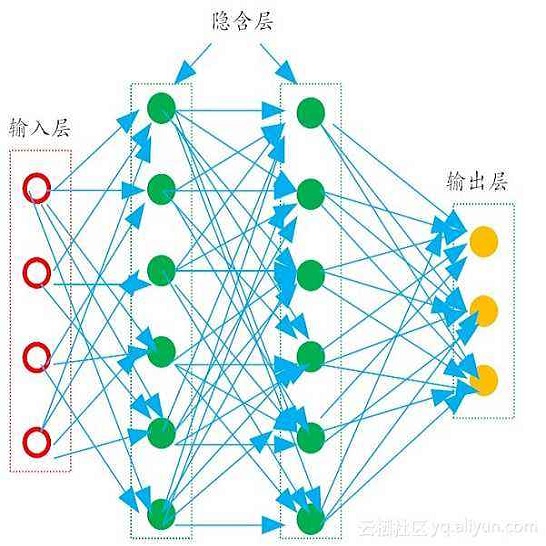

Algebraic multigrid (AMG) is one of the most widely used iterative methods for solving large sparse linear systems $Ax=b$. The coarse grid is a key component of AMG that affects the efficiency of the algorithm, and its construction relies on the strong threshold parameter $\theta$. This parameter is generally chosen empirically; many current AMG solvers default to 0.25 for 2D problems and 0.5 for 3D problems. For many practical problems, however, the quality of the coarse grid and the efficiency of AMG are sensitive to $\theta$: the default value is rarely optimal and is sometimes far from it. How to choose a better $\theta$ is therefore an important question. In this paper, we propose AutoAMG($\theta$), a deep-learning-based auto-tuning method for multiscale sparse linear systems, which arise widely in practical problems. The method uses graph neural networks (GNNs) to extract matrix features and a multilayer perceptron (MLP) to learn the mapping from matrix features to the optimal $\theta$, so that it can adaptively output a $\theta$ value for each matrix. Numerical experiments show that AutoAMG($\theta$) achieves significant speedup over the default $\theta$ value.
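To illustrate why the coarse grid is sensitive to $\theta$, the sketch below applies the classical Ruge-Stüben strength test, in which node $i$ strongly depends on node $j$ when $-a_{ij} \ge \theta \max_{k \ne i}(-a_{ik})$. This is an assumption about which criterion is meant, since the abstract does not spell it out, and it is not part of AutoAMG($\theta$) itself. The function names `anisotropic_laplacian` and `strong_connections` and the test matrix (a 5-point discretization of $-\epsilon u_{xx} - u_{yy}$ with $\epsilon = 0.1$) are hypothetical choices made for illustration.

```python
import numpy as np

def anisotropic_laplacian(n, eps):
    """Dense 5-point stencil for -eps*u_xx - u_yy on an n-by-n grid (illustrative only)."""
    T = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
    I = np.eye(n)
    # x-couplings have magnitude eps, y-couplings have magnitude 1
    return eps * np.kron(I, T) + np.kron(T, I)

def strong_connections(A, theta):
    """Boolean mask S with S[i, j] True when i strongly depends on j.

    Assumed classical criterion: -a_ij >= theta * max_{k != i}(-a_ik).
    """
    off = -np.asarray(A, dtype=float)
    np.fill_diagonal(off, 0.0)            # the diagonal never counts as a connection
    row_max = off.max(axis=1)             # largest negative off-diagonal magnitude per row
    return (off >= theta * row_max[:, None]) & (off > 0)

A = anisotropic_laplacian(4, eps=0.1)
for theta in (0.25, 0.05):
    n_strong = int(strong_connections(A, theta).sum())
    print(f"theta={theta}: {n_strong} strong connections")
```

With $\theta = 0.25$ only the couplings in the $y$ direction pass the test, while with $\theta = 0.05$ the weak $x$-direction couplings pass as well, so the strength graph handed to the coarsening step is different for the two values.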
