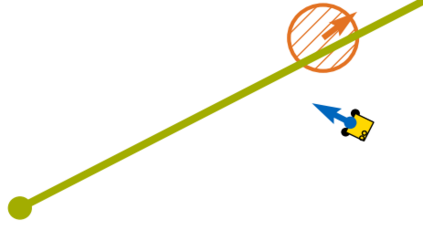

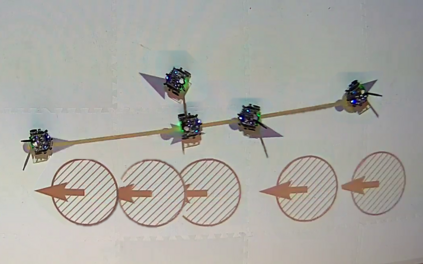

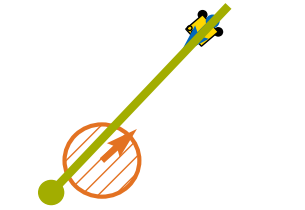

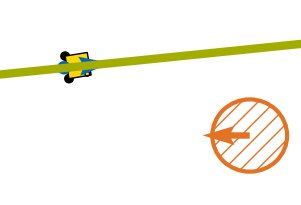

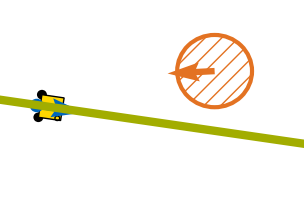

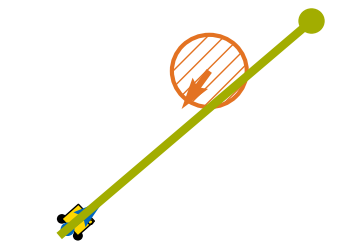

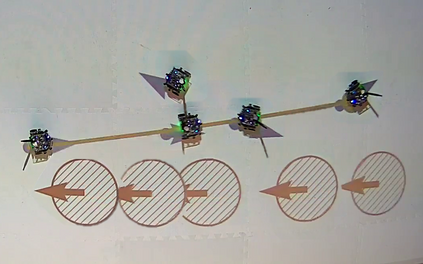

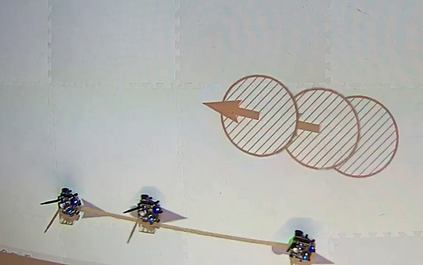

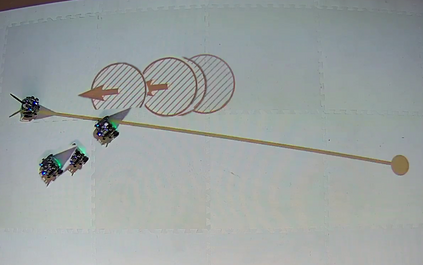

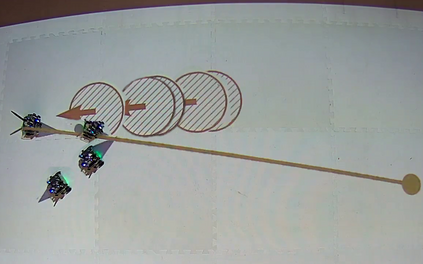

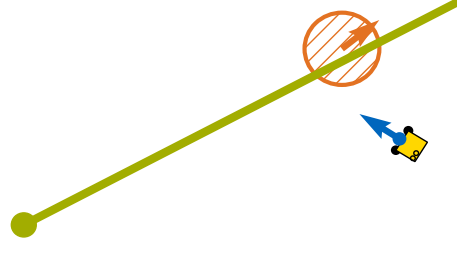

Reinforcement Learning (RL) can solve complex tasks but does not intrinsically provide any guarantees on system behavior. For real-world systems that fulfill safety-critical tasks, such guarantees on safety specifications are necessary. To bridge this gap, we propose a verifiably safe RL procedure with probabilistic guarantees. First, our approach probabilistically verifies a candidate controller with respect to a temporal logic specification, while randomizing the controller's inputs within a bounded set. Then, we use RL to improve the performance of this probabilistically verified, i.e. safe, controller and explore in the same bounded set around the controller's input as was randomized over in the verification step. Finally, we calculate probabilistic safety guarantees with respect to temporal logic specifications for the learned agent. Our approach is efficient for continuous action and state spaces and separates safety verification and performance improvement into two independent steps. We evaluate our approach on a safe evasion task where a robot has to evade a dynamic obstacle in a specific manner while trying to reach a goal. The results show that our verifiably safe RL approach leads to efficient learning and performance improvements while maintaining safety specifications.

翻译:强化学习( RL) 能够解决复杂的任务,但并不在本质上为系统行为提供任何保障。 对于完成安全关键任务的真实世界系统, 安全规格方面的保障是必要的。 为了弥合这一差距, 我们建议了一个可以核查的安全 RL 程序, 并有概率性保障。 首先, 我们的方法可以按照时间逻辑规范对候选人控制器进行核查, 同时将控制器的投入在受约束的数据集中随机排序。 然后, 我们使用 RL 来改进这个概率性能, 即安全、 控制器和探索在控制器输入上设置的相同界限的系统。 最后, 我们计算出一个安全性能保障, 以时间逻辑规范为学习的代理器。 我们的方法对于持续的行动和状态是有效的, 并将安全核查和性能的改进分为两个独立步骤。 我们评估了我们的安全规避任务的方法, 机器人在试图达到目标时, 不得不以特定的方式回避动态障碍。 结果显示, 我们的安全性RL 方法在维持安全性规范的同时, 能够被核查的学习和性能提高安全性。