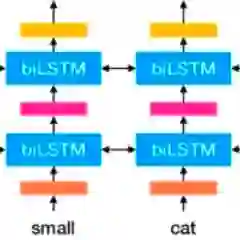

This study evaluates the robustness of two state-of-the-art deep contextual language representations, ELMo and DistilBERT, on two supervised tasks: binary protest news classification and sentiment analysis of product reviews. A "cross-context" setting is created by using test sets drawn from a different source than the training data. Specifically, in the news classification task, the models are developed on local news from India and tested on local news from China. In the sentiment analysis task, the models are trained on movie reviews and tested on customer reviews. This comparison aims to explore the limits of the representational power of today's Natural Language Processing systems on the path to systems that generalize to real-life scenarios. The models are fine-tuned and fed into a Feed-Forward Neural Network and a Bidirectional Long Short-Term Memory network. Multinomial Naive Bayes and Linear Support Vector Machine are used as traditional baselines. The results show that, in binary text classification, DistilBERT is significantly better than ELMo at generalizing to the cross-context setting. ELMo is observed to be significantly more robust to the cross-context test data than both baselines. On the other hand, the baselines perform comparably to ELMo when the training and test data are subsets of the same corpus (no cross-context). DistilBERT is also found to be 30% smaller and 83% faster than ELMo. The results suggest that DistilBERT can transfer generic semantic knowledge to other domains better than ELMo. DistilBERT is also better suited to integration into real-life systems because it requires a smaller computational training budget. When generalization is not the top priority and the test domain is similar to the training domain, traditional ML algorithms can still be considered more economical alternatives to deep language representations.
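The cross-context protocol described above can be illustrated with a small, self-contained sketch. It is not the study's code: it implements only a Multinomial Naive Bayes baseline in plain Python, uses a whitespace tokenizer as a simplification, and runs on a handful of invented sentences that stand in for the movie-review training set and the customer-review test set. The names `MultinomialNB`, `accuracy`, `in_domain_acc`, and `cross_acc` are hypothetical and chosen for illustration.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase whitespace tokenizer (a simplification for this sketch)."""
    return text.lower().split()


class MultinomialNB:
    """Minimal multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)      # documents per class
        self.word_counts = defaultdict(Counter)  # token counts per class
        self.vocab = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict_one(self, text):
        total_docs = sum(self.class_counts.values())
        vocab_size = len(self.vocab)
        best_label, best_score = None, -math.inf
        for label, n_docs in self.class_counts.items():
            score = math.log(n_docs / total_docs)  # log prior
            total_words = sum(self.word_counts[label].values())
            for tok in tokenize(text):
                if tok not in self.vocab:
                    continue  # skip tokens never seen during training
                count = self.word_counts[label][tok]
                score += math.log((count + 1) / (total_words + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label

    def predict(self, texts):
        return [self.predict_one(t) for t in texts]


def accuracy(model, texts, labels):
    """Fraction of examples whose predicted label matches the true label."""
    preds = model.predict(texts)
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


# Invented toy data (1 = positive, 0 = negative); NOT from the study.
train_texts = [
    "a moving film with a brilliant cast",
    "dull plot and wooden acting",
    "brilliant direction and a moving story",
    "wooden dialogue and a dull ending",
]
train_labels = [1, 0, 1, 0]

# Same context: held-out examples from the training domain (movie reviews).
in_domain_texts = ["a brilliant and moving story", "dull acting"]
in_domain_labels = [1, 0]

# Cross-context: examples from a different domain (customer reviews).
cross_texts = ["battery died after a week", "works great and arrived quickly"]
cross_labels = [0, 1]

model = MultinomialNB().fit(train_texts, train_labels)
in_domain_acc = accuracy(model, in_domain_texts, in_domain_labels)
cross_acc = accuracy(model, cross_texts, cross_labels)
print(f"in-domain accuracy:     {in_domain_acc:.2f}")
print(f"cross-context accuracy: {cross_acc:.2f}")
```

The point of the sketch is the evaluation design rather than the numbers: the model is fitted once on the source domain and then scored separately on a same-domain split and on a different-domain split, so the gap between the two scores gives a rough indication of robustness to the context shift. A bag-of-words model has no information about vocabulary it never saw in training, which is one plausible reason such baselines would lose ground in the cross-context setting.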
