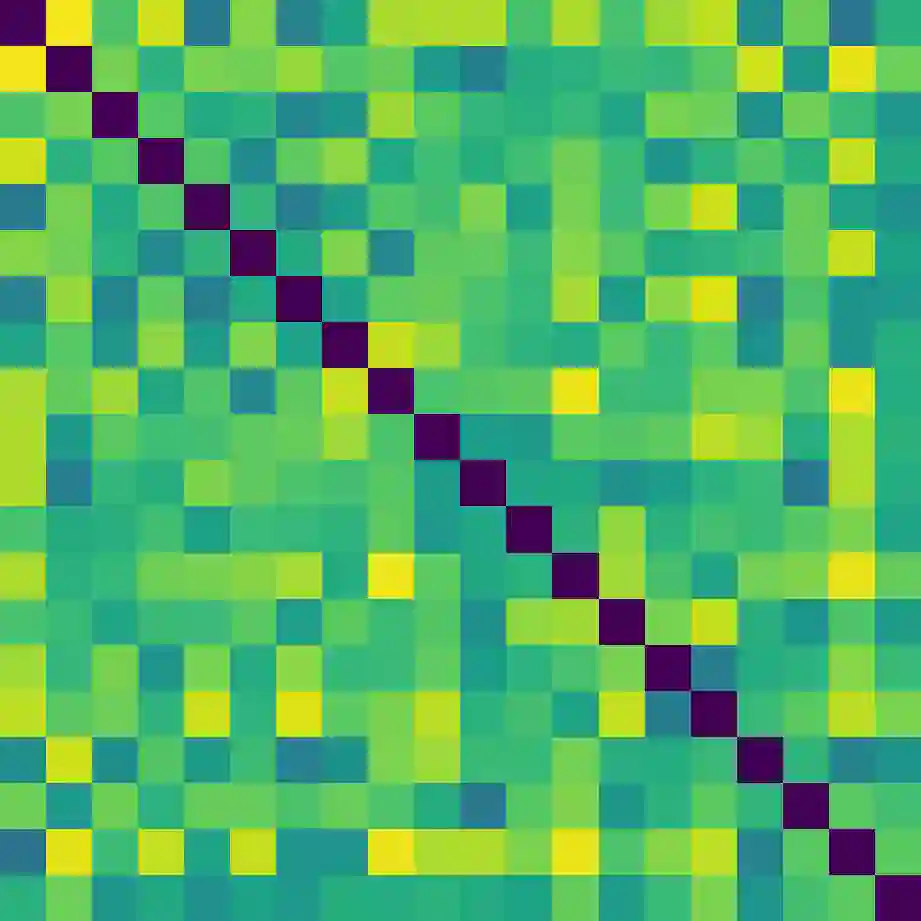

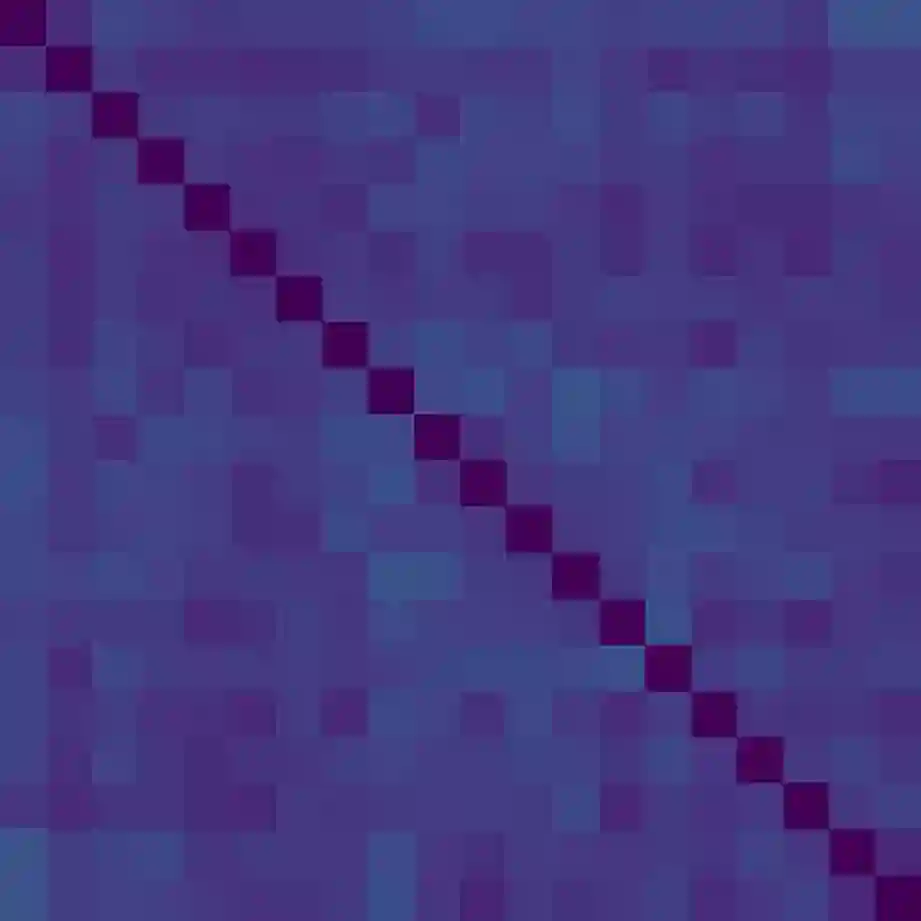

Recent results in supervised learning suggest that while overparameterized models have the capacity to overfit, they in fact generalize quite well. We ask whether the same phenomenon occurs for offline contextual bandits. Our results are mixed. Value-based algorithms benefit from the same generalization behavior as overparameterized supervised learning, but policy-based algorithms do not. We show that this discrepancy is due to the *action-stability* of their objectives. An objective is action-stable if there exists a prediction (action-value vector or action distribution) that is optimal no matter which action is observed. While value-based objectives are action-stable, policy-based objectives are unstable. We formally prove upper bounds on the regret of overparameterized value-based learning and lower bounds on the regret of policy-based algorithms. In our experiments with large neural networks, this gap between action-stable value-based objectives and unstable policy-based objectives leads to significant performance differences.
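The definition of action-stability can be made concrete with a small sketch. The abstract does not spell out the objectives, so the code below assumes a squared-error loss on the observed action for the value-based case and a negative importance-weighted reward for the policy-based case. The reward vector, the uniform logging policy, and all function names are hypothetical and chosen only for illustration.

```python
# Hypothetical single-context example: three actions with known, noiseless rewards.
rewards = [1.0, 0.5, 0.2]          # assumed reward of each action
behavior = [1 / 3, 1 / 3, 1 / 3]   # assumed uniform logging (behavior) policy
n = len(rewards)


def value_loss(q, a):
    """Assumed value-based objective: squared error on the observed action only."""
    return (q[a] - rewards[a]) ** 2


def policy_loss(pi, a):
    """Assumed policy-based objective: negative importance-weighted reward."""
    return -rewards[a] * pi[a] / behavior[a]


def one_hot(a):
    """Deterministic policy that always plays action a."""
    return [1.0 if i == a else 0.0 for i in range(n)]


# Value-based: the prediction q = rewards reaches the minimum loss (zero)
# whichever action happens to be observed, so a single optimum exists.
value_stable = all(value_loss(rewards, a) == 0.0 for a in range(n))

# Policy-based: the loss is linear in pi[a], so with a positive reward its
# minimizer over the simplex is the one-hot policy on the observed action.
best_loss = [min(policy_loss(one_hot(b), a) for b in range(n)) for a in range(n)]

# Action-stability would need one policy that is optimal for every observed
# action. Only one-hot policies can be optimal here, so checking them suffices.
policy_stable = any(
    all(policy_loss(one_hot(b), a) == best_loss[a] for a in range(n))
    for b in range(n)
)

print("value-based objective action-stable:", value_stable)
print("policy-based objective action-stable:", policy_stable)
```

Under these assumptions the value-based loss has one minimizer shared by all observed actions, while the minimizer of the policy-based loss moves with the observed action. This is the sense in which an overparameterized model that fits each observed sample exactly can still recover good values but is pulled toward whichever action was logged.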
