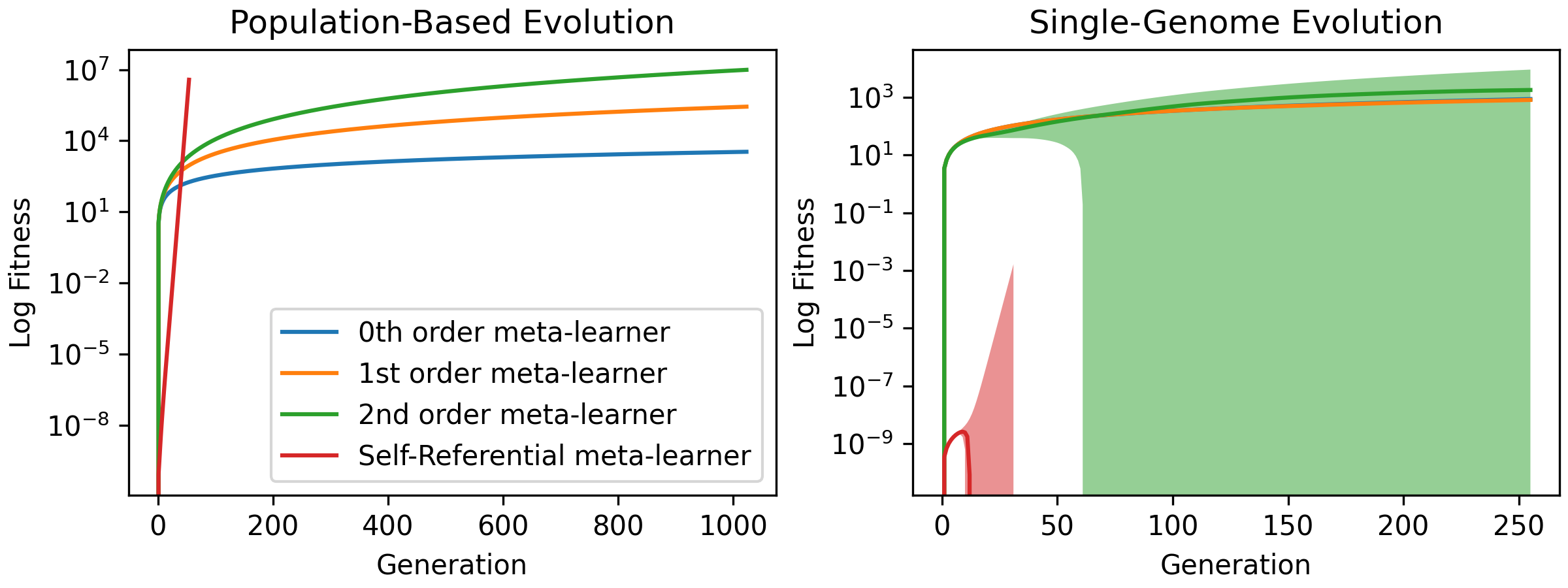

Meta-learning, the notion of learning to learn, enables learning systems to quickly and flexibly solve new tasks. This usually involves defining a set of outer-loop meta-parameters that are then used to update a set of inner-loop parameters. Most meta-learning approaches use complicated and computationally expensive bi-level optimisation schemes to update these meta-parameters. Ideally, systems should perform multiple orders of meta-learning, i.e. learn to learn to learn and so on, to accelerate their own learning. Unfortunately, standard meta-learning techniques are often inappropriate for these higher-order meta-parameters because the meta-optimisation procedure becomes too complicated or unstable. Inspired by the higher-order meta-learning we observe in real-world evolution, we show that simple population-based evolution implicitly optimises meta-parameters of arbitrarily high order. First, we prove theoretically and show empirically that this holds in a simple setting. We then introduce a minimal self-referential parameterisation, which in principle enables arbitrary-order meta-learning. Finally, we show that higher-order meta-learning improves performance on time series forecasting tasks.
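To make the central claim concrete, the following is a minimal sketch of how selection on fitness alone might implicitly tune higher-order meta-parameters. It is an illustration under stated assumptions, not the paper's experimental setup: the function name `evolve`, the stacked layout `[x0, x1, ..., x_order]`, the fitness `f = x0`, truncation selection of the top half, and all default values are hypothetical choices made for this example. Each entry `x_{i+1}` is added to `x_i` once per generation, so `x1` acts as a step for `x0`, `x2` as a step for `x1`, and so on; only the highest-order entry is mutated.

```python
import random


def evolve(order, pop_size=64, generations=200, noise=0.1, seed=0):
    """Toy population-based evolution over stacked (meta-)parameters.

    Assumed setup (hypothetical, for illustration only):
      - an individual is a list [x0, x1, ..., x_order], all starting at 0
      - fitness is x0; higher entries are never scored directly
      - every generation x_i += x_{i+1}, and Gaussian noise is added
        to the highest-order entry only
      - the fitter half of the population survives and is duplicated
    Returns the mean fitness of the final population.
    """
    rng = random.Random(seed)
    pop = [[0.0] * (order + 1) for _ in range(pop_size)]

    for _ in range(generations):
        for ind in pop:
            # Ascending order means each x_i sees the previous
            # generation's value of x_{i+1}.
            for i in range(order):
                ind[i] += ind[i + 1]
            # Mutation acts only on the top-level meta-parameter.
            ind[-1] += rng.gauss(0.0, noise)

        # Truncation selection on x0 alone; copy survivors so the two
        # halves of the new population can diverge afterwards.
        pop.sort(key=lambda ind: ind[0])
        elite = pop[pop_size // 2:]
        pop = [list(ind) for ind in elite] + [list(ind) for ind in elite]

    return sum(ind[0] for ind in pop) / len(pop)


if __name__ == "__main__":
    for order in range(3):
        print(f"order={order}: mean fitness {evolve(order):.2f}")
```

Because individuals with a large `x0` tend to descend from lineages with large `x1`, `x2`, and so on, the higher-order entries are selected without ever being scored. In this toy setting, adding an order should therefore let fitness grow faster over the same number of generations.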
