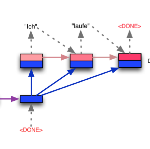

There is mounting evidence that existing neural network models, in particular the very popular sequence-to-sequence architecture, struggle with compositional generalization, i.e., the ability to systematically generalize to unseen compositions of seen components. In this paper, we demonstrate that one factor hindering compositional generalization is that the learned representations are entangled. We propose an extension to sequence-to-sequence models that learns disentangled representations by adaptively re-encoding the source input at each time step. Specifically, we condition the source representations on the newly decoded target context, which makes it easier for the encoder to exploit specialized information for each prediction rather than capturing all source information in a single forward pass. Experimental results on semantic parsing and machine translation show that our proposal yields more disentangled representations and better generalization.
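
The re-encoding idea can be illustrated with a small sketch. This is not the paper's architecture, which the abstract does not specify; it is a toy NumPy example that assumes a single untrained self-attention layer, random parameters, and made-up names (`encode`, `greedy_decode`, `VOCAB`, `DIM`, `bos_id`, `eos_id`). It only shows the control flow: at every decoding step the source is encoded again together with the target prefix decoded so far, so the source representations change from step to step instead of being computed once.

```python
import numpy as np

rng = np.random.default_rng(0)
VOCAB, DIM = 12, 8  # hypothetical toy sizes, not taken from the paper

# Hypothetical random parameters; a real model would learn these.
embed = rng.normal(size=(VOCAB, DIM))
W_q = rng.normal(size=(DIM, DIM)) / np.sqrt(DIM)
W_k = rng.normal(size=(DIM, DIM)) / np.sqrt(DIM)
W_out = rng.normal(size=(DIM, VOCAB))


def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def encode(source_ids, target_prefix_ids):
    """Encode the source jointly with the target prefix.

    Source and prefix tokens attend to each other in one self-attention
    layer; only the states at the source positions are returned.
    """
    tokens = np.concatenate([source_ids, target_prefix_ids])
    x = embed[tokens]
    scores = (x @ W_q) @ (x @ W_k).T / np.sqrt(DIM)
    h = softmax(scores) @ x
    return h[: len(source_ids)]


def greedy_decode(source_ids, bos_id=0, eos_id=1, max_len=5):
    """Greedy decoding that re-encodes the source at every step."""
    source = np.array(source_ids)
    prefix = [bos_id]
    states_per_step = []
    for _ in range(max_len):
        # Re-encode the source, conditioned on the current target prefix.
        src_states = encode(source, np.array(prefix))
        states_per_step.append(src_states)
        # Toy prediction head (an assumption): mean-pool, then project.
        logits = src_states.mean(axis=0) @ W_out
        next_id = int(np.argmax(logits))
        prefix.append(next_id)
        if next_id == eos_id:
            break
    return prefix[1:], states_per_step
```

Calling `encode` on the same source with two different target prefixes returns different source states, which is the property the abstract describes. A standard sequence-to-sequence encoder would instead return the same states regardless of the prefix.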
