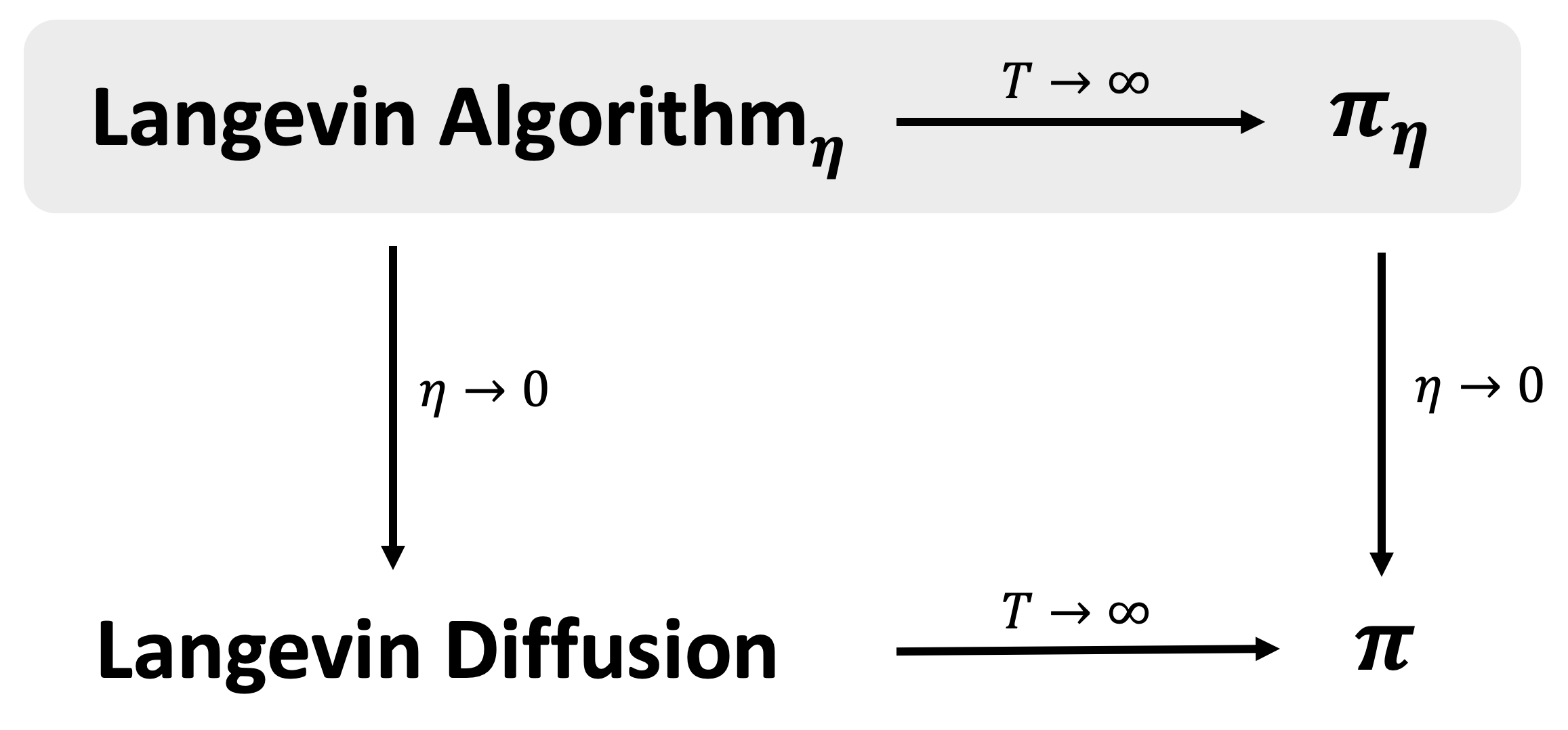

Sampling from a high-dimensional distribution is a fundamental task in statistics, engineering, and the sciences. A canonical approach is the Langevin Algorithm, i.e., the Markov chain for the discretized Langevin Diffusion. This is the sampling analog of Gradient Descent. Although it has been studied for several decades in multiple communities, tight mixing bounds for this algorithm remain unresolved even in the seemingly simple setting of log-concave distributions over a bounded domain. This paper completely characterizes the mixing time of the Langevin Algorithm to its stationary distribution in this setting (and others). This mixing result can be combined with any bound on the discretization bias in order to sample from the stationary distribution of the continuous Langevin Diffusion. In this way, we disentangle the study of the mixing and bias of the Langevin Algorithm. Our key insight is to bring a technique from the differential privacy literature into the sampling literature. This technique, called Privacy Amplification by Iteration, uses as a potential a variant of Rényi divergence that is made geometrically aware via Optimal Transport smoothing. This gives a short, simple proof of optimal mixing bounds and has several additional appealing properties. First, our approach removes all unnecessary assumptions required by other sampling analyses. Second, our approach unifies many settings: it extends unchanged if the Langevin Algorithm uses projections, stochastic mini-batch gradients, or strongly convex potentials (whereby our mixing time improves exponentially). Third, our approach exploits convexity only through the contractivity of a gradient step, which is reminiscent of how convexity is used in textbook proofs of Gradient Descent. In this way, we offer a new approach towards further unifying the analyses of optimization and sampling algorithms.
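The abstract does not spell out the update rule, so the following is a minimal sketch, assuming the standard form of the projected Langevin Algorithm for a target density proportional to $e^{-f}$ on a convex set $K$: $x_{k+1} = \Pi_K\big(x_k - \eta \nabla f(x_k) + \sqrt{2\eta}\, z_k\big)$ with $z_k \sim \mathcal{N}(0, I)$. It shows the pieces the abstract refers to: a gradient step (the sampling analog of Gradient Descent), injected Gaussian noise, and an optional projection. The function names `project_to_ball` and `projected_langevin`, the choice of a Euclidean ball for $K$, and the step size $\eta$ are illustrative assumptions, not the paper's code. Passing a stochastic mini-batch gradient estimator as `grad_f` would give the stochastic variant mentioned above.

```python
import math
import random


# Hypothetical helper: Euclidean projection onto K = {x : ||x||_2 <= radius}.
# The ball is an assumed example of a bounded convex domain.
def project_to_ball(x, radius):
    norm = math.sqrt(sum(xi * xi for xi in x))
    if norm <= radius:
        return list(x)
    scale = radius / norm
    return [xi * scale for xi in x]


# Hypothetical sketch of the projected Langevin Algorithm:
#   x_{k+1} = Proj_K(x_k - eta * grad_f(x_k) + sqrt(2 * eta) * z_k),  z_k ~ N(0, I).
# grad_f may be an exact gradient or a stochastic (mini-batch) estimate.
def projected_langevin(grad_f, x0, step_size, num_steps, radius, rng=None):
    if rng is None:
        rng = random.Random()
    noise_scale = math.sqrt(2.0 * step_size)
    x = project_to_ball(x0, radius)
    for _ in range(num_steps):
        g = grad_f(x)
        # Gradient step plus isotropic Gaussian noise with variance 2 * eta per coordinate.
        y = [xi - step_size * gi + noise_scale * rng.gauss(0.0, 1.0)
             for xi, gi in zip(x, g)]
        # Project the result back onto the domain K.
        x = project_to_ball(y, radius)
    return x
```

For example, take the hypothetical potential $f(x) = x^2/2$ in one dimension (gradient `lambda v: v`), a ball of radius 10 that the chain essentially never reaches, and $\eta = 0.1$. The unprojected chain is then $x_{k+1} = (1-\eta)x_k + \sqrt{2\eta}\, z_k$, whose stationary variance solves $v = (1-\eta)^2 v + 2\eta$, giving $v = 2/(2-\eta) \approx 1.053$ instead of the variance 1 of $e^{-f}$. Running many independent chains and comparing their empirical variance with $2/(2-\eta)$ separates the two questions the abstract distinguishes: how fast the chain mixes to its own stationary distribution, and how far that distribution is from the continuous diffusion's (the discretization bias).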

翻译:取自高维分布的抽样是统计、工程和科学的基本任务。 本文将兰格文 Algorithm 的混合时间完全描述为 兰格文 Algorithm, 也就是 兰格文 分散传播的 Markov 链。 这是 梯度源的抽样模拟模拟。 尽管在多个社区中研究了数十年, 但这一算法的紧密混合界限仍然没有解决, 即使在一个封闭域的对调调分布的表面上简单设置中也是如此。 本文将兰格文 Algorithm 的混合时间完全描述为在此设置中( 其它) 的固定分布。 这种混合结果可以结合在离散偏差偏差偏差偏差的偏差上结合 兰格文 源源源源。 以这种不相近的变异的变异性, 利用这种变异性变异性, 利用这种变异性变异性变异的变异性, 利用这种变异性变异性变异性变异性, 利用了我们最接近性变异的变异性变异性变异的变异性变异性变异性 。