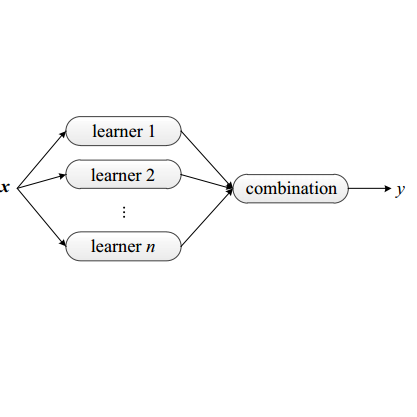

Mixture of experts (ME) is a neural-network-based ensemble learning method that can substantially improve overall classification accuracy. It follows the divide-and-conquer principle: the problem space is divided among several experts under the supervision of a gating network. In this paper, we propose an ensemble learning method based on ME, named mixture of ELM-based experts with trainable gating network (MEETG), to reduce the computational cost and speed up the learning process of ME. The standard ME structure uses multilayer perceptrons (MLPs) as base experts and gating network, and trains them with a gradient-based learning algorithm, which is iterative and time-consuming. To overcome these problems, we exploit the advantages of the extreme learning machine (ELM) in designing the structure of ME. ELM, a learning algorithm for single-hidden-layer feedforward neural networks, provides a much faster learning process and better generalization ability than some traditional learning algorithms. In addition, the proposed method uses a trainable gating network to aggregate the outputs of the experts dynamically according to the input sample. Experimental results and statistical analysis on 11 benchmark datasets confirm that MEETG achieves acceptable performance on classification problems. Furthermore, the results show that the proposed approach outperforms the original ELM in prediction stability and classification accuracy.
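To make the idea concrete, the following is a minimal sketch of ELM-based experts combined by an input-dependent gate. It is not the MEETG algorithm itself, whose details the abstract does not give. The ELM part follows the usual formulation (random, fixed hidden weights and output weights solved in closed form with a pseudoinverse). Everything about the gate is an assumption made for illustration: the names `fit_mixture` and `predict_mixture`, the `tanh` activation, and the choice to fit a linear gate by least squares to "which expert had the lowest error on this sample".

```python
import numpy as np


def one_hot(labels, n_classes):
    """Encode integer labels as one-hot rows."""
    return np.eye(n_classes)[labels]


def softmax(Z):
    """Row-wise softmax, shifted for numerical stability."""
    Z = Z - Z.max(axis=1, keepdims=True)
    E = np.exp(Z)
    return E / E.sum(axis=1, keepdims=True)


class ELM:
    """Single-hidden-layer network: random hidden layer, least-squares output layer."""

    def __init__(self, n_hidden, rng):
        self.n_hidden = n_hidden
        self.rng = rng

    def _hidden(self, X):
        return np.tanh(X @ self.W + self.b)

    def fit(self, X, T):
        # Hidden weights are drawn once and never updated (no gradient descent).
        self.W = self.rng.normal(size=(X.shape[1], self.n_hidden))
        self.b = self.rng.normal(size=self.n_hidden)
        H = self._hidden(X)
        # Output weights: minimum-norm least-squares solution of H @ beta = T.
        self.beta = np.linalg.pinv(H) @ T
        return self

    def predict(self, X):
        return self._hidden(X) @ self.beta


def add_bias(X):
    return np.hstack([X, np.ones((X.shape[0], 1))])


def fit_mixture(X, y, n_classes, n_experts=3, n_hidden=20, seed=0):
    """Hypothetical helper: train ELM experts, then a linear gate (assumed scheme)."""
    rng = np.random.default_rng(seed)
    T = one_hot(y, n_classes)
    experts = [ELM(n_hidden, rng).fit(X, T) for _ in range(n_experts)]
    # Per-sample squared error of each expert, shape (n_samples, n_experts).
    errs = np.stack([((e.predict(X) - T) ** 2).sum(axis=1) for e in experts], axis=1)
    # Assumed gate target: one-hot index of the lowest-error expert per sample.
    G = one_hot(errs.argmin(axis=1), n_experts)
    V = np.linalg.pinv(add_bias(X)) @ G
    return experts, V


def gate_weights(V, X):
    """Input-dependent mixing weights; each row sums to 1."""
    return softmax(add_bias(X) @ V)


def predict_mixture(experts, V, X):
    g = gate_weights(V, X)                                    # (n, n_experts)
    outs = np.stack([e.predict(X) for e in experts], axis=1)  # (n, n_experts, n_classes)
    return (g[:, :, None] * outs).sum(axis=1).argmax(axis=1)
```

In this sketch the experts differ only in their random hidden weights and all see the full training set, so the gate does the soft partitioning after the fact. A full ME scheme would also let the gate influence how each expert is trained.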
