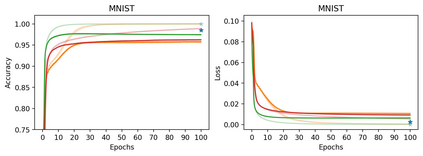

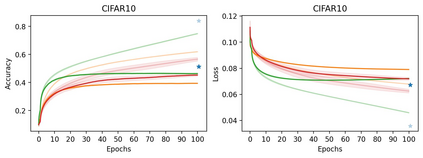

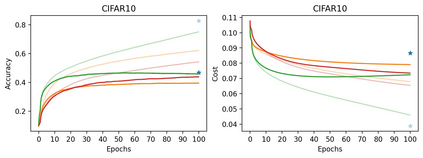

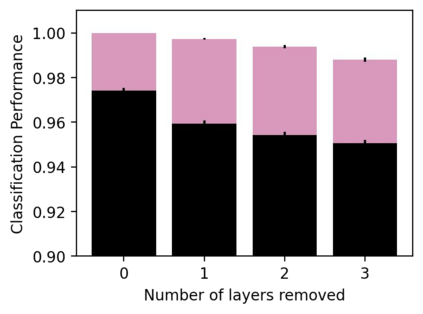

Learning in biological and artificial neural networks is often framed as a problem in which targeted error signals directly guide parameter updates toward improved network behaviour. Backpropagation of error (BP) is an example of such an approach and has proven to be a highly successful application of stochastic gradient descent to deep neural networks. However, BP relies on the transmission of gradient information directly to parameters, and frames learning as two completely separate passes. We propose constrained parameter inference (COPI) as a new principle for learning. The COPI approach to learning proposes that parameters might infer their updates based upon local neuron activities. This estimation of network parameters is possible under the constraints of decorrelated neural inputs and top-down perturbations of neural states, where credit is assigned to units instead of parameters directly. The form of the top-down perturbation determines which credit assignment method is being used, and when aligned with BP it constitutes a mixture of the forward and backward passes. We show that COPI is not only more biologically plausible but also provides distinct advantages for fast learning when compared to BP.
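The claim that parameters can be estimated from local activities when inputs are decorrelated can be illustrated with a toy linear layer. The sketch below is not the authors' algorithm. It only shows the underlying identity: if a = W x and E[x_i x_j] = 0 for i ≠ j, then E[a_k x_i] = W_ki E[x_i²], so each weight can be read off from statistics of its own pre- and post-synaptic units. The names `infer_weights`, `forward`, `W_target`, and `eps`, and the specific form of the perturbation (a small nudge of each output toward a target), are assumptions made for illustration.

```python
from itertools import product


def forward(W, x):
    """Linear layer: a = W x, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def infer_weights(inputs, outputs):
    """Estimate W from paired activities, assuming decorrelated inputs.

    Uses W[k][i] = E[a_k * x_i] / E[x_i^2], which holds for a = W x
    when E[x_i * x_j] = 0 for i != j.
    """
    n = len(inputs)
    n_in, n_out = len(inputs[0]), len(outputs[0])
    power = [sum(x[i] ** 2 for x in inputs) / n for i in range(n_in)]
    return [
        [sum(a[k] * x[i] for x, a in zip(inputs, outputs)) / n / power[i]
         for i in range(n_in)]
        for k in range(n_out)
    ]


# Toy inputs that are exactly decorrelated: every sign pattern, with a
# different scale per input unit (so the input variances differ).
scales = [1.0, 2.0, 0.5]
inputs = [[s * c for s, c in zip(signs, scales)]
          for signs in product([-1.0, 1.0], repeat=len(scales))]

W = [[0.5, -1.0, 2.0], [1.5, 0.25, -0.75]]
outputs = [forward(W, x) for x in inputs]

# Unperturbed activities: the estimate recovers the current weights.
W_hat = infer_weights(inputs, outputs)

# Hypothetical top-down perturbation: nudge each output unit a small
# step eps toward the output of a target mapping W_target.
W_target = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
eps = 0.1
perturbed = [
    [a_k + eps * (t_k - a_k) for a_k, t_k in zip(a, forward(W_target, x))]
    for x, a in zip(inputs, outputs)
]

# Perturbed activities: the same estimator now returns shifted weights.
W_new = infer_weights(inputs, perturbed)
```

In this toy setting `W_hat` matches `W`, and `W_new` equals `W` moved a fraction `eps` of the way toward `W_target`. Credit was assigned only to the output units, and the weights picked up the change from local statistics. The exact recovery depends on the inputs being decorrelated; with correlated inputs the per-weight ratio mixes contributions from other inputs.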
