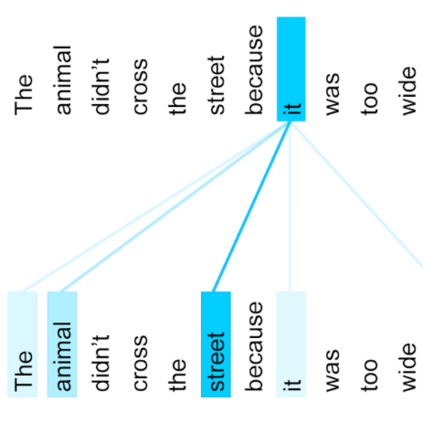

Curriculum learning, which decouples the original spectrum estimation task into multiple easier sub-tasks to achieve better performance, has begun to thrive in speech enhancement. Motivated by this, we propose a dual-branch attention-in-attention transformer, dubbed DB-AIAT, that handles both coarse- and fine-grained regions of the spectrum in parallel. From a complementary perspective, a magnitude masking branch coarsely estimates the overall magnitude spectrum, while a complex refining branch simultaneously compensates for the missing spectral details and implicitly derives phase information. Within each branch, we propose a novel attention-in-attention transformer-based module that replaces the conventional RNNs and temporal convolutional networks for temporal sequence modeling. Specifically, the proposed attention-in-attention transformer consists of adaptive temporal-frequency attention transformer blocks and an adaptive hierarchical attention module, aiming to capture long-term temporal-frequency dependencies and further aggregate global hierarchical contextual information. Experimental results on Voice Bank + DEMAND demonstrate that DB-AIAT achieves state-of-the-art performance (e.g., 3.31 PESQ, 95.6% STOI, and 10.79 dB SSNR) over previous advanced systems with a relatively small model size (2.81M parameters).
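To make the complementary roles of the two branches concrete, the following is a minimal NumPy sketch of how a coarse magnitude estimate and a complex refinement could be fused. The abstract does not state the fusion rule, so two points are assumptions here: the coarse magnitude is paired with the noisy phase, and the refining branch's output is added as a complex residual. The function name `combine_branches`, the array shapes, and the random stand-ins for the branch outputs are hypothetical and only serve the illustration.

```python
import numpy as np

def combine_branches(noisy_spec, mask, residual):
    """Fuse a coarse masked-magnitude estimate with a complex residual.

    noisy_spec: complex STFT of the noisy signal, shape (freq, time)
    mask:       real-valued magnitude mask (stand-in for the masking branch output)
    residual:   complex correction (stand-in for the refining branch output)

    Assumption: additive fusion; the abstract does not specify this.
    """
    noisy_mag = np.abs(noisy_spec)
    noisy_phase = np.angle(noisy_spec)
    # Coarse estimate: masked magnitude, reusing the noisy phase (assumption).
    coarse_spec = (mask * noisy_mag) * np.exp(1j * noisy_phase)
    # The complex residual adjusts both real and imaginary parts,
    # so the phase of the result can differ from the noisy phase.
    return coarse_spec + residual

# Random placeholders instead of real network outputs; sizes are arbitrary.
rng = np.random.default_rng(0)
shape = (161, 50)  # (frequency bins, frames)
noisy = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)
mask = rng.uniform(0.0, 1.0, shape)
residual = 0.1 * (rng.standard_normal(shape) + 1j * rng.standard_normal(shape))

enhanced = combine_branches(noisy, mask, residual)
```

With a zero residual the result keeps the noisy phase and only its magnitude changes; any phase correction in this sketch therefore comes from the complex residual, which is one way to read "implicitly derive phase information".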