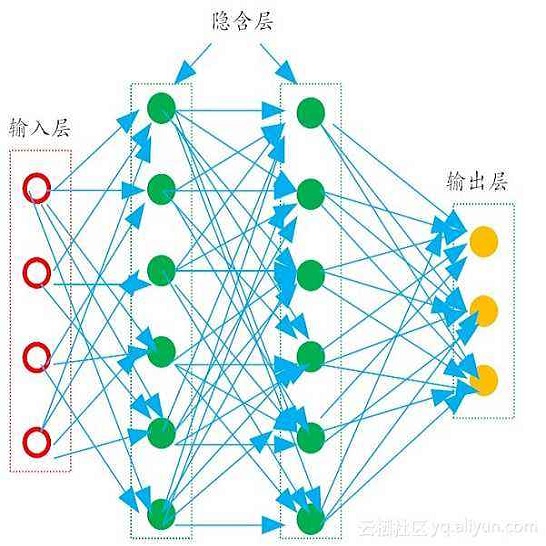

Data-driven learning of the solution operators of partial differential equations (PDEs) has recently emerged as a promising paradigm for approximating the underlying solutions. The solution operators are usually parameterized by deep learning models built upon problem-specific inductive biases. An example is a convolutional or graph neural network that exploits the local grid structure on which function values are sampled. The attention mechanism, on the other hand, provides a flexible way to implicitly exploit the patterns within the inputs, as well as the relationships between arbitrary query locations and the inputs. In this work, we present an attention-based framework for data-driven operator learning, which we term Operator Transformer (OFormer). Our framework is built upon self-attention, cross-attention, and a set of point-wise multilayer perceptrons (MLPs), and thus makes few assumptions about the sampling pattern of the input function or the query locations. We show that the proposed framework is competitive on standard benchmark problems and can be flexibly adapted to randomly sampled input.
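To make the roles of the three building blocks concrete, the following is a minimal NumPy sketch of how self-attention, cross-attention, and point-wise MLPs could be combined to map a sampled input function to values at arbitrary query locations. It is not the authors' implementation: the single-layer layout, the standard softmax attention, the latent width `d`, the random untrained weights, and all names (`mlp`, `attention`, `forward`, `params`) are assumptions made purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def mlp(x, w1, w2):
    """Point-wise two-layer MLP: each row (point) is processed independently."""
    return np.maximum(x @ w1, 0.0) @ w2

def attention(q, k, v):
    """Scaled dot-product attention with a row-wise softmax (an assumed choice)."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v

def init(shape):
    """Random, untrained weights; a real model would learn these."""
    return rng.normal(scale=shape[0] ** -0.5, size=shape)

d = 16  # hypothetical latent width
params = {
    "enc": (init((2, d)), init((d, d))),  # embeds (x, u(x)) pairs
    "qry": (init((1, d)), init((d, d))),  # embeds query coordinates
    "dec": (init((d, d)), init((d, 1))),  # maps latent features to a scalar
    "self_qkv": [init((d, d)) for _ in range(3)],
    "cross_qkv": [init((d, d)) for _ in range(3)],
}

def forward(x_in, u_in, x_query, p):
    """x_in, u_in: (n, 1) sample locations and values; x_query: (m, 1)."""
    # Encode every input sample independently, then mix them by self-attention.
    h = mlp(np.concatenate([x_in, u_in], axis=-1), *p["enc"])
    wq, wk, wv = p["self_qkv"]
    h = h + attention(h @ wq, h @ wk, h @ wv)
    # Each query location attends to the encoded input samples.
    z = mlp(x_query, *p["qry"])
    wq, wk, wv = p["cross_qkv"]
    z = z + attention(z @ wq, h @ wk, h @ wv)
    # Decode each query point independently.
    return mlp(z, *p["dec"])

# Randomly sampled (non-grid) input points and a separate set of query points.
x_in = rng.uniform(0.0, 1.0, size=(50, 1))
u_in = np.sin(2 * np.pi * x_in)
x_query = np.linspace(0.0, 1.0, 7).reshape(-1, 1)
out = forward(x_in, u_in, x_query, params)
print(out.shape)
```

Nothing in this sketch depends on the ordering or spacing of the input points: coordinates enter only as features, so shuffling the input samples leaves the prediction at each query location unchanged up to floating-point error. Convolution on a fixed grid does not have this property.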
