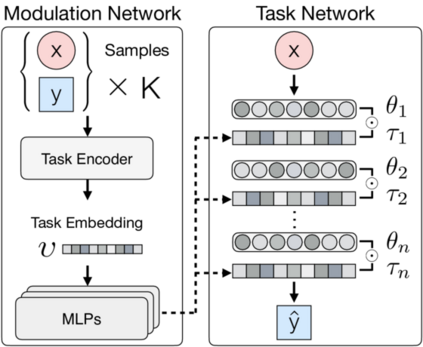

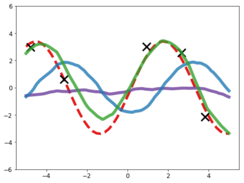

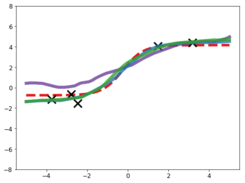

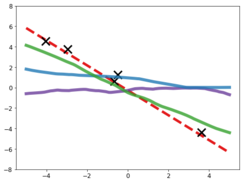

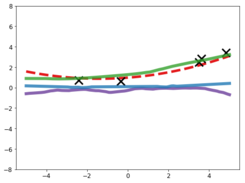

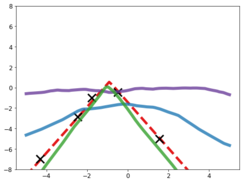

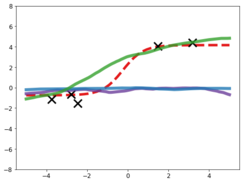

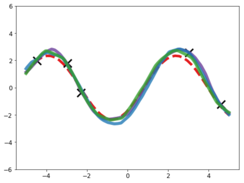

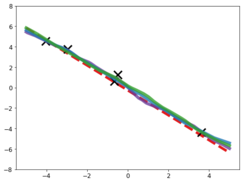

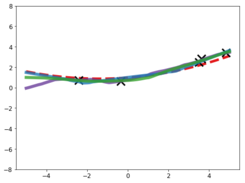

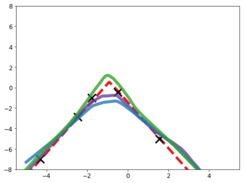

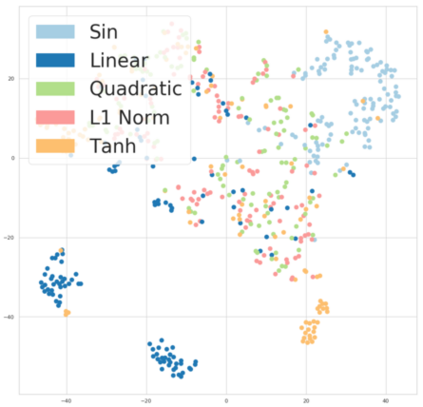

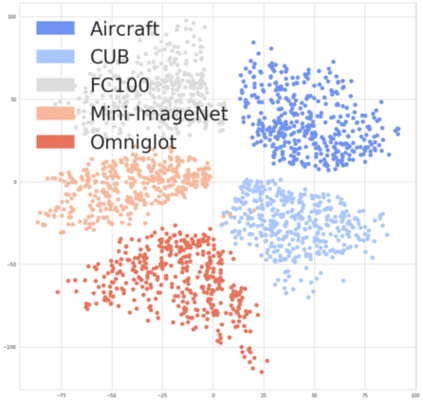

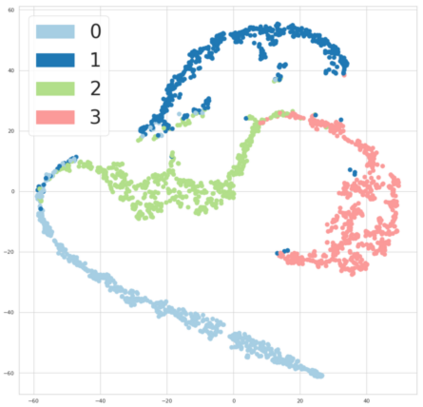

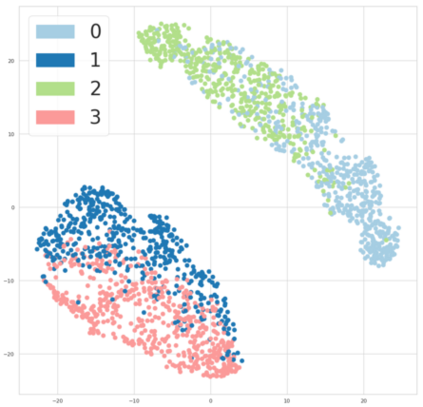

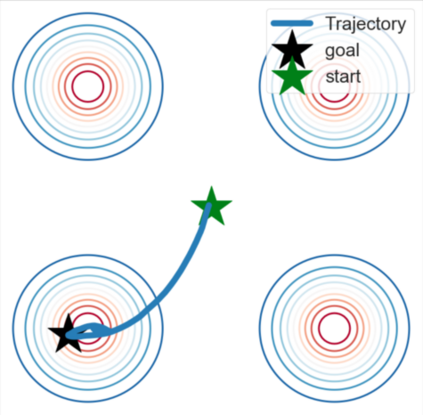

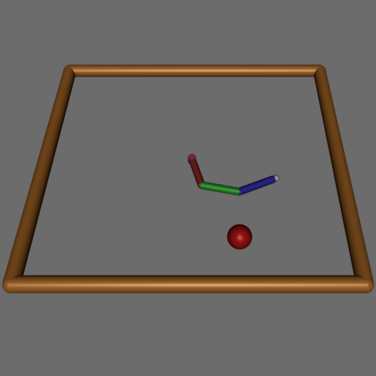

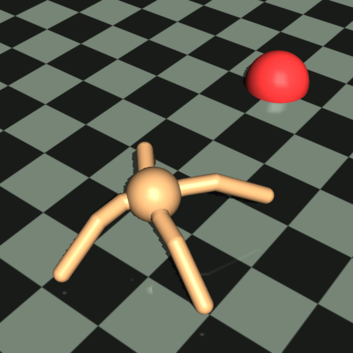

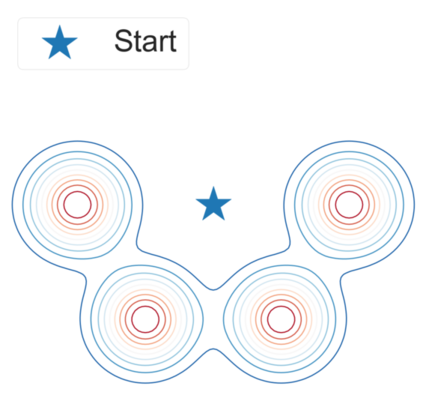

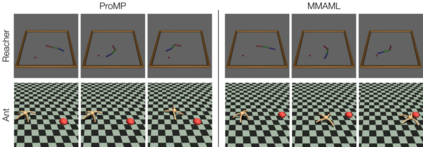

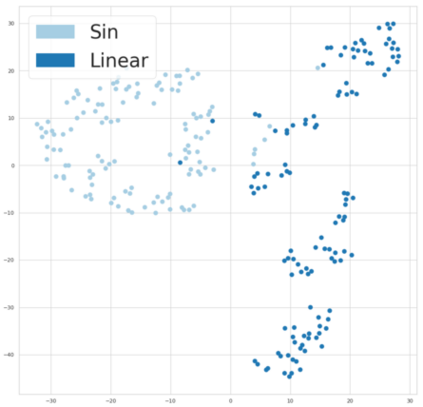

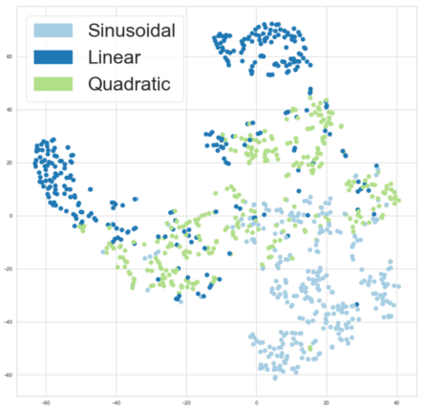

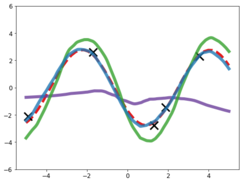

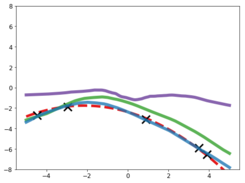

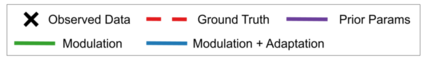

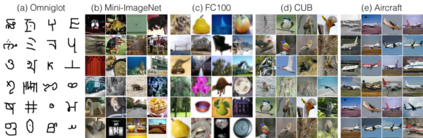

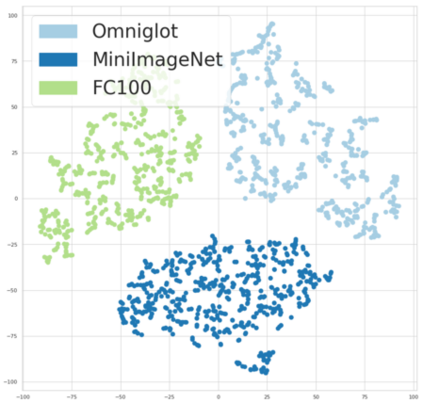

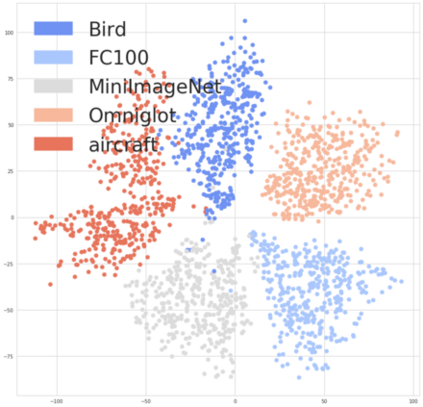

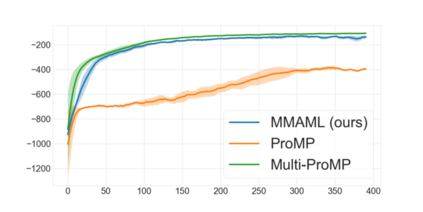

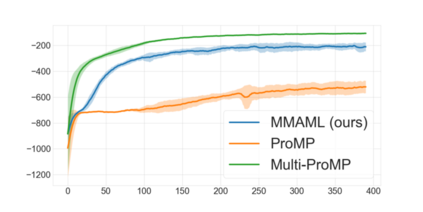

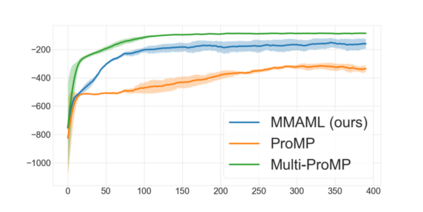

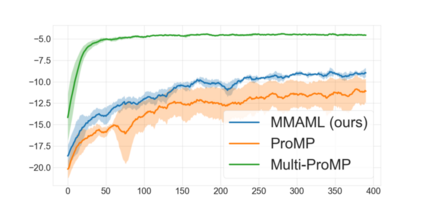

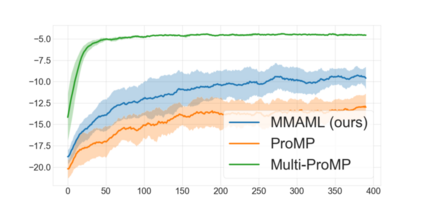

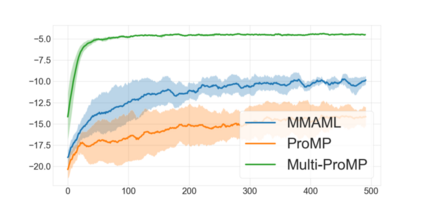

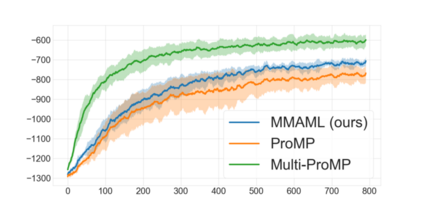

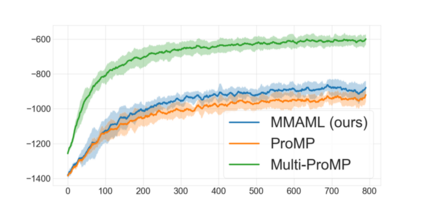

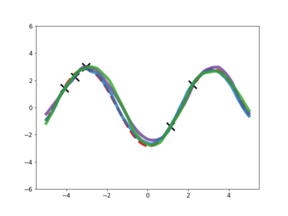

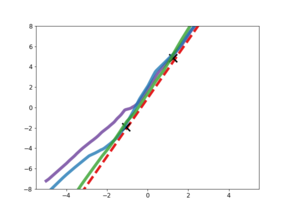

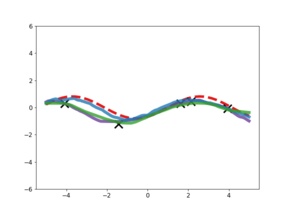

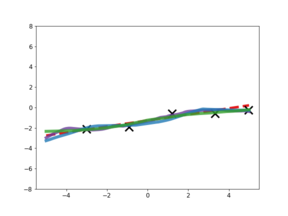

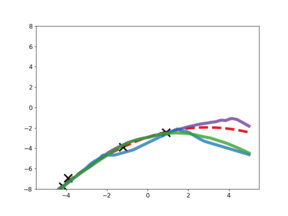

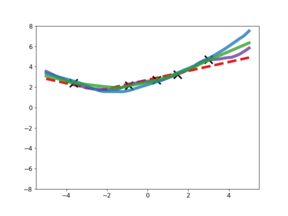

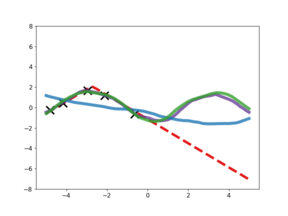

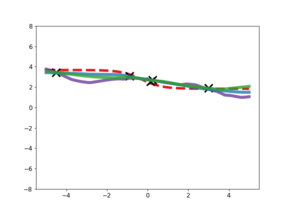

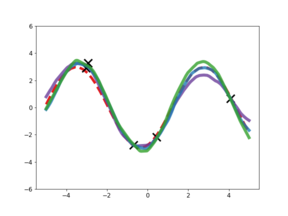

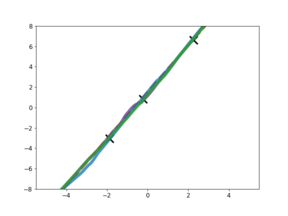

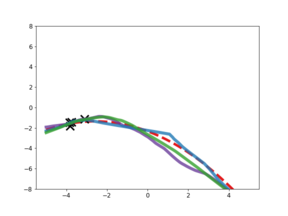

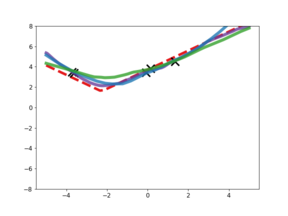

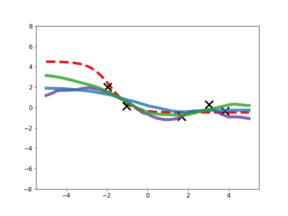

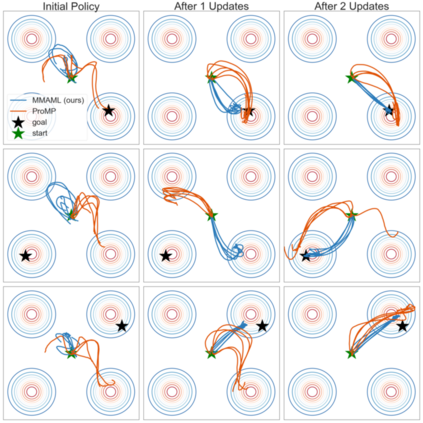

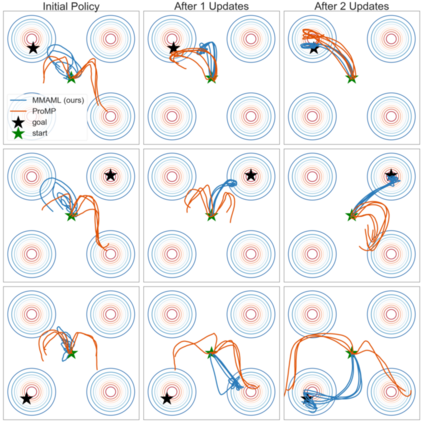

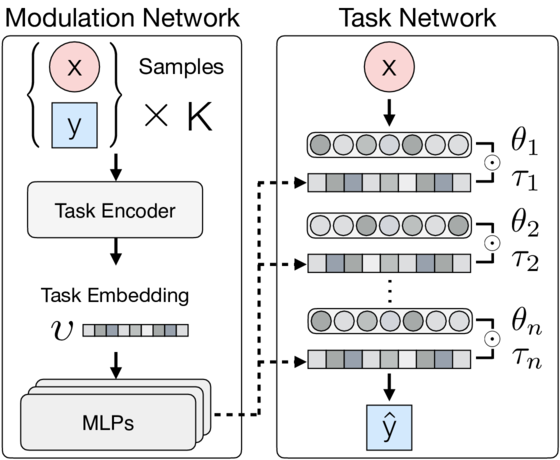

Model-agnostic meta-learners aim to acquire meta-learned parameters from similar tasks so that they can adapt to novel tasks from the same distribution with only a few gradient updates. Because they place few constraints on the choice of model, these frameworks perform well across a variety of domains, such as few-shot image classification and reinforcement learning. However, one important limitation is that they seek a single initialization shared across the entire task distribution, which substantially limits the diversity of the task distributions they can learn from. In this paper, we augment model-agnostic meta-learning (MAML) with the capability to identify the mode of tasks sampled from a multimodal task distribution and to adapt quickly through gradient updates. Specifically, we propose a multimodal MAML (MMAML) framework, which modulates its meta-learned prior parameters according to the identified mode, allowing more efficient fast adaptation. We evaluate the proposed model on a diverse set of few-shot learning tasks, including regression, image classification, and reinforcement learning. The results not only demonstrate the effectiveness of our model in modulating the meta-learned prior in response to the characteristics of tasks but also show that training on a multimodal distribution can improve on unimodal training.
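The idea of modulating a shared prior before gradient-based adaptation can be illustrated with a toy linear-regression sketch. This is not the implementation from the paper: the scale-and-shift form of `modulate`, the hand-written `modes` table, and the loss-based `identify_mode` rule are all assumptions made for illustration, standing in for components that would normally be learned.

```python
import numpy as np


def modulate(theta, gamma, beta):
    # Assumed scale-and-shift modulation of the shared prior; the abstract
    # does not specify how modulation is implemented.
    return gamma * theta + beta


def mse(w, x, y):
    # Mean squared error of the linear model y_hat = x @ w.
    return float(np.mean((x @ w - y) ** 2))


def mse_grad(w, x, y):
    # Gradient of the mean squared error with respect to w.
    return 2.0 * x.T @ (x @ w - y) / len(y)


def adapt(w, x, y, lr=0.05, steps=5):
    # A few plain gradient steps on the support set (MAML-style inner loop).
    for _ in range(steps):
        w = w - lr * mse_grad(w, x, y)
    return w


def identify_mode(theta, modes, x, y):
    # Hypothetical stand-in for a learned mode identifier: pick the mode whose
    # modulated prior already fits the support set best.
    return min(modes, key=lambda m: mse(modulate(theta, *modes[m]), x, y))


rng = np.random.default_rng(0)
theta = np.array([1.0, 1.0])  # shared prior (illustrative values)
modes = {  # hypothetical per-mode (gamma, beta) modulation vectors
    "A": (np.array([2.0, 2.0]), np.zeros(2)),
    "B": (np.array([-2.0, -2.0]), np.zeros(2)),
}

# A small support set for a task drawn from mode "B".
x = rng.normal(size=(10, 2))
y = x @ np.array([-2.1, -1.9])

mode = identify_mode(theta, modes, x, y)
loss_plain = mse(adapt(theta, x, y), x, y)
loss_modulated = mse(adapt(modulate(theta, *modes[mode]), x, y), x, y)
print(mode, loss_plain, loss_modulated)
```

With the same number of gradient steps, starting from the modulated prior leaves a much smaller support loss than starting from the unmodulated one, which is the intuition behind adapting from a mode-specific initialization rather than a single shared one.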
