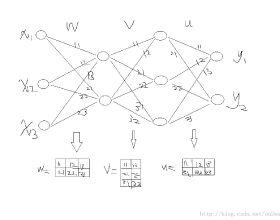

Top-down connections in the biological brain have been shown to be important for higher cognitive functions. However, the role of this mechanism in machine learning has not been clearly defined. In this study, we propose a framework consisting of a bottom-up network and a top-down network. We use a Top-down Credit Assignment Network (TDCA-network) to replace the loss function and backpropagation (BP), which serve as the feedback mechanism in the traditional bottom-up network training paradigm. Our results show that the credit given by a well-trained TDCA-network outperforms the gradient from backpropagation in classification tasks under different settings on multiple datasets. In addition, we successfully use a credit diffusing trick, which leaves training and testing performance unchanged, to reduce the parameter complexity of the TDCA-network. More importantly, by comparing their trajectories in the parameter landscape, we find that the TDCA-network directly reaches a global optimum, whereas backpropagation reaches only a local optimum. Thus, our results demonstrate that the TDCA-network not only provides a biologically plausible learning mechanism but also has the potential to directly reach a global optimum, indicating that top-down credit assignment can substitute for backpropagation and provide a better learning framework for deep neural networks.
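
To make the idea of replacing the loss function and backpropagation more concrete, the following is a minimal sketch of the interface only, not the architecture used in this work. It assumes a one-layer bottom-up classifier and a hypothetical linear top-down credit network; the abstract does not say how the TDCA-network is trained, so its weights `C` are hard-coded here to reproduce the softmax cross-entropy signal. All function names and the toy data are illustrative assumptions.

```python
import math
import random


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def forward(W, x):
    """Bottom-up pass: one linear layer; W[k] is the weight row of class k."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def top_down_credit(C, probs, onehot):
    """Top-down pass (hypothetical): a linear map from the bottom-up output
    and the label to one credit value per output unit."""
    inp = probs + onehot
    return [sum(c * v for c, v in zip(row, inp)) for row in C]


def gradient_like_credit_weights(n):
    """Assumption: fixed weights [I, -I], so credit = probs - onehot.
    A trained top-down network would take the place of this matrix."""
    return [[1.0 if j == k else -1.0 if j == n + k else 0.0
             for j in range(2 * n)] for k in range(n)]


def train(data, n_classes, n_features, C, lr=0.5, epochs=20):
    W = [[0.0] * n_features for _ in range(n_classes)]
    for _ in range(epochs):
        for x, y in data:
            probs = softmax(forward(W, x))
            onehot = [1.0 if k == y else 0.0 for k in range(n_classes)]
            credit = top_down_credit(C, probs, onehot)
            # The credit is used where a loss gradient would be; no loss
            # value is computed and nothing is differentiated.
            for k in range(n_classes):
                for j in range(n_features):
                    W[k][j] -= lr * credit[k] * x[j]
    return W


def accuracy(W, data):
    hits = 0
    for x, y in data:
        logits = forward(W, x)
        hits += logits.index(max(logits)) == y
    return hits / len(data)


def make_toy_data(seed=0, n_per_class=20):
    """Two Gaussian clusters in 2D; the last input entry is a constant bias."""
    rng = random.Random(seed)
    data = []
    for label, center in enumerate([-1.0, 1.0]):
        for _ in range(n_per_class):
            x = [rng.gauss(center, 0.3), rng.gauss(center, 0.3), 1.0]
            data.append((x, label))
    rng.shuffle(data)
    return data


data = make_toy_data()
C = gradient_like_credit_weights(2)
W = train(data, n_classes=2, n_features=3, C=C)
print("training accuracy:", accuracy(W, data))
```

In this sketch the bottom-up update depends only on the credit vector, so a different top-down network can be swapped in by changing `C` or `top_down_credit` without touching `train`.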
