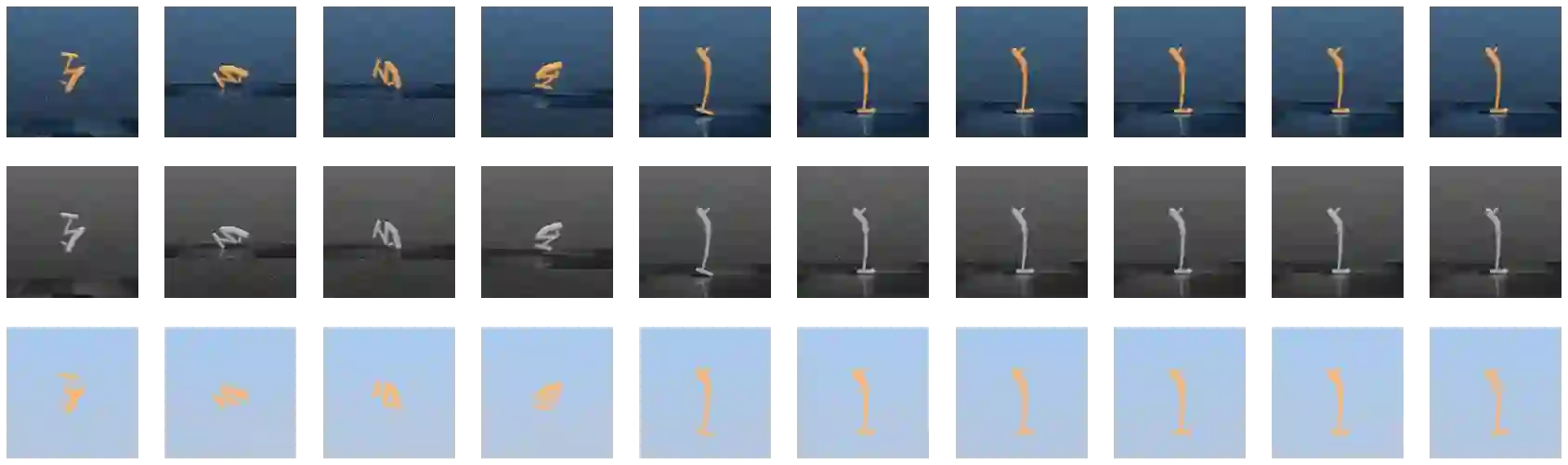

Imitation from observation (IfO) is a learning paradigm in which autonomous agents are trained in a Markov Decision Process (MDP) by observing expert demonstrations without access to the expert's actions. These demonstrations may be sequences of environment states or raw visual observations of the environment. Recent work in IfO has focused on observations of low-dimensional environment states; however, access to such highly specific observations is unlikely in practice. In this paper, we adopt a challenging but more realistic problem formulation: learning control policies that operate on a learned latent space, with access only to visual demonstrations of an expert completing a task. We present BootIfOL, an IfO algorithm that aims to learn a reward function that takes an agent trajectory and compares it to an expert trajectory, providing rewards based on similarity to the expert's behavior and implicit goal. We treat this reward function as a distance metric between trajectories of agent behavior and learn it via contrastive learning. The contrastive learning objective aims to represent expert trajectories close to one another and to distance them from non-expert trajectories. The set of non-expert trajectories used in contrastive learning is made progressively more complex by bootstrapping from roll-outs of the agent trained through RL with the current reward function. We evaluate our approach on a variety of control tasks, showing that we can train effective policies using a limited number of demonstration trajectories and greatly improving on prior approaches that consider raw observations.
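
To make the idea of a contrastively learned, distance-based reward more concrete, the following is a minimal sketch and not the BootIfOL implementation. The abstract does not say which contrastive objective is used, so a triplet margin loss is assumed here purely for illustration. The names `embed_trajectory`, `distance`, `triplet_loss`, and `reward` are hypothetical, and the mean-pooling "encoder" is a placeholder for a learned trajectory encoder operating on latent states.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def embed_trajectory(trajectory: Sequence[Vector]) -> List[float]:
    """Hypothetical stand-in for a learned trajectory encoder.

    Assumption: each step is already a latent vector; we simply average them.
    """
    n = len(trajectory)
    dim = len(trajectory[0])
    return [sum(step[i] for step in trajectory) / n for i in range(dim)]


def distance(a: Vector, b: Vector) -> float:
    """Euclidean distance between two trajectory embeddings."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor: Vector, positive: Vector, negative: Vector,
                 margin: float = 1.0) -> float:
    """Assumed contrastive objective: pull expert embeddings together and
    push non-expert embeddings at least `margin` further away."""
    return max(0.0, distance(anchor, positive) - distance(anchor, negative) + margin)


def reward(agent_traj: Sequence[Vector], expert_traj: Sequence[Vector]) -> float:
    """Reward as negative embedding distance: closer to the expert is better."""
    return -distance(embed_trajectory(agent_traj), embed_trajectory(expert_traj))


# Toy latent trajectories (made-up numbers, for illustration only).
expert_a = [[1.0, 0.0], [1.0, 0.2]]
expert_b = [[0.9, 0.1], [1.1, 0.1]]
non_expert = [[-1.0, 0.5], [-0.8, 0.7]]

e_a, e_b, e_n = (embed_trajectory(t) for t in (expert_a, expert_b, non_expert))
print(triplet_loss(e_a, e_b, e_n))  # loss when the embeddings are already well separated
print(reward(expert_b, expert_a), reward(non_expert, expert_a))
```

In a bootstrapped setup such as the one described above, the non-expert set would be extended over time with roll-outs of the current agent, so that the negatives become harder as the policy improves; that loop is omitted from this sketch.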
