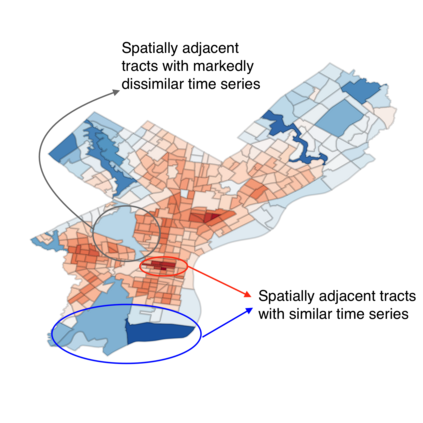

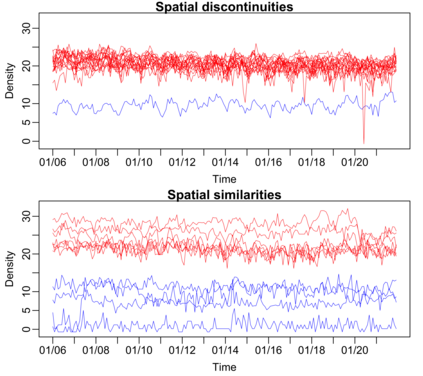

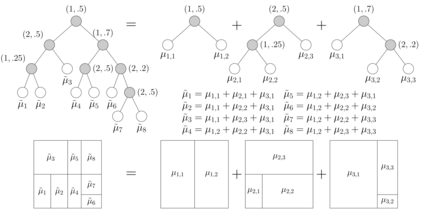

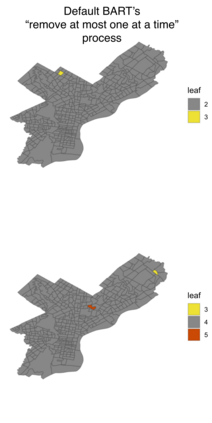

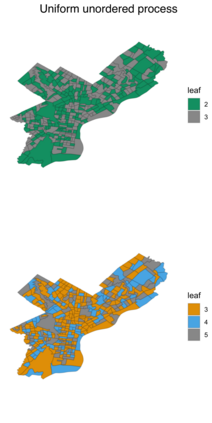

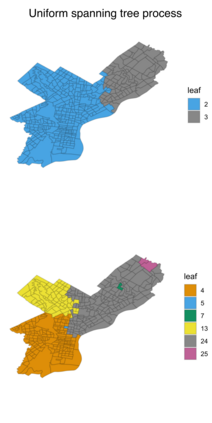

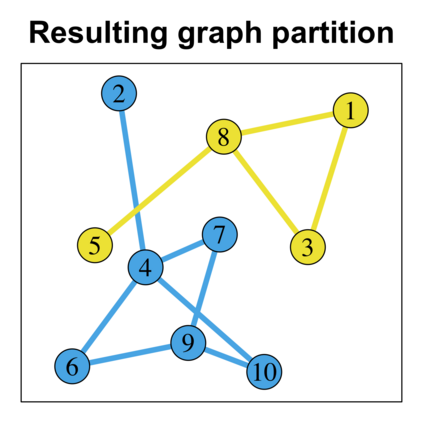

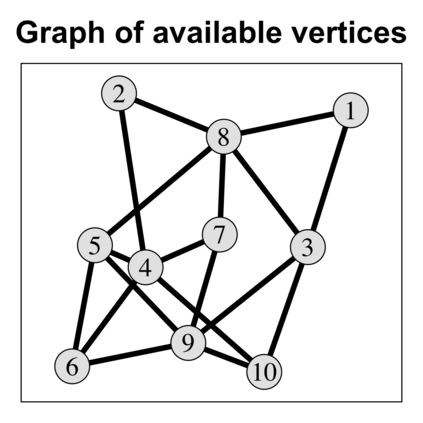

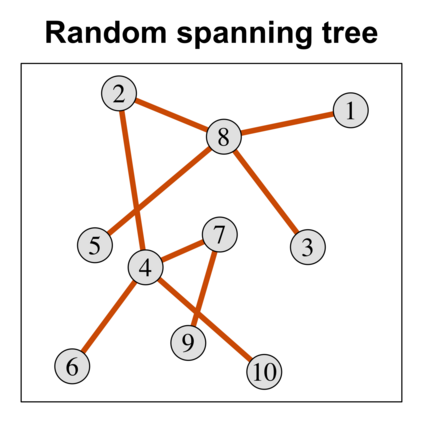

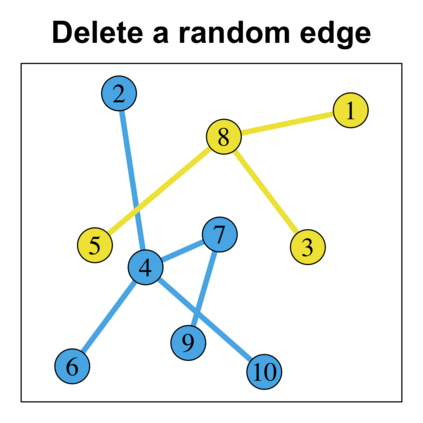

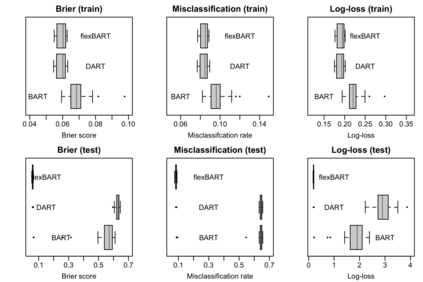

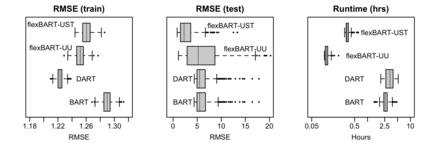

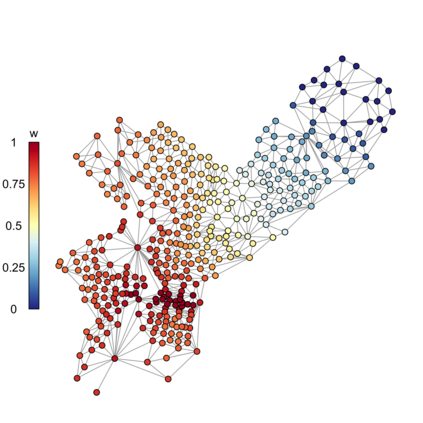

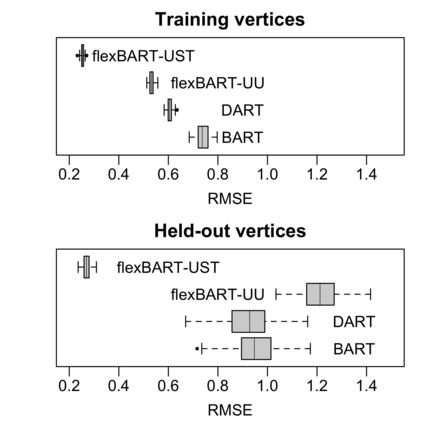

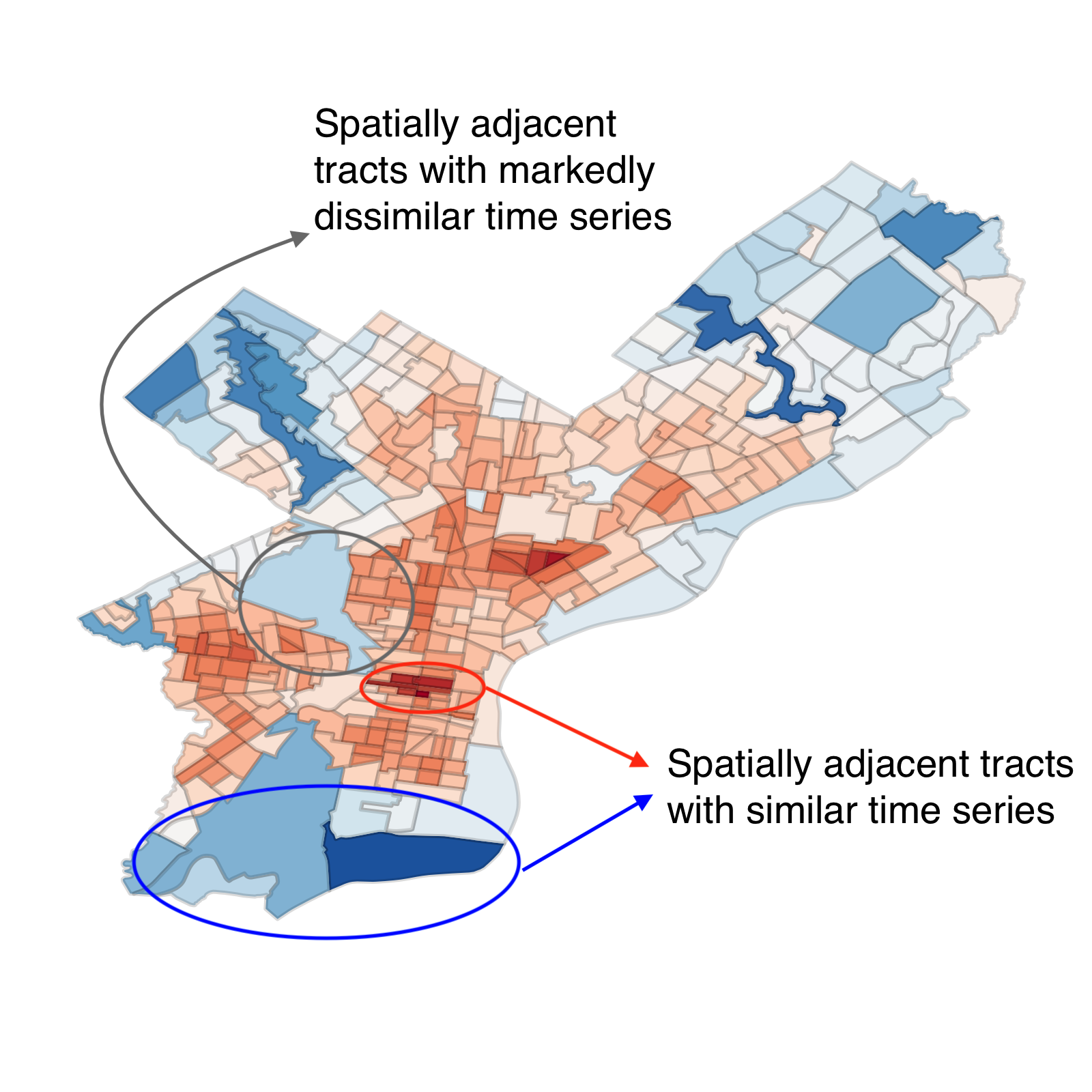

Default implementations of Bayesian Additive Regression Trees (BART) represent categorical predictors using several binary indicators, one for each level of each categorical predictor. Regression trees built with these indicators partition the levels using a "remove one at a time" strategy. Unfortunately, the vast majority of partitions of the levels cannot be built with this strategy, severely limiting BART's ability to "borrow strength" across groups of levels. We overcome this limitation with a new class of regression trees and a new decision rule prior that can assign multiple levels to both the left and right child of a decision node. Motivated by spatial applications with areal data, we introduce a further decision rule prior that partitions the areas into spatially contiguous regions by deleting edges from random spanning trees of a suitably defined network. We implemented our new regression tree priors in the flexBART package, which, compared to existing implementations, often yields improved out-of-sample predictive performance without much additional computational burden. We demonstrate the efficacy of flexBART using examples from baseball and the spatiotemporal modeling of crime.
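The two kinds of decision rule described above can be illustrated with a minimal Python sketch. This is not flexBART's code or API: the function names (`split_levels`, `random_spanning_tree`, `split_contiguous`) are hypothetical, the fair coin flip per level is an assumed stand-in for the paper's decision rule prior, and the spanning tree is drawn by running Kruskal's algorithm on randomly shuffled edges, which is a simple choice that does not sample uniformly over spanning trees.

```python
import random


def split_levels(levels, rng):
    """Send each categorical level left or right with a fair coin flip
    (an assumption), retrying until both children are non-empty."""
    levels = list(levels)
    if len(levels) < 2:
        raise ValueError("need at least two levels to split")
    while True:
        left = {lv for lv in levels if rng.random() < 0.5}
        if 0 < len(left) < len(levels):
            return left, set(levels) - left


def random_spanning_tree(nodes, edges, rng):
    """Spanning tree of a connected graph via Kruskal's algorithm on
    randomly shuffled edges (not uniform over spanning trees)."""
    parent = {v: v for v in nodes}

    def find(v):
        # Union-find root lookup with path halving.
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    shuffled = list(edges)
    rng.shuffle(shuffled)
    tree = []
    for u, v in shuffled:
        ru, rv = find(u), find(v)
        if ru != rv:  # edge joins two components, so keep it
            parent[ru] = rv
            tree.append((u, v))
    return tree


def split_contiguous(nodes, edges, rng):
    """Split the areas of a connected adjacency graph (two or more nodes)
    into two contiguous regions by deleting one spanning-tree edge."""
    tree = random_spanning_tree(nodes, edges, rng)
    cut = rng.choice(tree)
    adj = {v: [] for v in nodes}
    for u, v in tree:
        if (u, v) != cut:
            adj[u].append(v)
            adj[v].append(u)
    # Collect everything still reachable from one endpoint of the cut edge.
    left, stack = set(), [cut[0]]
    while stack:
        v = stack.pop()
        if v not in left:
            left.add(v)
            stack.extend(adj[v])
    return left, set(nodes) - left


if __name__ == "__main__":
    rng = random.Random(0)
    print(split_levels(["A", "B", "C", "D", "E"], rng))
    # A 2x3 grid of areas; edges join areas that share a border.
    grid_edges = [(0, 1), (1, 2), (3, 4), (4, 5), (0, 3), (1, 4), (2, 5)]
    print(split_contiguous(range(6), grid_edges, rng))
```

Deleting one edge from a spanning tree leaves two subtrees, and each subtree is connected using only edges of the original network, so both regions are contiguous by construction. In contrast, a split on a single binary indicator can only separate one level from all the others.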
