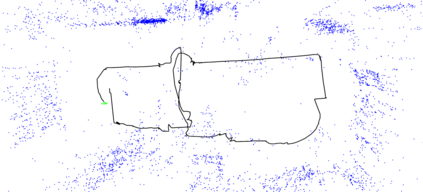

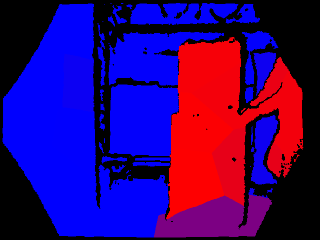

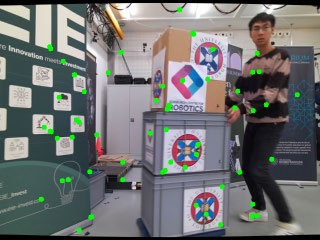

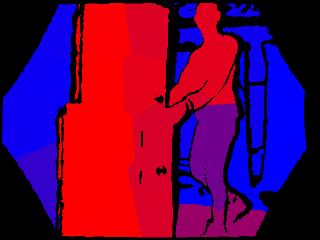

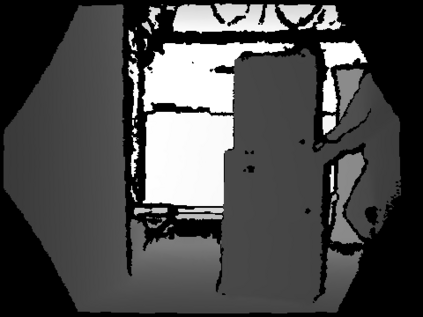

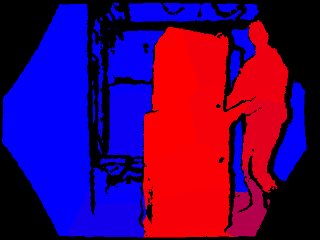

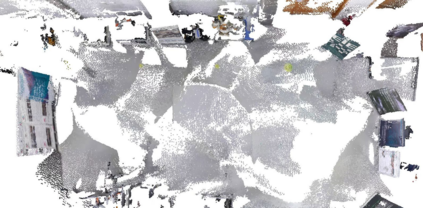

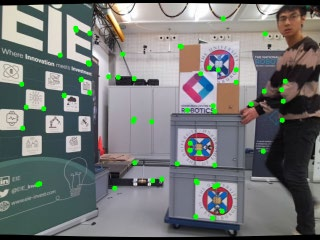

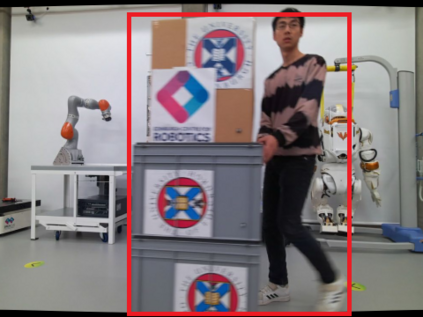

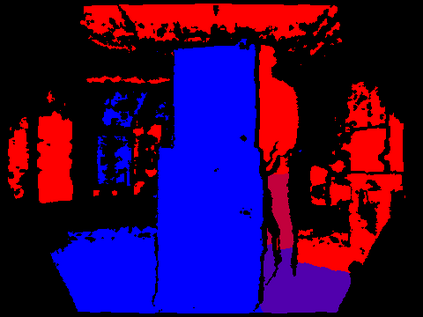

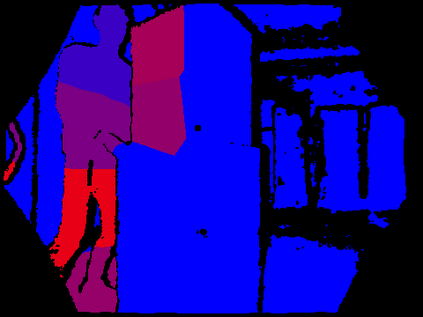

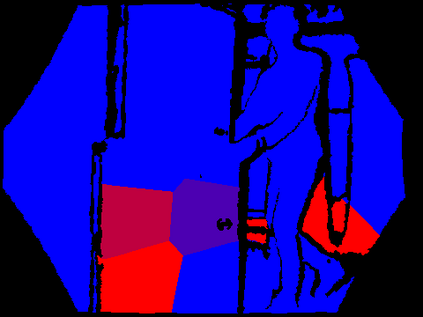

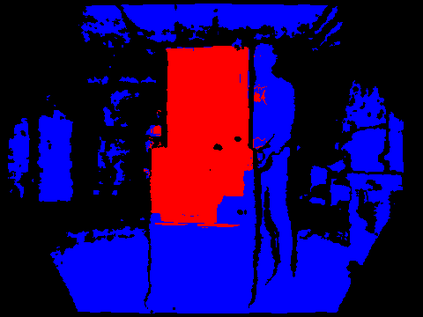

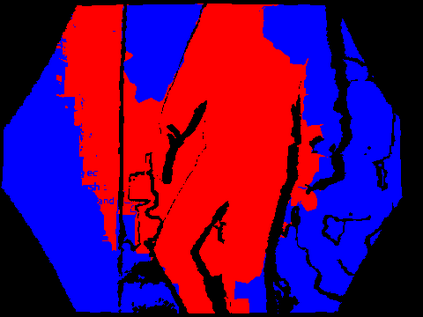

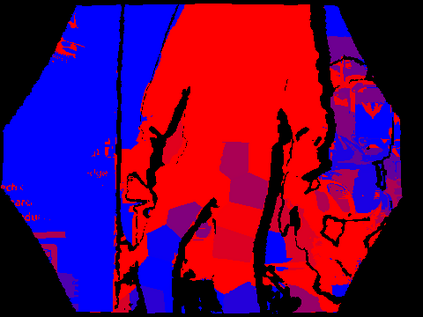

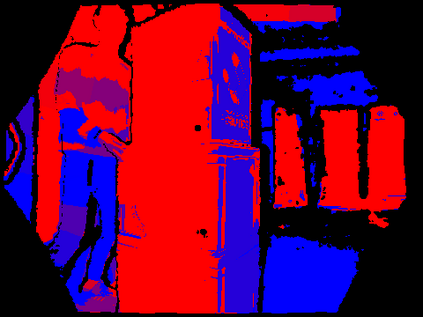

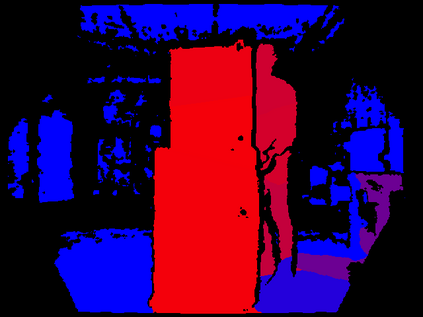

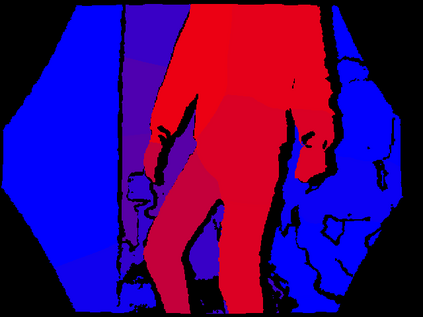

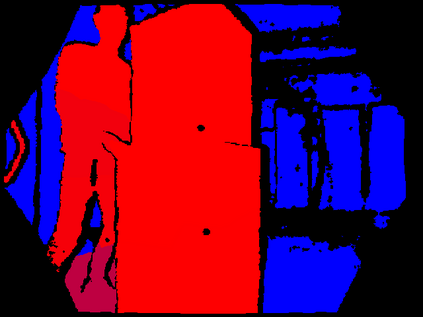

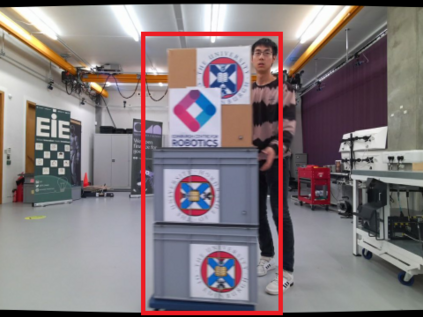

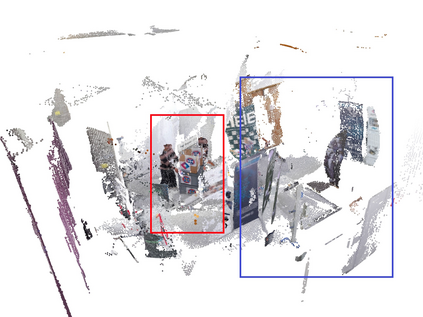

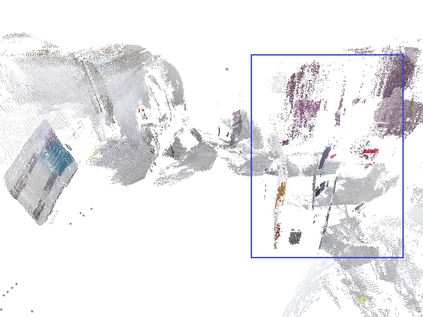

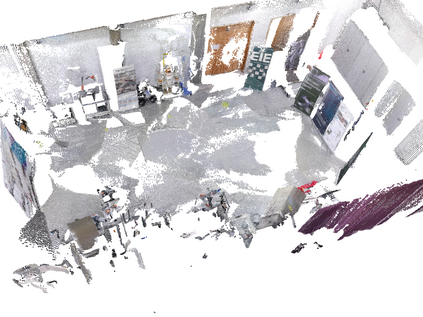

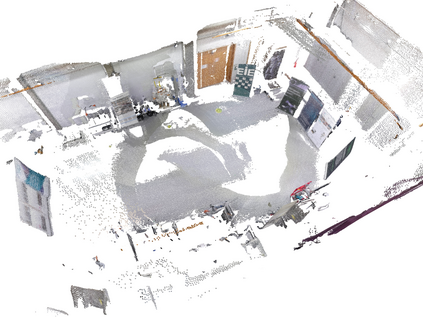

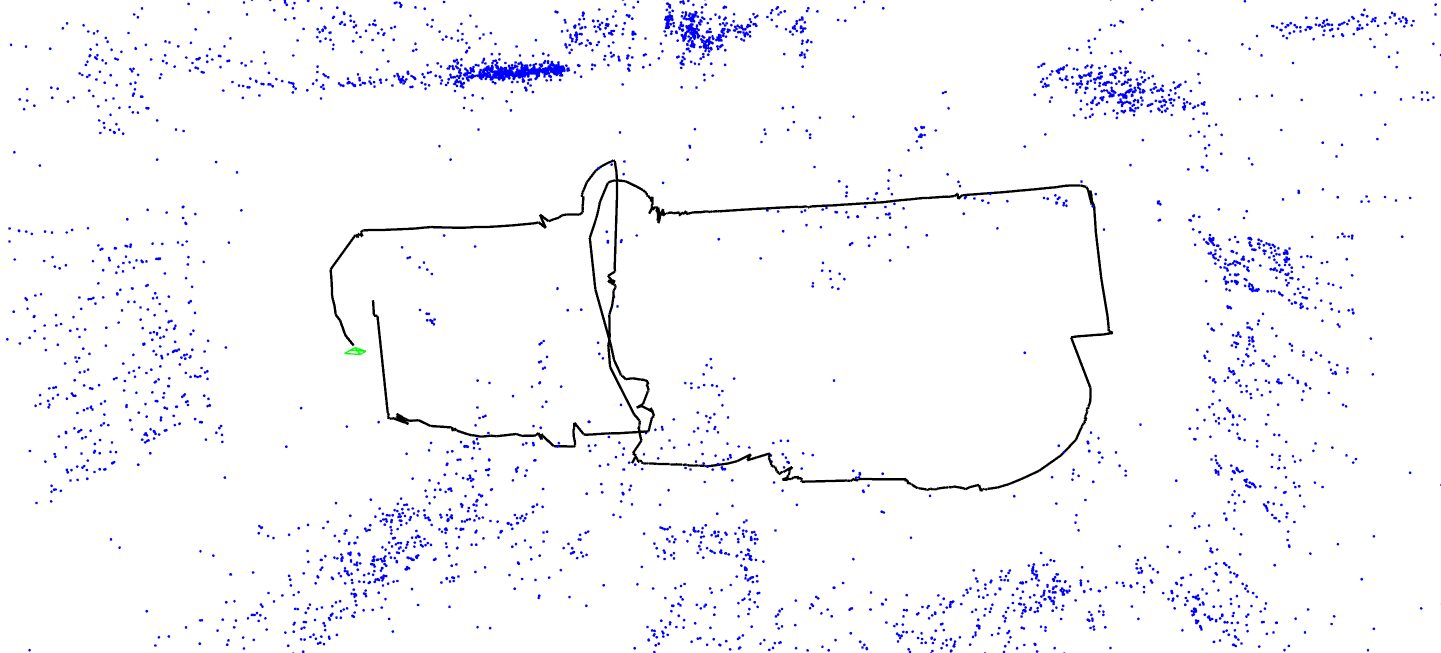

This work presents a novel RGB-D-inertial dynamic SLAM method that enables accurate localisation when the majority of the camera view is occluded by multiple dynamic objects over a long period of time. Most dynamic SLAM approaches either remove dynamic objects as outliers when they account for only a minor proportion of the visual input, or detect dynamic objects using semantic segmentation before camera tracking. As a result, dynamic objects that cause large occlusions are difficult to detect without prior information. Moreover, the visual information remaining from the static background is not enough to support localisation when a large occlusion lasts for a long period. To overcome these problems, our framework presents a robust visual-inertial bundle adjustment that simultaneously tracks the camera, estimates cluster-wise dense segmentation of dynamic objects, and maintains a static sparse map by combining dense and sparse features. The experimental results demonstrate that our method achieves promising localisation and object segmentation performance compared to other state-of-the-art methods in scenarios with long-term large occlusion.
