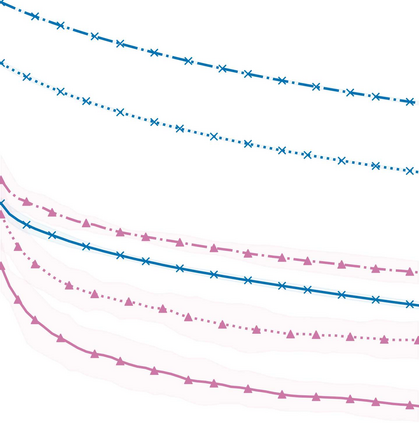

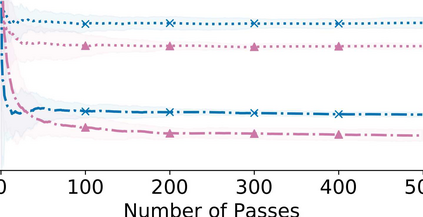

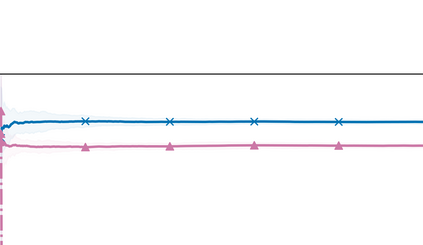

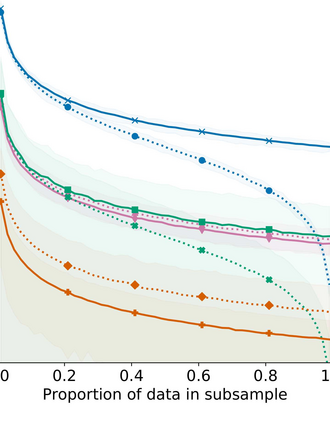

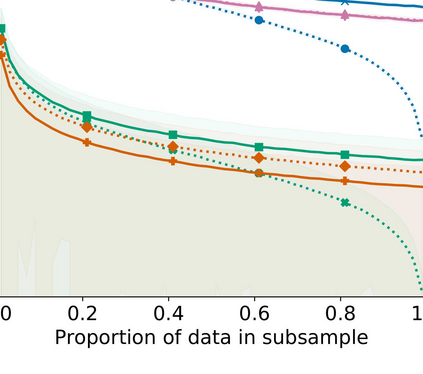

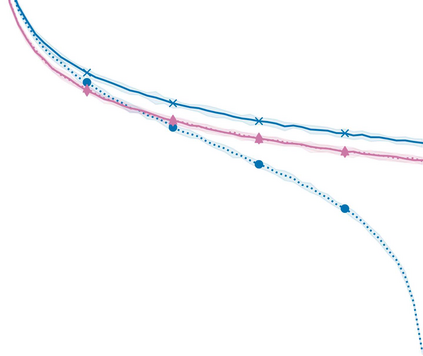

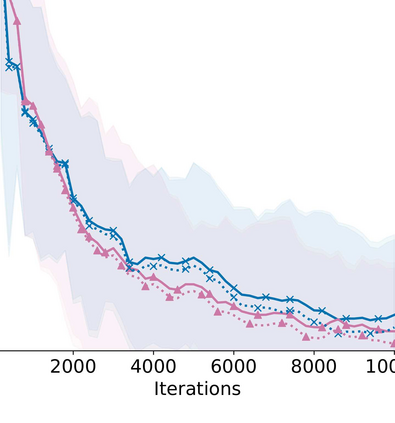

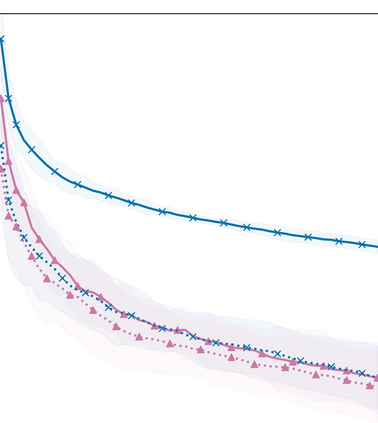

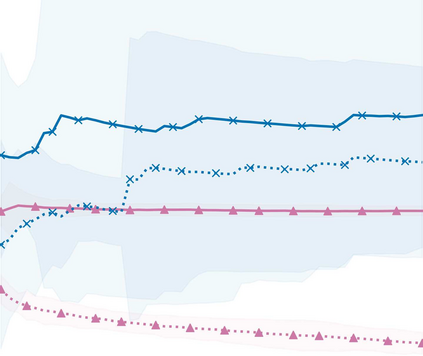

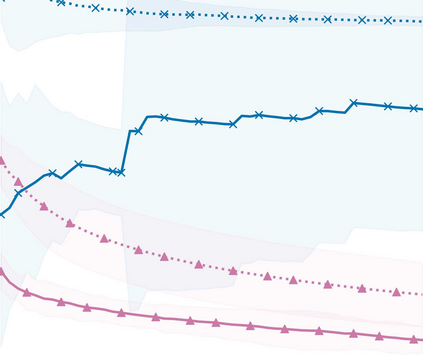

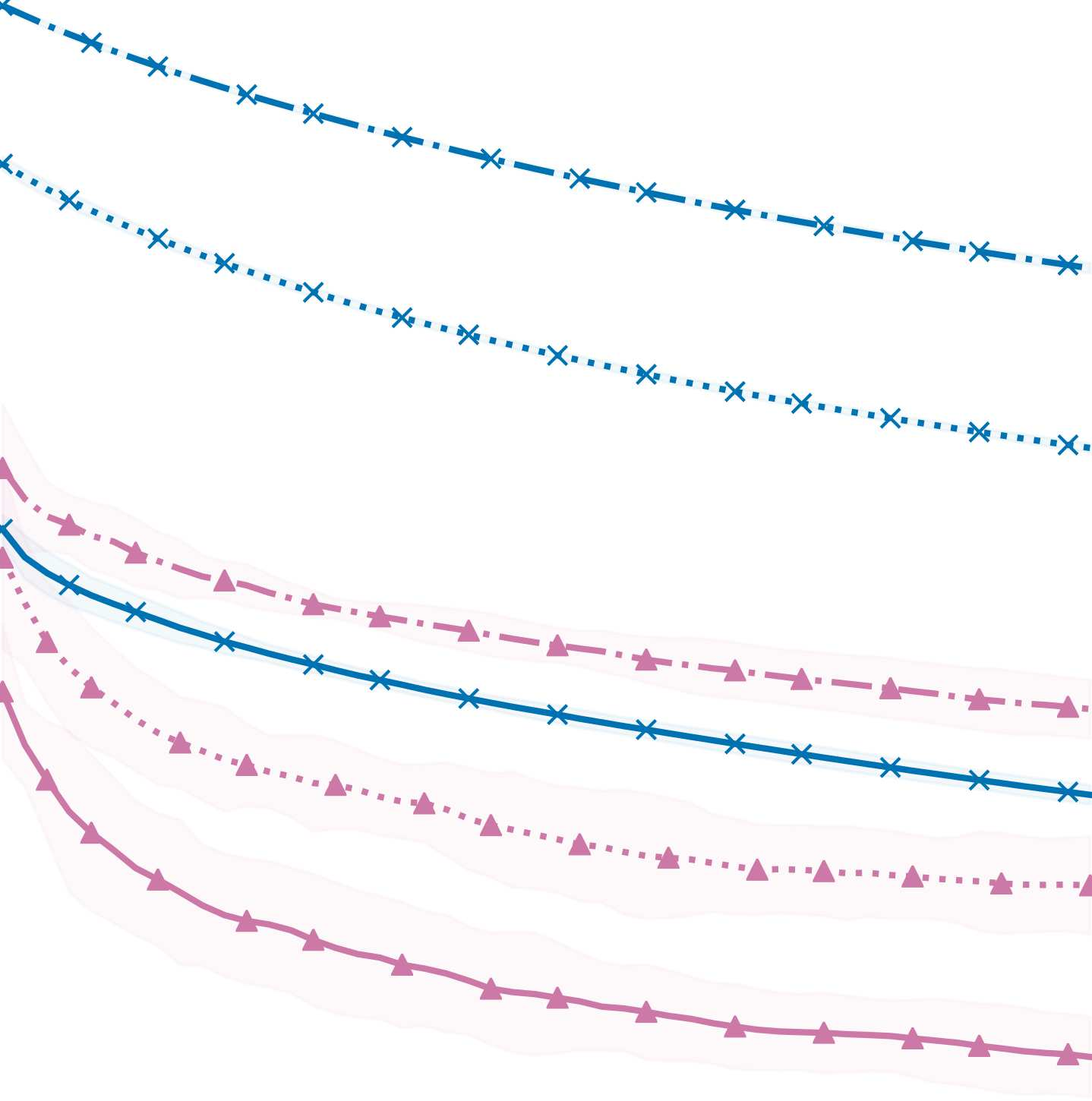

Stochastic gradient MCMC (SGMCMC) offers a scalable alternative to traditional MCMC by constructing an unbiased estimate of the gradient of the log-posterior from a small, uniformly weighted subsample of the data. While efficient to compute, the resulting gradient estimator may exhibit high variance, which can degrade sampler performance. Variance control has traditionally been addressed by constructing a better stochastic gradient estimator, often using control variates. We propose to use a discrete, non-uniform probability distribution to preferentially subsample data points that have a greater impact on the stochastic gradient. In addition, we present a method for adaptively adjusting the subsample size at each iteration of the algorithm, increasing it in regions of the sample space where the gradient is harder to estimate. We demonstrate that this approach can maintain the same level of accuracy while substantially reducing the average subsample size.
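To make the idea of non-uniform subsampling concrete, the following is a minimal sketch and not the authors' algorithm. It assumes a toy model (observations `y_i ~ N(theta, 1)` with a `N(0, 10^2)` prior), a stochastic gradient Langevin dynamics (SGLD) update, and sampling weights proportional to the magnitude of each per-datum gradient. All function names and the choice of weights are hypothetical and chosen only for illustration. The key point it shows is that dividing each sampled gradient by its sampling probability keeps the estimator unbiased for any strictly positive weights.

```python
import numpy as np


def grad_log_prior(theta, prior_sd=10.0):
    # Gradient of log N(theta | 0, prior_sd^2) with respect to theta.
    return -theta / prior_sd**2


def grad_log_lik(theta, y):
    # Per-datum gradient of log N(y_i | theta, 1) with respect to theta.
    return y - theta


def gradient_magnitude_probs(theta, y, floor=1e-12):
    # Assumed weighting scheme: probability proportional to |per-datum gradient|.
    # The small floor keeps every probability strictly positive.
    w = np.abs(grad_log_lik(theta, y)) + floor
    return w / w.sum()


def weighted_grad_estimate(theta, y, probs, n, rng):
    # Draw n indices with replacement from the discrete distribution `probs`,
    # then reweight each sampled gradient by 1 / p_i so that the average is an
    # unbiased estimate of the sum over all data points.
    idx = rng.choice(len(y), size=n, replace=True, p=probs)
    g = grad_log_lik(theta, y[idx])
    return grad_log_prior(theta) + np.mean(g / probs[idx])


def sgld_step(theta, y, probs, n, step, rng):
    # One SGLD update: half a step along the estimated gradient plus
    # Gaussian noise with variance equal to the step size.
    g_hat = weighted_grad_estimate(theta, y, probs, n, rng)
    return theta + 0.5 * step * g_hat + np.sqrt(step) * rng.normal()


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    y = rng.normal(loc=2.0, scale=1.0, size=1000)
    theta = 0.0
    for _ in range(2000):
        probs = gradient_magnitude_probs(theta, y)
        theta = sgld_step(theta, y, probs, n=10, step=1e-4, rng=rng)
    print("final theta:", theta)
```

Setting `probs` to the uniform vector `np.full(len(y), 1 / len(y))` recovers the standard uniformly weighted estimator. In this sketch the weights are recomputed at the current `theta`, which costs a full pass over the data; it therefore illustrates only the estimator itself, not an efficient way of obtaining the weights, and it does not cover the adaptive subsample size.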
