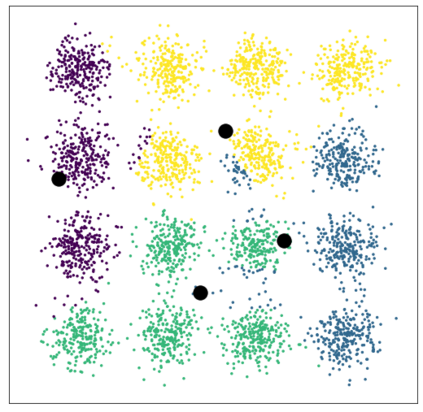

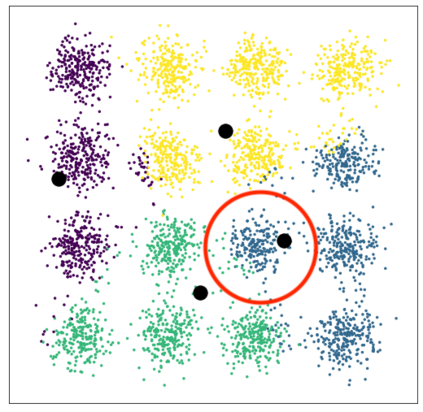

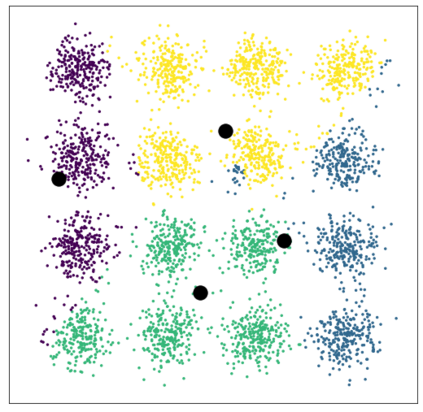

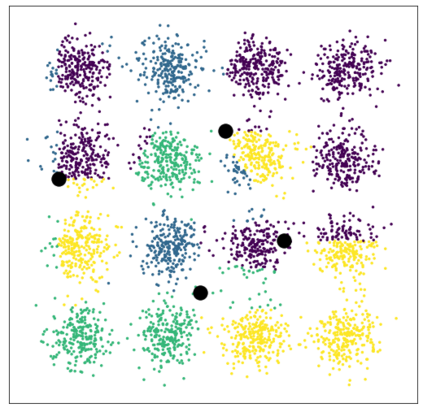

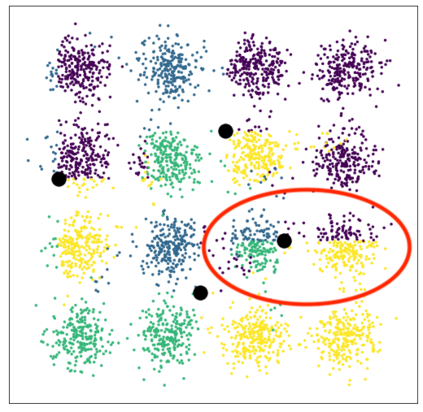

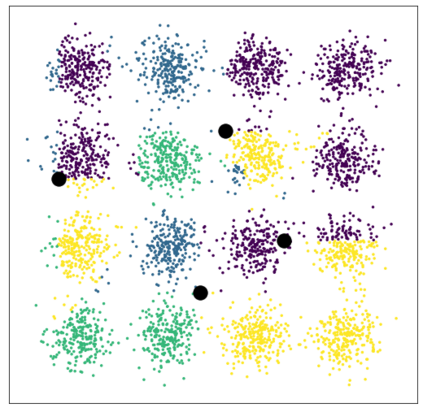

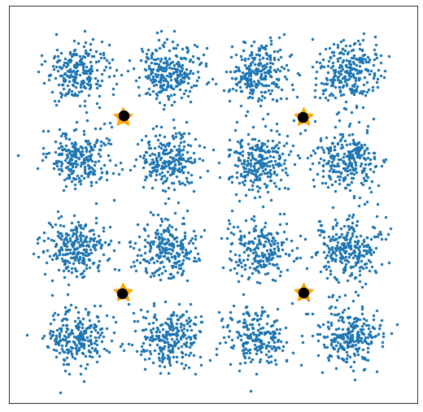

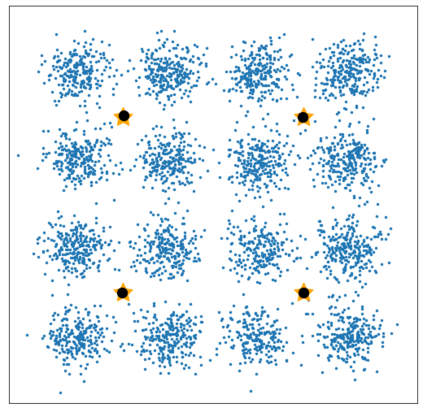

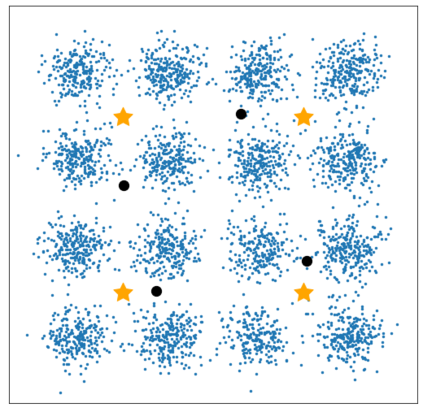

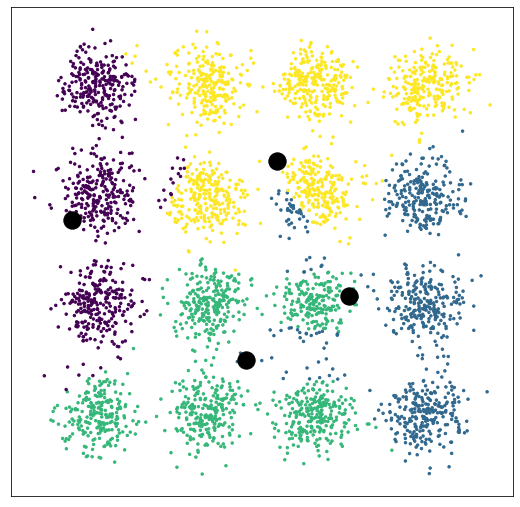

Latent variable models (LVMs) with discrete compositional latents are an important but challenging setting due to a combinatorially large number of possible configurations of the latents. A key tradeoff in modeling the posteriors over latents is between expressivity and tractable optimization. For algorithms based on expectation-maximization (EM), the E-step is often intractable without restrictive approximations to the posterior. We propose the use of GFlowNets, algorithms for sampling from an unnormalized density by learning a stochastic policy for sequential construction of samples, for this intractable E-step. By training GFlowNets to sample from the posterior over latents, we take advantage of their strengths as amortized variational inference algorithms for complex distributions over discrete structures. Our approach, GFlowNet-EM, enables the training of expressive LVMs with discrete compositional latents, as shown by experiments on non-context-free grammar induction and on images using discrete variational autoencoders (VAEs) without conditional independence enforced in the encoder.
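To make the phrase "sampling from an unnormalized density by learning a stochastic policy for sequential construction of samples" concrete, here is a minimal sketch on a toy problem. Everything in it is an assumption made for illustration and is not taken from the paper: the model (`log_joint`, three binary latents, a Gaussian-style likelihood), the weights, and all function names are hypothetical. It computes by brute-force enumeration the policy that an ideally trained sampler would have to match, and checks that building a latent bit by bit under that policy reproduces the exact posterior. In a realistic setting the enumeration is infeasible (the number of configurations grows as 2^n), which is why the policy would be learned and amortized rather than computed as it is here.

```python
import itertools
import math


def log_joint(x, z, weights):
    """Hypothetical unnormalized log p(x, z) for a binary latent tuple z.

    Uniform prior over bits, Gaussian-style likelihood of x around a
    weighted sum of the active bits. The bits are coupled through x,
    so the posterior does not factorize across them.
    """
    mean = sum(w * b for w, b in zip(weights, z))
    log_lik = -0.5 * (x - mean) ** 2
    log_prior = len(z) * math.log(0.5)
    return log_lik + log_prior


def completions_mass(x, prefix, n, weights):
    """Total unnormalized mass of all full latents that extend `prefix`."""
    rest = n - len(prefix)
    return sum(
        math.exp(log_joint(x, prefix + tail, weights))
        for tail in itertools.product((0, 1), repeat=rest)
    )


def ideal_policy_prob(x, z, weights):
    """Probability that the ideal sequential policy constructs z bit by bit.

    Each step picks the next bit in proportion to the mass of its
    completions; the product over steps telescopes to R(z) / Z.
    """
    n = len(z)
    prob = 1.0
    for i in range(n):
        prefix = z[:i]
        numer = completions_mass(x, prefix + (z[i],), n, weights)
        denom = completions_mass(x, prefix, n, weights)
        prob *= numer / denom
    return prob


def exact_posterior(x, n, weights):
    """Exact p(z | x) by enumerating all 2**n configurations (toy sizes only)."""
    configs = list(itertools.product((0, 1), repeat=n))
    masses = [math.exp(log_joint(x, z, weights)) for z in configs]
    total = sum(masses)
    return {z: m / total for z, m in zip(configs, masses)}


if __name__ == "__main__":
    weights = (1.0, -2.0, 0.5)  # illustrative values only
    posterior = exact_posterior(0.7, len(weights), weights)
    for z, p in posterior.items():
        print(z, round(p, 4), round(ideal_policy_prob(0.7, z, weights), 4))
```

In an EM loop, samples drawn from such a policy would stand in for the exact posterior in the E-step, and the M-step would then update the model parameters (here, `weights`) to increase the expected `log_joint` under those samples.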
