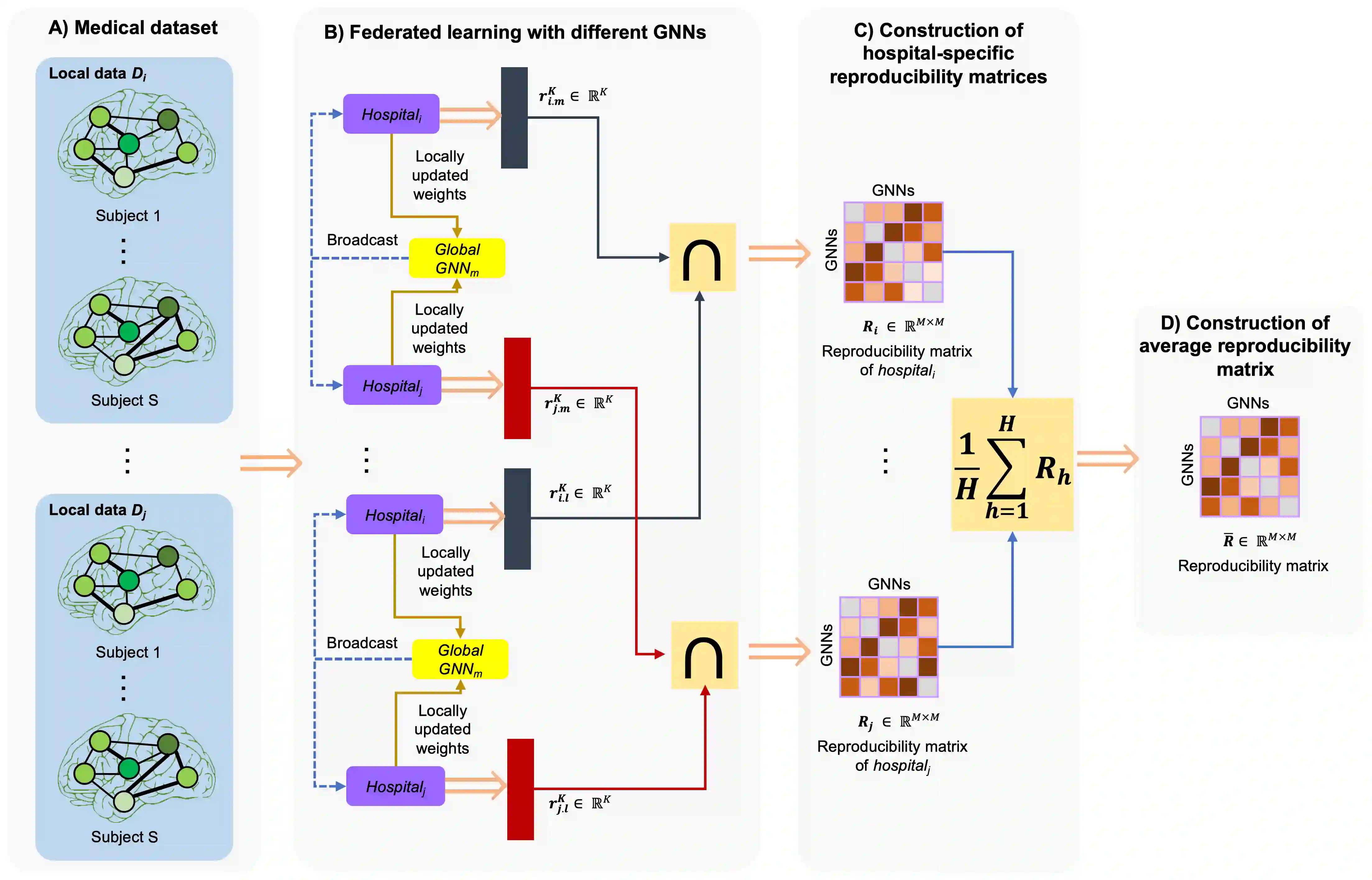

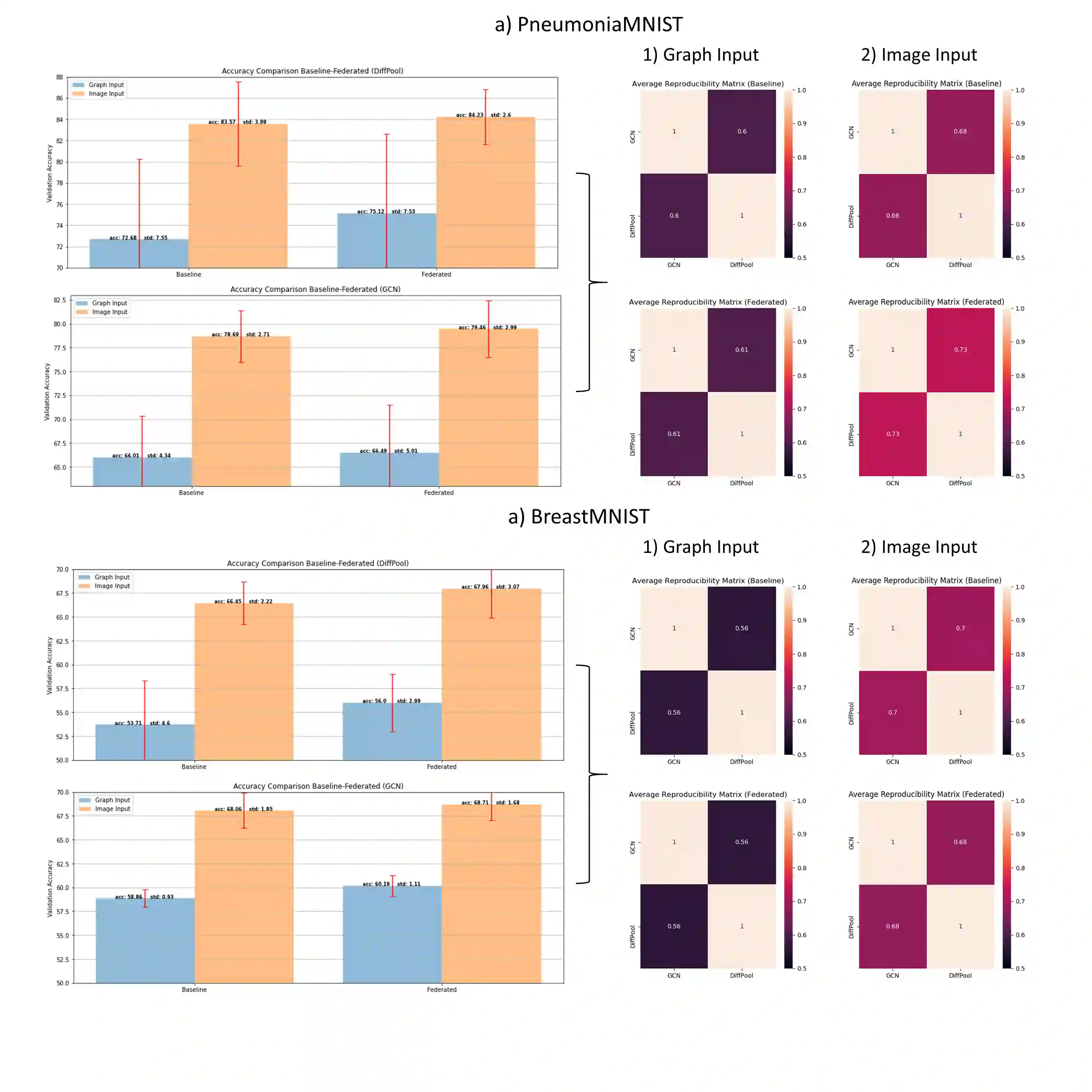

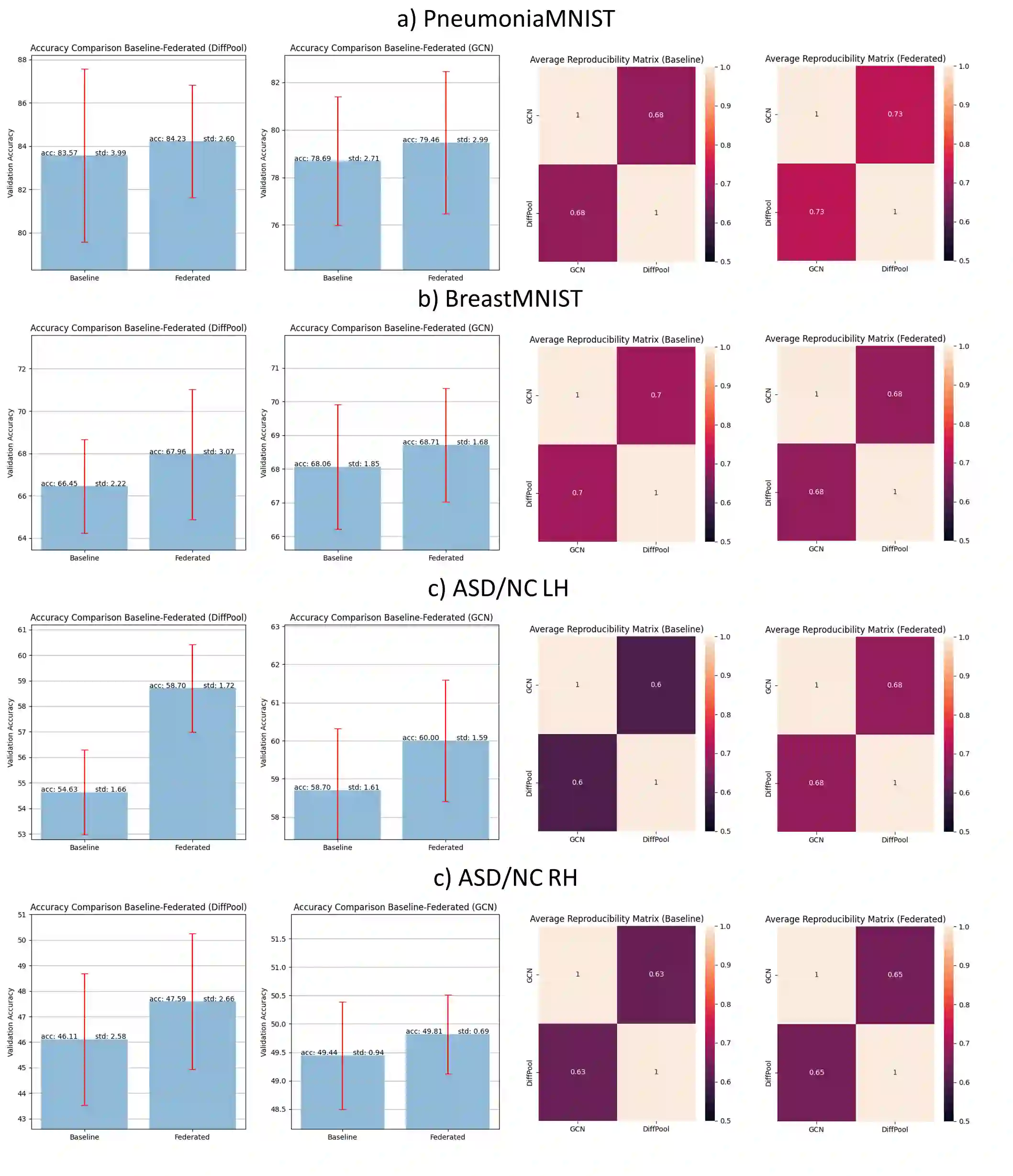

Graph neural networks (GNNs) have achieved remarkable results in various areas, including medical imaging and network neuroscience, where they have shown high accuracy in diagnosing challenging neurological disorders such as autism. Because medical data are scarce and subject to strict privacy requirements, training such data-hungry models remains challenging. Federated learning offers an efficient solution to this issue by allowing models to be trained on multiple datasets, collected independently by different hospitals, in a fully data-preserving manner. Although both state-of-the-art GNNs and federated learning techniques focus on boosting classification accuracy, they overlook a critical unsolved problem: investigating the reproducibility of the most discriminative biomarkers (i.e., features) selected by the GNN models within a federated learning paradigm. Quantifying the reproducibility of a predictive medical model against perturbations of the training and testing data distributions is one of the biggest hurdles to overcome in developing translational clinical applications. To the best of our knowledge, this is the first work investigating the reproducibility of federated GNN models with application to classifying medical imaging and brain connectivity datasets. We evaluated our framework using various GNN models trained on medical imaging and connectomic datasets. More importantly, we showed that federated learning boosts both the accuracy and the reproducibility of GNN models in such medical learning tasks. Our source code is available at https://github.com/basiralab/reproducibleFedGNN.
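To make the two central notions concrete, the following is a minimal sketch, not the method used in this work. It assumes a FedAvg-style aggregation, in which each hospital shares only its model parameters and the server averages them weighted by local dataset size, and it assumes reproducibility can be approximated by the overlap between the top-k features of two models. The function names `federated_average` and `top_k_overlap`, and all numbers, are hypothetical and chosen for illustration only.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Average per-client parameter vectors, weighted by local dataset size.

    Assumption: a FedAvg-style rule; only parameters leave each hospital,
    never the raw data.
    """
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


def top_k_overlap(
    weights_a: Sequence[float], weights_b: Sequence[float], k: int
) -> float:
    """Fraction of features shared by the k largest-magnitude weights of two models.

    Assumption: feature importance is read off the absolute weight values.
    """
    def top(w: Sequence[float]) -> set:
        ranked = sorted(range(len(w)), key=lambda i: abs(w[i]), reverse=True)
        return set(ranked[:k])

    return len(top(weights_a) & top(weights_b)) / k


# Illustrative (made-up) feature weights learned at two hospitals.
hospital_a = [0.2, 0.9, 0.1, 0.7]
hospital_b = [0.4, 0.8, 0.0, 0.6]

global_weights = federated_average([hospital_a, hospital_b], [100, 300])
shared = top_k_overlap(hospital_a, hospital_b, k=2)
```

An overlap close to 1 would indicate that both models select the same features as most discriminative, which is the sense of reproducibility described above. The actual aggregation rule and reproducibility measure are defined in the paper and the linked repository.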
