Topic: Recent Advances in 3D Object and Hand Pose Estimation

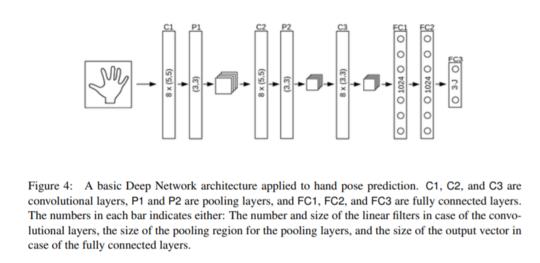

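Abstract: 3D object and hand pose estimation have great potential for augmented reality: they enable tangible interfaces and natural interfaces, and they blur the boundary between the real and virtual worlds. This article presents recent advances in camera-based 3D object and hand pose estimation, and discusses their capabilities and limitations as well as possible future developments in the field.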

