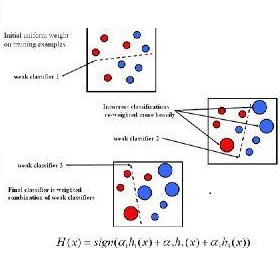

Domain adaptation aims to transfer the shared knowledge learned from a source domain to a new environment, i.e., a target domain. One common practice is to train the model on both labeled source-domain data and unlabeled target-domain data. Yet the learned models are usually biased due to the strong supervision of the source domain. Most researchers adopt an early-stopping strategy to prevent over-fitting, but when to stop training remains a challenging problem because no target-domain validation set is available. In this paper, we propose an efficient bootstrapping method, called AdaBoost Student, which explicitly learns complementary models during training and liberates users from empirical early stopping. AdaBoost Student combines deep model learning with a conventional training strategy, i.e., adaptive boosting, and enables interactions between the learned models and the data sampler. We adopt an adaptive data sampler to progressively facilitate learning on hard samples and aggregate "weak" models to prevent over-fitting. Extensive experiments show that (1) without the need to worry about the stopping time, AdaBoost Student provides a robust solution through efficient complementary model learning during training; and (2) AdaBoost Student is orthogonal to most domain adaptation methods and can be combined with existing approaches to further improve state-of-the-art performance. We achieve competitive results on three widely-used scene segmentation domain adaptation benchmarks.
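To make the two ingredients named in the abstract more concrete, the following is a minimal sketch of an adaptive sampler update and a weighted model aggregation in the spirit of classic adaptive boosting. It is not the paper's implementation: the function names, the soft per-sample error in [0, 1], the exponential re-weighting rule, and the weighted averaging of class probabilities are all assumptions chosen for illustration.

```python
import numpy as np


def model_weight_from_error(weighted_error, eps=1e-8):
    """Classic AdaBoost model weight: alpha = 0.5 * ln((1 - err) / err)."""
    err = np.clip(weighted_error, eps, 1.0 - eps)
    return 0.5 * np.log((1.0 - err) / err)


def update_sampling_weights(weights, per_sample_error, model_weight):
    """Raise the sampling probability of samples the current model finds hard.

    weights: current sampling distribution over training samples (sums to 1).
    per_sample_error: values in [0, 1]; higher means harder (assumed measure).
    model_weight: non-negative scalar alpha of the current model.
    """
    new_weights = weights * np.exp(model_weight * per_sample_error)
    return new_weights / new_weights.sum()


def aggregate_predictions(prob_list, alphas):
    """Weighted average of class-probability outputs from several models."""
    alphas = np.asarray(alphas, dtype=float)
    stacked = np.stack(prob_list)  # (num_models, num_samples, num_classes)
    return np.tensordot(alphas / alphas.sum(), stacked, axes=1)


# Hypothetical round: four samples, the current model fails only on the last.
weights = np.full(4, 0.25)
errors = np.array([0.0, 0.0, 0.0, 1.0])
alpha = model_weight_from_error(np.sum(weights * errors))
new_weights = update_sampling_weights(weights, errors, alpha)

# Hypothetical snapshots: two models, two samples, two classes.
p1 = np.array([[1.0, 0.0], [0.0, 1.0]])
p2 = np.array([[0.0, 1.0], [0.0, 1.0]])
ensemble = aggregate_predictions([p1, p2], alphas=[1.0, 3.0])
```

In a training loop, the updated distribution could drive the next round of sampling, for example through the `p` argument of `np.random.default_rng().choice`, so that hard samples are drawn more often while earlier model snapshots are kept for the final ensemble.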
