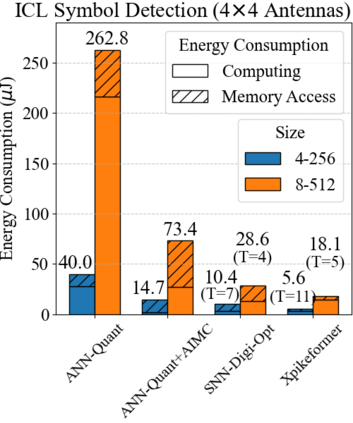

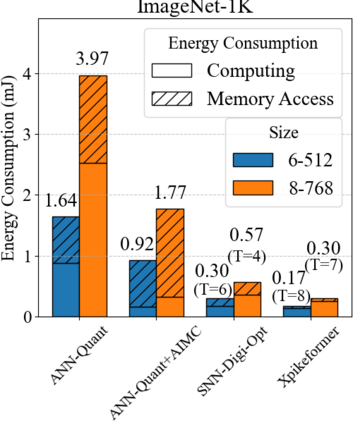

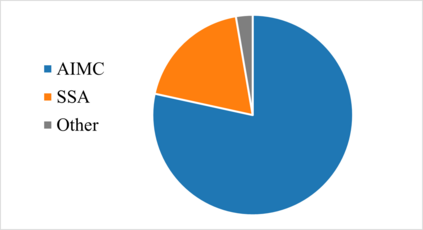

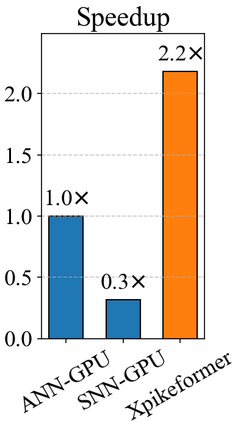

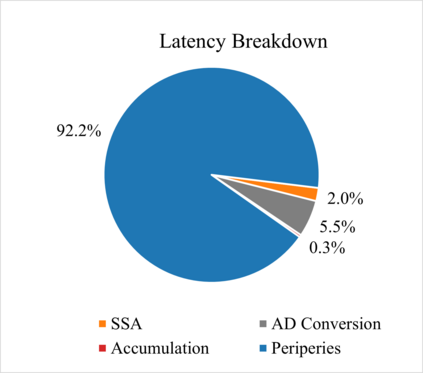

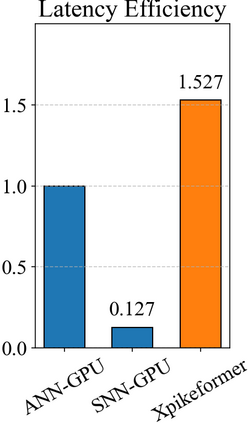

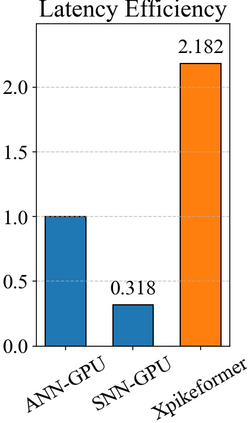

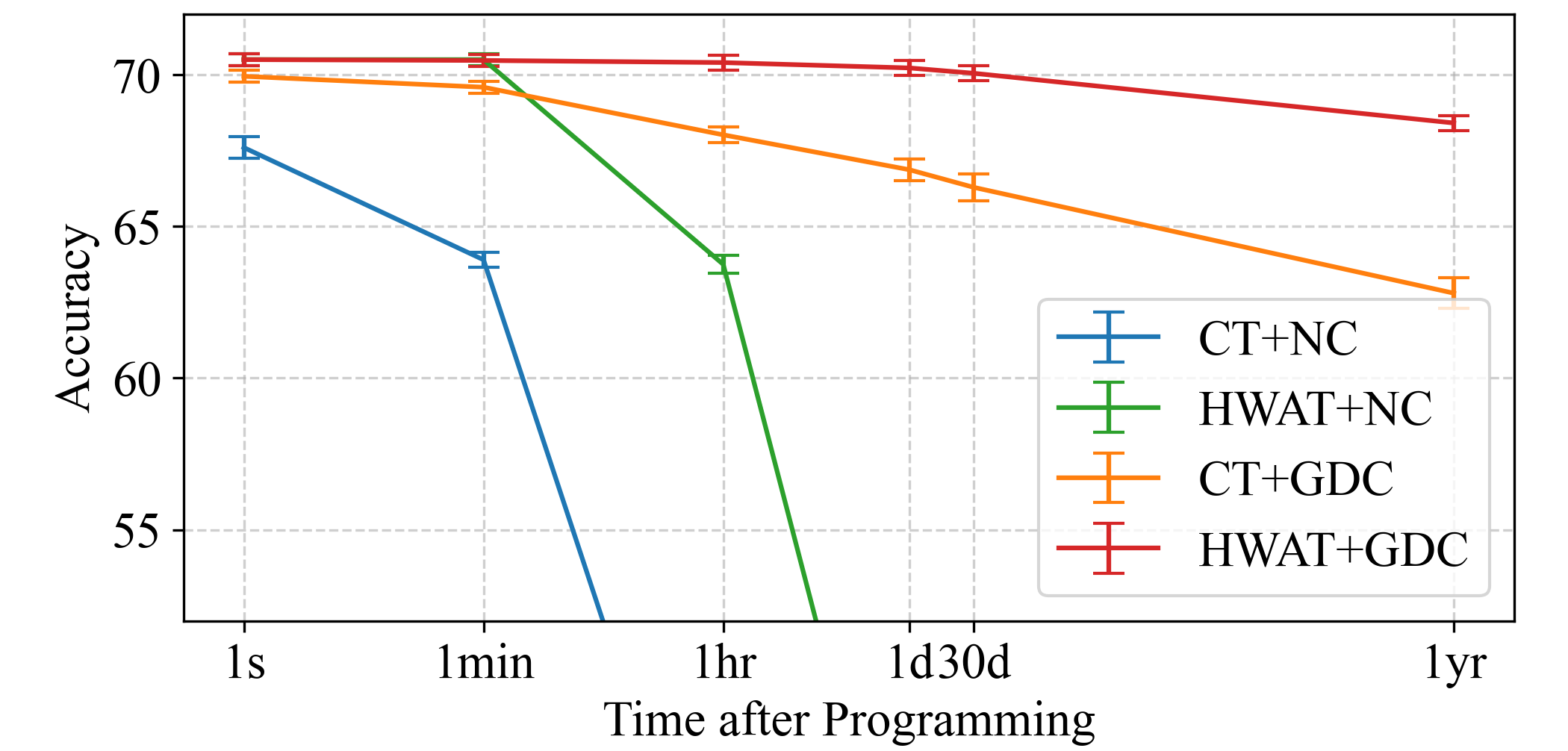

The integration of neuromorphic computing and transformers through spiking neural networks (SNNs) offers a promising path to energy-efficient sequence modeling, with the potential to overcome the energy-intensive nature of the artificial neural network (ANN)-based transformers. However, the algorithmic efficiency of SNN-based transformers cannot be fully exploited on GPUs due to architectural incompatibility. This paper introduces Xpikeformer, a hybrid analog-digital hardware architecture designed to accelerate SNN-based transformer models. The architecture integrates analog in-memory computing (AIMC) for feedforward and fully connected layers, and a stochastic spiking attention (SSA) engine for efficient attention mechanisms. We detail the design, implementation, and evaluation of Xpikeformer, demonstrating significant improvements in energy consumption and computational efficiency. Through image classification tasks and wireless communication symbol detection tasks, we show that Xpikeformer can achieve inference accuracy comparable to the GPU implementation of ANN-based transformers. Evaluations reveal that Xpikeformer achieves $13\times$ reduction in energy consumption at approximately the same throughput as the state-of-the-art (SOTA) digital accelerator for ANN-based transformers. Additionally, Xpikeformer achieves up to $1.9\times$ energy reduction compared to the optimal digital ASIC projection of SOTA SNN-based transformers.

翻译:暂无翻译