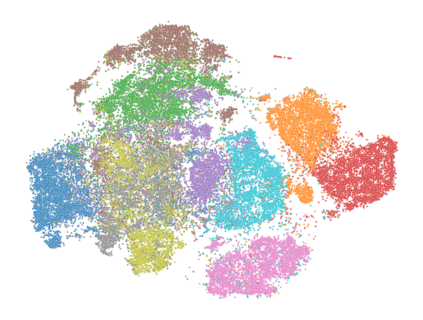

Self-supervised learning (SSL), including the mainstream contrastive learning, has achieved great success in learning visual representations without data annotations. However, most methods focus on instance-level information (i.e., the different augmented images of the same instance should have the same feature or cluster into the same class) and pay little attention to the relationships between different instances. In this paper, we introduce a novel SSL paradigm, termed the relational self-supervised learning (ReSSL) framework, that learns representations by modeling the relationships between different instances. Specifically, our method uses the sharpened distribution of pairwise similarities among different instances as a *relation* metric, which is then used to match the feature embeddings of different augmentations. Moreover, to boost performance, we argue that weak augmentations matter for representing a more reliable relation, and we leverage a momentum strategy for practical efficiency. Experimental results show that ReSSL significantly outperforms previous state-of-the-art algorithms in terms of both performance and training efficiency. Code is available at https://github.com/KyleZheng1997/ReSSL.
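The relation-matching idea can be illustrated with a minimal NumPy sketch. It is not the authors' implementation (see the repository linked above for that). It assumes the relation is a softmax over cosine similarities to a set of other-instance embeddings, that sharpening is done with a lower temperature on the weakly augmented (target) branch, and that matching is a cross-entropy between the two distributions. The names `relation`, `relational_loss`, `momentum_update`, and the values of `tau_student`, `tau_teacher`, and `m` are hypothetical choices for illustration.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length so dot products are cosine similarities."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def relation(embeddings, anchors, temperature):
    """Distribution of each embedding's similarity to other instances (anchors).

    A lower temperature gives a sharper distribution.
    """
    sims = l2_normalize(embeddings) @ l2_normalize(anchors).T
    return softmax(sims / temperature)

def relational_loss(student_emb, teacher_emb, anchors,
                    tau_student=0.1, tau_teacher=0.04):
    """Cross-entropy between the sharpened target relation and the predicted one.

    Assumption: teacher_emb comes from a weakly augmented view and
    student_emb from a more strongly augmented view of the same images.
    """
    target = relation(teacher_emb, anchors, tau_teacher)  # sharpened target
    pred = relation(student_emb, anchors, tau_student)
    return float(-(target * np.log(pred + 1e-12)).sum(axis=1).mean())

def momentum_update(teacher_w, student_w, m=0.99):
    """Exponential moving average of student weights into the teacher."""
    return m * teacher_w + (1.0 - m) * student_w

# Toy usage with random vectors standing in for encoder outputs.
rng = np.random.default_rng(0)
anchors = rng.normal(size=(16, 8))                       # embeddings of other instances
teacher = rng.normal(size=(4, 8))                        # weak-augmentation branch
student = teacher + 0.1 * rng.normal(size=(4, 8))        # other augmented view
loss = relational_loss(student, teacher, anchors)
```

In a training loop, only the student branch would receive gradients from this loss, while the teacher would be refreshed with `momentum_update` after each step; that split is again an assumption of this sketch.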
